Tap into the AI APIs of Google Chrome and Microsoft Edge

Google Chrome and Microsoft Edge are integrating on-device AI capabilities directly into the browser. Instead of sending data to a cloud service, web pages can perform tasks such as text summarization, language translation, and content generation using models that run entirely on the user’s local hardware. This makes AI features available to everyday web applications without a server-side inference backend.

The integration is facilitated by an experimental set of APIs, built upon the foundational Chromium project that underpins both browsers. This initiative signifies a broader trend in the tech industry: the increasing viability and power of locally hosted AI models. Traditionally, advanced AI inference required substantial computational resources, often necessitating powerful, dedicated hardware or reliance on cloud services. However, recent advancements have led to smaller, more efficient AI models that can perform complex tasks with remarkable accuracy, even on consumer-grade devices.

For developers and end-users alike, the challenge has been the infrastructure required to deploy and manage these local AI models. While applications like ComfyUI and LM Studio have emerged as solutions for running models locally, they often involve complex setup and ongoing maintenance. The new browser-based APIs offer a streamlined, integrated approach, embedding these AI functionalities directly within the web browsing environment. This means users can potentially summarize articles, translate web pages, or even generate text snippets without ever leaving their browser or requiring an internet connection for the AI processing itself.

The initial rollout of these features leverages distinct, yet comparably powerful, AI models. Google Chrome is utilizing its Gemini Nano model, a highly efficient on-device language model designed for mobile and desktop applications. Microsoft Edge, on the other hand, is employing Microsoft’s Phi-4-mini models, a family of small language models known for their strong performance relative to their size. While both browsers are built on the same underlying Chromium project and aim to standardize these AI capabilities, their current implementations and supported functionalities may vary.

A New Era of In-Browser AI: Available APIs and Functionality

As of April 2024, both Chrome and Edge browsers are rolling out a suite of AI APIs, with some differences in immediate availability. The core functionalities are designed to be accessible directly within the browser’s JavaScript environment, enabling web developers to seamlessly incorporate AI features into their websites and applications.

The primary AI APIs, all available in Chrome and partly available in Edge, are:

- Summarizer API: This API allows for the generation of concise summaries of longer texts. Users can specify the desired length and type of summary, such as a teaser, a tl;dr (too long; didn’t read) version, a headline, or key points. This is particularly useful for quickly grasping the essence of articles, documents, or lengthy web pages.

- Translation API: This feature enables real-time translation of text between various languages. It promises to break down language barriers on the web, making global content more accessible to a wider audience.

- Language Detector API: This API automatically identifies the language of a given text. This is a foundational component for other language-based AI tasks, such as translation, and can also be used for content filtering or routing.

While the Summarizer and Translation APIs are available in both Chrome and Edge, the Language Detector API is currently exclusive to Chrome. However, Microsoft has indicated plans to integrate this functionality into Edge in the future.
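As a rough sketch of how the two language APIs might be combined, the function below detects the language of a string and translates it only when needed. It assumes the API shapes described in Chrome's documentation at the time of writing: globals named `LanguageDetector` and `Translator`, a `detect()` method that resolves to candidates sorted by confidence, and a `create()` call that takes `sourceLanguage` and `targetLanguage`. The name `detectAndTranslate` and the `scope` parameter are illustrative only, and the shapes may differ in Edge or change as the APIs evolve.

```javascript
// Sketch only: assumes the LanguageDetector and Translator globals behave as
// described in Chrome's documentation. `scope` is a hypothetical parameter
// that makes the function testable outside a browser.
async function detectAndTranslate(text, targetLanguage, scope = globalThis) {
  // Either API may be missing (for example, in a browser without a detector).
  if (!('LanguageDetector' in scope) || !('Translator' in scope)) {
    return null;
  }

  const detector = await scope.LanguageDetector.create();
  // detect() is assumed to resolve to candidates ordered by confidence.
  const [best] = await detector.detect(text);

  // Nothing detected, or already in the target language: return text unchanged.
  if (!best || best.detectedLanguage === targetLanguage) {
    return text;
  }

  const translator = await scope.Translator.create({
    sourceLanguage: best.detectedLanguage,
    targetLanguage,
  });
  return translator.translate(text);
}
```

Returning `null` when either API is absent lets the calling page fall back to another approach rather than throwing.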

Beyond these core offerings, both browsers are also experimenting with additional AI capabilities that require explicit opt-in from users. These more experimental APIs include:

- Text Generation API: This API allows for the creation of new text based on a given prompt. It can be used for a variety of creative and practical applications, such as drafting emails, writing creative content, or generating code snippets.

- Text Classification API: This API categorizes text into predefined classes. It can be used for tasks like sentiment analysis, spam detection, or topic modeling.

- Question Answering API: This API enables users to ask questions about a given text and receive relevant answers. It transforms static documents into interactive knowledge bases.

The long-term ambition behind these experimental APIs is to see them evolve into general web standards, allowing for consistent AI functionality across all web browsers. However, for the present, their implementation is specific to Chrome and Edge, representing a competitive move by both tech giants to lead in the integration of AI into the everyday digital experience.

Putting Local AI to the Test: The Summarizer API in Action

To illustrate the practical application of these new browser-based AI capabilities, we can examine the Summarizer API. This API serves as a clear example of how developers can integrate AI functionalities into web pages, and its usage patterns are representative of the other available APIs.

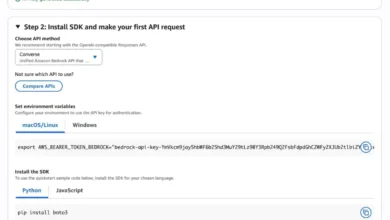

To use these APIs, a web page must be served through a local web server. Opening the page directly as a local file (file:// protocol) can trigger content restriction rules that interfere with these experimental APIs. A simple way to set up a local server is to use Python. If Python is installed, open a terminal in the directory containing the index.html file and run py -m http.server 8080 (on macOS or Linux, python3 -m http.server 8080). The page is then accessible at http://localhost:8080. Without the port argument, http.server listens on port 8000 instead.

The provided HTML structure for a summarization tool includes two primary textarea elements: one for inputting text and another for displaying the summarized output. Additional controls allow users to specify the type of summarization (e.g., "teaser," "tl;dr," "headline," "key-points") and the desired length of the summary ("short," "medium," "long"). A button labeled "Start" triggers the summarization process, and a div with the ID "log" is used to provide real-time feedback on the process.

The core logic resides within the script tag, specifically in the summarize() asynchronous function. This function orchestrates the entire summarization process, from checking API availability to displaying the results.

Step 1: Verifying API Availability

The first step in the summarize() function is to confirm that the Summarizer API is available in the browser’s global scope. This is done with the conditional statement: if (!('Summarizer' in self)). The inner parentheses are required: without them, the ! operator applies to the string 'Summarizer' first, and the check never fires. If the API is not present, a message is written to the log, and the function returns, preventing further execution.

Following this, the Summarizer.availability() method is called. This asynchronous call queries the browser to determine the status of the underlying AI model required for summarization. The returned status can indicate whether the model is ready for use, needs to be downloaded, or is unavailable for other reasons. This information is crucial for managing user expectations and providing appropriate feedback.
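The two checks described above can be sketched as a single helper. This is a minimal illustration, not the article's own code: the function name `getSummarizerStatus`, the `scope` parameter, and the `'unsupported'` label are assumptions made here. At the time of writing, Chrome's documentation lists `'unavailable'`, `'downloadable'`, `'downloading'`, and `'available'` as the values `availability()` can resolve to; treat that list as subject to change.

```javascript
// Sketch: feature-detect the Summarizer API, then ask for the model status.
// `scope` defaults to globalThis, the same object as `self` in a page.
async function getSummarizerStatus(scope = globalThis) {
  // Note the parentheses: !'Summarizer' in scope would negate the string first.
  if (!('Summarizer' in scope)) {
    return 'unsupported'; // our own label, not a value defined by the API
  }
  // availability() resolves to a status string describing the model state.
  return scope.Summarizer.availability();
}
```

A page could call `getSummarizerStatus()` on load and disable the "Start" button when the result is `'unsupported'` or `'unavailable'`.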

Step 2: Instantiating the Summarizer Object

Once the API’s availability is confirmed, the next step involves creating an instance of the Summarizer object. This is done using await Summarizer.create(...). The create method accepts a configuration object that allows developers to customize the summarization process:

- sharedContext: This parameter can be used to provide additional context or instructions to the AI model, potentially influencing the nature or focus of the summary.

- type: As mentioned, this parameter specifies the desired format of the summary (e.g., "teaser," "headline").

- length: This parameter controls the desired length of the generated summary.

- format: The output format can be specified, with ‘markdown’ being an option here, allowing for rich text output.

- monitor: This parameter accepts a callback function that can be used to monitor various events during the model’s operation, such as download progress. The downloadprogress event listener, for instance, provides updates on the percentage of the model that has been downloaded, offering valuable UI feedback to the user.
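A configuration object using these parameters might look like the sketch below. The wrapper name `createSummarizer`, the `onProgress` callback, and the `sharedContext` string are hypothetical. The code also assumes that the `downloadprogress` event exposes a `loaded` property as a fraction between 0 and 1, as Chrome's documentation describes at the time of writing.

```javascript
// Sketch: build a summarizer with the options discussed above.
// `SummarizerApi` is the global Summarizer object, passed in for testability.
async function createSummarizer(SummarizerApi, { type, length, onProgress }) {
  return SummarizerApi.create({
    // Hypothetical context string; adapt it to the content being summarized.
    sharedContext: 'This is an article from a technology news site.',
    type,
    length,
    format: 'markdown',
    // monitor receives an object that emits download events for the model.
    monitor(m) {
      m.addEventListener('downloadprogress', (event) => {
        // Assumes event.loaded is a fraction from 0 to 1.
        onProgress(Math.round(event.loaded * 100));
      });
    },
  });
}
```

In a page, this could be called as `await createSummarizer(Summarizer, { type: 'key-points', length: 'short', onProgress: (p) => console.log(p + '%') })`.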

Step 3: Streaming and Iterating Over the Output

A key feature of modern AI models is their ability to generate output in a streaming fashion, providing results incrementally rather than waiting for the entire process to complete. This is particularly important for user experience, as it offers visual feedback that the AI is actively working. The Summarizer API supports this through the summarizeStreaming() method.

The line const stream = summarizer.summarizeStreaming($input.value) starts summarizing the text in the input textarea, where $input.value is the content to be summarized. The method returns a stream that can be consumed asynchronously with a for await...of loop. Each chunk yielded by the loop is a portion of the generated summary. The chunks are appended to the output textarea ($output.value += chunk), so users see the summary being built in real time.

The process concludes with a "Finished." message logged, indicating that the summarization has been completed.
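The streaming loop can be isolated into a small helper, sketched below. The function name `streamSummary` and the `onChunk` callback are illustrative assumptions; only `summarizeStreaming()` and the `for await...of` pattern come from the example described above.

```javascript
// Sketch: consume the summary stream chunk by chunk.
// `onChunk` is a hypothetical callback, e.g. (c) => { $output.value += c; }
async function streamSummary(summarizer, text, onChunk) {
  let result = '';
  const stream = summarizer.summarizeStreaming(text);
  // Each iteration yields the next portion of the generated summary.
  for await (const chunk of stream) {
    result += chunk;
    onChunk(chunk);
  }
  // The complete summary, once the stream has ended.
  return result;
}
```

Keeping the DOM update in a callback means the same loop can feed a textarea, a log, or a test harness.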

Illustrative Example and User Experience

The provided example demonstrates a user inputting text into the left textarea and selecting desired summarization parameters. Upon clicking "Start," the browser begins the process. If the model is not yet downloaded, users will see progress updates in the "log" area, indicating the download status. Once downloaded, the summarization begins, and the output gradually appears in the right textarea.

The accompanying image depicts a typical output. The input text, likely a lengthy article, is transformed into a concise "tl;dr" summary. Crucially, the caption highlights that this entire process, from model execution to result generation, occurs locally on the user’s device, without any reliance on external servers. This has significant implications for privacy, speed, and offline usability.

Caveats and Future Outlook for Local AI in Browsers

While the integration of local AI APIs into Chrome and Edge is a significant step, several practical limitations are worth acknowledging.

Firstly, the initial download of the AI models themselves can be substantial, often in the gigabyte range. This initial download is a one-time event for each model, but it requires a stable internet connection and can take a considerable amount of time depending on the user’s bandwidth. Providing clear UI feedback during this download phase is paramount to a positive user experience. Developers should consider implementing mechanisms to notify users when a model is being downloaded and when it is ready for use, perhaps through progress bars or status indicators.
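One way to surface the download state is to map the model status to a user-facing message, as in the sketch below. The function name and the message wording are made up for illustration, and the status strings are the ones Chrome's documentation lists for `availability()` at the time of writing; they may differ in other browsers or future versions.

```javascript
// Sketch: turn a model status (and optional download percentage) into a
// message suitable for a status line or log element.
function describeModelStatus(status, percent = null) {
  switch (status) {
    case 'available':
      return 'Model ready.';
    case 'downloadable':
      return 'The model will be downloaded on first use. This may take a while.';
    case 'downloading':
      return percent === null
        ? 'Downloading model...'
        : `Downloading model: ${percent}%`;
    case 'unavailable':
      return 'On-device AI is not available on this device.';
    default:
      // Unknown values are reported as-is rather than hidden.
      return `Unknown model status: ${status}`;
  }
}
```

The `percent` argument could be fed from a `downloadprogress` listener so the message updates as the download proceeds.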

Management of these downloaded models is currently somewhat rudimentary. While Google Chrome offers a dedicated internal page, chrome://on-device-internals/, which lists loaded models and provides statistics, there is no direct programmatic interface within the JavaScript APIs to manage these models. Users can manually remove or inspect models through this internal page, but developers cannot currently automate these actions, and the only model status available to a page is what availability() reports. This lack of programmatic management could pose challenges for applications that require dynamic model switching or cleanup.

The inference process itself, even after the model is downloaded, may exhibit a noticeable delay between initiating the task and the appearance of the first output token. This "cold start" phenomenon is inherent in many AI model executions. Currently, the APIs do not provide granular feedback on what is happening during this initial inference period. This means developers should aim to provide at least a visual cue to the user that the process has commenced, such as a loading spinner or a message indicating that summarization is in progress, to avoid user frustration.
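A simple way to cover this gap is to show a busy indicator until the first chunk arrives, as sketched below. The `ui` object with its `setBusy()` and `append()` methods is hypothetical and stands in for whatever spinner or status message the page uses.

```javascript
// Sketch: keep a busy indicator visible until the first output chunk arrives.
// `ui` is a hypothetical object with setBusy(boolean) and append(string).
async function summarizeWithBusyIndicator(summarizer, text, ui) {
  ui.setBusy(true);
  let busy = true;
  try {
    for await (const chunk of summarizer.summarizeStreaming(text)) {
      if (busy) {
        // First chunk received: the delay before output is over.
        ui.setBusy(false);
        busy = false;
      }
      ui.append(chunk);
    }
  } finally {
    // Also clear the indicator if the stream was empty or threw an error.
    if (busy) ui.setBusy(false);
  }
}
```

The `finally` block ensures the indicator never stays on after a failure, which would otherwise look like a hung page.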

Despite these current limitations, the trajectory of browser-based local AI is undeniably promising. The current task-specific APIs are likely stepping stones toward more generalized AI functionalities. It is plausible that future iterations will see the emergence of more standardized APIs that abstract away the specifics of individual models and tasks, offering a more unified approach to on-device AI. This could pave the way for a rich ecosystem of AI-powered web applications that are both powerful and privacy-preserving, accessible to users regardless of their internet connectivity.

The implications of these developments are far-reaching. For businesses, it opens new avenues for enhancing user engagement and providing intelligent features directly within their web platforms without the ongoing costs and complexities of cloud-based AI infrastructure. For users, it means enhanced privacy, faster performance for AI tasks, and the ability to utilize these advanced capabilities even in offline scenarios. As these APIs mature and browser vendors continue to invest in on-device AI, the web is poised to become an even more intelligent and interactive environment.