The Perilous Cycle of AI Conversation: Why Context Anchoring is the Missing Piece in Developer Collaboration

When a complex software feature takes days of collaborative effort, developers have long relied on shared documents as external memory. These are not formal, exhaustive documentation but working records: the decisions made, the reasoning behind them, the alternatives rejected, and the questions still open. Because the record does the remembering, nobody needs perfect recall, and a teammate can pick up where a colleague left off even after an absence. AI coding assistants have quietly undermined this workflow, creating a "vicious cycle" in which valuable context is lost and the assistant becomes less effective.

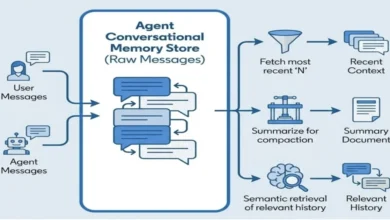

Today, the primary record of an AI-assisted development session is usually the chat history itself, which is ephemeral. Some AI tools now offer persistent memory, such as Claude's project memory, Cursor's rules files, or Copilot's workspace indexing, but these typically operate at the project level. They might recall that a project uses Fastify, yet they will not retain the feature-level reasoning that led to rejecting a RetryQueue abstraction yesterday. Critical constraints, design choices, and the logic behind them therefore live only in the chat log. Developers respond by keeping conversations open far longer than is productive, not because it is efficient but because they fear losing context that took real effort to build. As the conversation grows, it becomes unwieldy, and the AI's ability to recall earlier decisions deteriorates. The longer the session runs, the less reliable the very context it was kept alive to preserve: a feedback loop of diminishing returns.

A revealing test for any developer engaging with an AI coding assistant is simple: "Could I close this conversation right now and start a new one without anxiety?" If this question evokes discomfort, if there’s a palpable sense of losing something crucial, it signifies that the developer’s context is trapped within a medium fundamentally ill-equipped for long-term preservation.

The Erosion of Context: Understanding the AI’s Limitations

The degradation of context within AI conversations is not random; it is a direct consequence of how large language models (LLMs) process information. Every LLM operates with a finite "context window"—a hard limit on the number of tokens it can simultaneously consider. While modern models boast windows ranging from hundreds of thousands to over a million tokens, the sheer volume of data generated during a productive development session—code snippets, design discussions, rationales, and file contents—can quickly fill this space.

Empirical research corroborates what developers see in practice. "Lost in the Middle," a 2023 study by researchers from Stanford and UC Berkeley, showed that LLMs use information placed in the middle of a long context significantly less reliably than information at the beginning or end. Accuracy traced a U-shaped curve: highest when the relevant content appeared first or last, lowest when it was buried in between. The authors observed this pattern across the models they tested, including models designed for long contexts, so it is not a quirk of one product. In practice, the start of the context (where system-level instructions sit) and the most recent turns get the most reliable attention, while material in the middle competes for what remains.

This research describes positional degradation, but practical experience reveals a subtler failure mode: the reasoning behind decisions erodes faster than the decisions themselves. An AI might correctly recall that a project uses PostgreSQL yet forget why it was chosen over MongoDB, losing requirements such as the need for JSONB support, the team's existing operational expertise, or multi-tenancy demands that ruled out document stores. The AI then keeps honoring the stated decision while generating suggestions that contradict its intent, for example proposing schemas shaped for a document store that ignore PostgreSQL's relational strengths. The suggestion complies with the letter of the decision but is architecturally misguided.

The solution mirrors the strategies developers instinctively employ to augment their own cognitive abilities: externalize what matters. Preserve it outside the transient medium of the conversation.

Some AI tools try to mitigate this by automatically compacting or summarizing earlier history as the context window fills. That raises its own problems. Compaction is largely a "black box": developers cannot see what was kept verbatim, what was summarized, and what was silently dropped. A summarizer aims for overall coherence, not for the specific nuances a particular design decision depends on, and detailed explanatory reasoning is exactly the kind of content most vulnerable to compression. The "what" may survive; the "why" often does not. Relying on an opaque process to preserve critical information is not a strategy but an act of hope.

This gap is the missing piece in two techniques for aligning developer and AI. "Knowledge Priming" shares curated project context with the AI, and "Design-First collaboration" structures design conversations in sequential levels; both build a shared mental model between human and AI. Without a way to persist it, though, that alignment is only as durable as the conversation that created it, and it vanishes when the session ends. "Context anchoring" is the practice that makes this alignment durable.

Externalizing Memory: The Feature Document as a Living Record

The core of context anchoring lies in treating decision context as external state—a living document that exists independently of the conversation. This document captures decisions as they are made, serving as the authoritative reference for both human and AI across multiple sessions.

A feature document is not the same as the priming document described earlier, and the distinction is critical:

A priming document captures project-level context. This includes the tech stack, architectural patterns, naming conventions, and code examples. It is relatively stable, updated periodically, and shared across all features and sessions. Its purpose is to inform the AI: "Here is how this project operates."

A feature document, conversely, captures feature-level context. It details the specific decisions made during development, the constraints that shaped those decisions, what was considered and rejected, outstanding questions, and the current state of progress. This document evolves rapidly, potentially after each session. Its purpose is to inform the AI: "Here is where we are on this specific piece of work, and how we arrived at this point."

Together, these two layers form a comprehensive context strategy. Upon initiating a new session, both are loaded: the priming document provides the stable foundational knowledge, and the feature document offers the detailed history of the specific task at hand. The priming document furnishes the vocabulary, while the feature document provides the narrative.
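The loading step can be sketched as a small helper that concatenates both layers into the opening message of a new session. This is a minimal TypeScript sketch, assuming both documents are plain markdown strings (for example, files kept in the repository); `buildSessionContext` and `ContextLayers` are hypothetical names, not part of any AI tool's API.

```typescript
// Hypothetical shape: both layers are plain markdown strings,
// e.g. files kept next to the code in the repository.
interface ContextLayers {
  priming: string; // project level: stack, patterns, conventions
  feature: string; // feature level: decisions, constraints, open questions, progress
}

// Assembles the opening message for a fresh AI session.
function buildSessionContext(layers: ContextLayers, task: string): string {
  // Vocabulary first, then the narrative, then this session's request,
  // so the task is the last (most recent) thing the model reads.
  return [
    "## Project context (priming document)",
    layers.priming.trim(),
    "## Feature context (feature document)",
    layers.feature.trim(),
    "## Task for this session",
    task.trim(),
  ].join("\n\n");
}

// Usage with illustrative content drawn from the notification-service example.
const opening = buildSessionContext(
  {
    priming: "Node.js service built on Fastify. Functional services, no classes.",
    feature: "Decision: use BullMQ directly for retries. Rejected: RetryQueue wrapper.",
  },
  "Implement the SendGrid email sender.",
);
console.log(opening);
```

Loading both documents at the start of a fresh session also places them at the beginning of the context, the position where the "Lost in the Middle" findings suggest recall is most reliable.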

A common objection arises: given that modern AI tools like Cursor and Copilot can directly access and read entire codebases, why is a separate document necessary? The answer lies in the fundamental difference between outcomes and reasoning. A codebase that directly implements a solution, such as using BullMQ for retry handling, offers no insight into whether a RetryQueue abstraction was initially proposed, debated, and deliberately rejected. It also fails to reveal the constraints that drove the final decision or any open questions that remain. The rejected alternative, the driving constraint, and the unresolved issues are all invisible within the code itself.

The approach has a practical byproduct. A concise feature document, perhaps fifty lines long, captures decision context that thousands of lines of implementation code cannot convey, at a fraction of the tokens that loading that code would cost. Token efficiency is not the main reason to keep a feature document (preserving reasoning is), but the saving compounds: the document is loaded at the start of every session, by every developer on the feature, so its cost advantage grows with scale.

This practice addresses the same gap Michael Nygard identified when he proposed Architecture Decision Records (ADRs) in 2011. Code shows what was built, but not what was rejected, which constraints shaped the choices, which trade-offs were accepted, or what remains unresolved. ADRs exist because experienced engineers recognized that the reasoning behind code is often as valuable as the code itself, if not more so, and far more fragile. The feature document serves the same purpose for AI collaboration: a living ADR that evolves in real time as decisions are made, rather than a retrospective documentation exercise. For teams already using ADRs, the feature document is an ADR in progress, and significant decisions graduate to formal ADRs when the feature is complete. For teams new to ADRs, it offers a natural entry point: a lighter-weight, iterative, and immediately practical way to capture essential context.
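Graduating a decision to a formal ADR can be as mechanical as reformatting it. The sketch below assumes a hypothetical `Decision` record shape and a hypothetical `toAdr` helper; it emits the five sections Nygard described (Title, Context, Decision, Status, Consequences), with rejected alternatives folded into the context. The sample content paraphrases the BullMQ decision from the notification-service example.

```typescript
// Hypothetical shape of one significant decision in a feature document.
interface Decision {
  title: string;
  context: string; // forces and constraints that shaped the choice
  decision: string; // what was chosen
  rejected: string[]; // alternatives considered and turned down
  consequences: string; // trade-offs accepted
}

// Renders the decision with the five sections Nygard described:
// Title, Context, Decision, Status, Consequences.
function toAdr(num: number, d: Decision, status = "Accepted"): string {
  const rejectedNote =
    d.rejected.length > 0
      ? `\n\nAlternatives rejected: ${d.rejected.join("; ")}.`
      : "";
  return [
    `# ${num}. ${d.title}`,
    `## Context\n\n${d.context}${rejectedNote}`,
    `## Decision\n\n${d.decision}`,
    `## Status\n\n${status}`,
    `## Consequences\n\n${d.consequences}`,
  ].join("\n\n");
}

const adr = toAdr(1, {
  title: "Use BullMQ directly for notification retries",
  context: "Failed notification sends must be retried.",
  decision: "Call BullMQ directly, with no wrapper abstraction.",
  rejected: ["RetryQueue wrapper abstraction"],
  consequences: "Retry logic depends on BullMQ's API directly.",
});
console.log(adr);
```

Keeping the rejected alternatives in the ADR preserves exactly the information the code alone cannot show.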

This approach also covers a dimension that tools built around a single user's sessions tend to miss: team coordination. When several developers work on the same feature, each in their own AI sessions, the feature document becomes the shared record. Decisions Developer A makes with AI assistance in one session are available to the session Developer B starts independently. Without that shared document, Developer B's AI might re-propose an abstraction Developer A has already rejected. The shared mental model thus extends beyond a single human-AI pair to the whole team, across sessions and over time. The feature document outlasts any single context window.
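Because rejected alternatives are written down, a re-proposal can be caught before anyone acts on it. A minimal sketch, assuming the feature document's rejected alternatives are available as a list of records; `RejectedAlternative` and `findPriorRejection` are hypothetical names, and the case-insensitive substring match is deliberately crude.

```typescript
// Hypothetical record of an alternative that was considered and rejected.
interface RejectedAlternative {
  name: string;
  reason: string;
  decidedBy: string; // who recorded the rejection
}

// Returns the earlier rejection if a new proposal mentions an alternative
// the team already turned down, otherwise undefined.
function findPriorRejection(
  proposal: string,
  rejected: RejectedAlternative[],
): RejectedAlternative | undefined {
  const p = proposal.toLowerCase();
  return rejected.find((r) => p.includes(r.name.toLowerCase()));
}

const rejected: RejectedAlternative[] = [
  {
    name: "RetryQueue",
    reason: "Wrapper rejected in favor of using BullMQ directly",
    decidedBy: "Developer A",
  },
];

// Developer B's session proposes the same abstraction again.
const hit = findPriorRejection("Add a RetryQueue class around BullMQ", rejected);
if (hit) {
  console.log(`Already rejected by ${hit.decidedBy}: ${hit.reason}`);
}
```

In practice the check is usually just the AI reading the document, but the principle is the same: the rejection is data, not a memory.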

Practical Implementation: The Feature Document in Action

The notification service from the Design-First example illustrates the concept. The design conversation (confirming capabilities, debating components, agreeing on contracts) produced a set of critical decisions: use BullMQ directly for retries, with no wrapper abstraction; write functional services rather than classes, to match codebase conventions; support only email in version 1; and use SendGrid for email delivery. Crucially, the reasoning behind each decision was recorded alongside the current constraints, the open questions, and the state of implementation.

The resulting document was under fifty lines. It avoided formal templates in favor of a practical working record: decisions and their rationale, current constraints, open questions, and a simple checklist of completed and outstanding tasks. That minimal structure was enough to capture the essential state without turning into documentation for its own sake.
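The four-part structure can be sketched as a small data type plus a renderer that produces the markdown shared at the start of a session. This is an illustrative TypeScript sketch: `FeatureDoc` and `renderFeatureDoc` are hypothetical names, the decisions come from the notification-service example, text in angle brackets is a placeholder for rationale the source does not spell out, and the tasks are hypothetical.

```typescript
// Hypothetical shape of the four parts of the working record.
interface FeatureDoc {
  feature: string;
  decisions: { what: string; why: string }[];
  constraints: string[];
  openQuestions: string[];
  tasks: { task: string; done: boolean }[];
}

// Bullet list helper; an empty section is shown explicitly, not omitted.
function bullets(items: string[]): string {
  return items.length > 0 ? items.map((i) => `- ${i}`).join("\n") : "- (none)";
}

// Renders the record as short markdown for pasting into a new session.
function renderFeatureDoc(doc: FeatureDoc): string {
  return [
    `# Feature: ${doc.feature}`,
    "## Decisions",
    bullets(doc.decisions.map((d) => `${d.what}. Why: ${d.why}`)),
    "## Constraints",
    bullets(doc.constraints),
    "## Open questions",
    bullets(doc.openQuestions),
    "## Progress",
    doc.tasks.length > 0
      ? doc.tasks.map((t) => `- [${t.done ? "x" : " "}] ${t.task}`).join("\n")
      : "- (none)",
  ].join("\n\n");
}

const doc: FeatureDoc = {
  feature: "Notification service",
  decisions: [
    { what: "Use BullMQ directly for retries, no wrapper", why: "<rationale from design session>" },
    { what: "Functional services, not classes", why: "Matches codebase conventions" },
    { what: "Email only in v1", why: "<rationale>" },
    { what: "SendGrid for email delivery", why: "<rationale>" },
  ],
  constraints: ["<constraint identified during design>"],
  openQuestions: [],
  tasks: [
    { task: "Design conversation", done: true },
    { task: "SendGrid email sender", done: false },
  ],
};
console.log(renderFeatureDoc(doc));
```

Showing an empty section as "(none)" tells the AI that there are no open questions, rather than leaving it to guess whether the section was forgotten.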

Consider the practical benefit at the start of a third session. Instead of spending forty-five minutes reconstructing the prior conversation—reiterating the tech stack, re-establishing design decisions, and restating constraints—the developer could simply share the feature document. Within thirty seconds, the AI would possess full alignment. This is not because the AI "remembered" previous sessions, but because the decisions had been externalized into a readily digestible format. Each new session became a "warm start" rather than a "cold boot," with the shared mental model being loaded, not rebuilt.

In practice, updates to the feature document occurred at natural pause points: the conclusion of a design level, the making of a significant decision, or the resolution of an open question. Sometimes, the developer authored the update directly. Other times, the AI was prompted to summarize a decision and its reasoning, which was then edited into the document. The effort was minimal—a few lines added after each significant development—rather than a burdensome documentation exercise.
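The two most common updates, recording a decision and resolving an open question, can be sketched as pure functions over the document. This assumes the feature document is kept as structured data; `recordDecision` and `resolveQuestion` are hypothetical helpers, and the sample question is invented for illustration.

```typescript
// Hypothetical minimal slice of a feature document.
interface FeatureDoc {
  decisions: { what: string; why: string }[];
  openQuestions: string[];
}

// Adds a decision and its reasoning. Returns a new object instead of
// mutating, so the previous version stays intact for diffing.
function recordDecision(doc: FeatureDoc, what: string, why: string): FeatureDoc {
  return { ...doc, decisions: [...doc.decisions, { what, why }] };
}

// Removes a resolved question and records the answer as a decision,
// so the reasoning behind the resolution is kept.
function resolveQuestion(
  doc: FeatureDoc,
  question: string,
  answer: string,
  why: string,
): FeatureDoc {
  if (!doc.openQuestions.includes(question)) {
    throw new Error(`Not an open question: ${question}`);
  }
  const remaining = doc.openQuestions.filter((q) => q !== question);
  return recordDecision({ ...doc, openQuestions: remaining }, answer, why);
}

// Hypothetical question; the "why" stands in for a summary the AI drafted
// and the developer edited.
const before: FeatureDoc = { decisions: [], openQuestions: ["Which email provider?"] };
const after = resolveQuestion(
  before,
  "Which email provider?",
  "Use SendGrid for email delivery",
  "<AI-drafted summary, edited by the developer>",
);
console.log(after);
```

Turning a resolved question into a decision, rather than simply deleting it, keeps the "why" in the document.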

A secondary benefit, initially unanticipated, was the streamlining of the developer’s own thinking. The act of writing down why a particular choice was made—such as opting for direct BullMQ integration over a wrapper—forced clear articulation of the reasoning. This process occasionally revealed weaknesses in the initial rationale, serving as a "forcing function" for clarity in decision-making. The document was not merely external memory for the AI; it became a tool for introspection and refinement for the human developer.

Over the course of three sessions, the feature document evolved organically. New decisions were added, open questions were resolved, and the implementation state progressed. If a colleague joined the feature development, or a new AI session was initiated, this document provided immediate context, allowing them to grasp days of work in minutes, eliminating repetition and re-explanation. The document effectively carried the shared understanding forward.

Calibration: When Context Anchoring is Essential

Context anchoring is not a universal requirement. How much of it is worth doing depends on the scope of the work:

- Quick questions and single utilities: For brief, single-purpose interactions, the conversation is short enough that context decay is irrelevant. The overhead of maintaining a document is not justified.

- Single-session features (under an hour): For features completed within a single session, lightweight capture of key decisions and the current state may be worthwhile if there’s a possibility of revisiting the work later. A few bullet points are often sufficient to enable a restart.

- Multi-day features spanning multiple sessions: This is where full context anchoring, via a detailed feature document, becomes indispensable. The cost of lost context—measured in hours of re-explanation and potential misdirection—far outweighs the minimal effort of maintaining the document. This scenario is precisely where the "vicious cycle" of clinging to lengthy conversations is most likely to emerge, and where externalizing decisions offers the most effective break.

- Features involving multiple developers: When several developers contribute to the same feature, each utilizing independent AI sessions, the feature document acts as a crucial coordination tool. It ensures that decisions made by one developer’s AI are known to another’s, preventing redundant work or the re-introduction of previously rejected ideas.
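The calibration above can be condensed into a small decision function. This is one reading of the list, not a rule from the source: `anchoringLevel` and `WorkProfile` are hypothetical names, and a single session longer than an hour, which the list does not cover, is treated like any other single-session work.

```typescript
type AnchoringLevel = "none" | "lightweight" | "full";

// Hypothetical description of a piece of work.
interface WorkProfile {
  sessions: number; // expected number of AI sessions
  developers: number; // developers running their own AI sessions on the feature
  mayRevisit: boolean; // could the work be picked up again later?
}

function anchoringLevel(w: WorkProfile): AnchoringLevel {
  // Multi-session or multi-developer work: keep a full feature document.
  if (w.sessions > 1 || w.developers > 1) return "full";
  // Single session: a few bullet points, only if the work may be revisited.
  if (w.mayRevisit) return "lightweight";
  // Quick questions and one-off utilities: the conversation is enough.
  return "none";
}

console.log(anchoringLevel({ sessions: 3, developers: 1, mayRevisit: true }));
```

Either condition alone (several sessions or several developers) is enough to justify a full feature document, because each one independently creates a context that no single conversation holds.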

The litmus test for effective context anchoring returns here: if closing a chat session and starting a new one can be done without anxiety, without the fear of losing irretrievable information, then the context is properly anchored. If the impulse is to keep the session alive indefinitely, that discomfort is a clear signal that decisions are residing solely within the conversation, a medium fundamentally unsuited for permanent storage.

Conclusion: From Chat-Driven to Document-Driven Development

At its core, the practice of context anchoring represents a fundamental shift from "chat-driven development" to "document-driven development." The conversation remains the dynamic medium for making decisions, but the document becomes the enduring record. Conversations, by their nature, are often disposable—they are where thinking occurs, not where conclusions are permanently stored. The document, conversely, persists.

The shared mental model between human and AI need not be transient. It can be documented, made durable, and readily shareable. When combined with preceding techniques—such as sharing curated project context before a session begins and structuring design conversations in sequential levels before any code is written—context anchoring completes a progression: static context, dynamic alignment, and persistent decisions. Each layer builds upon the last, creating a robust framework for AI-assisted development.

The simplest, most practical measure of success is straightforward: close the session. Start fresh. If this action feels effortless—if restarting costs thirty seconds of document sharing rather than thirty minutes of re-explanation—then the context is where it belongs: outside the ephemeral conversation, in a form that both human and AI can readily access, anytime. This transition from fleeting chat to persistent documentation is not merely an improvement; it is a necessity for unlocking the full potential of AI collaboration in software development.