AWS Introduces Claude Opus 4.7 on Amazon Bedrock, Elevating AI Capabilities for Enterprise Workloads

Amazon Web Services (AWS) has announced that Claude Opus 4.7, Anthropic’s latest and most advanced AI model, is now available in Amazon Bedrock. The release promises better performance across critical enterprise applications, including complex coding tasks, long-running agent operations, and demanding professional workflows. With Claude Opus 4.7 on Bedrock, businesses can reach a frontier generative AI model through a managed AWS service.

Claude Opus 4.7: A Leap Forward in AI Performance

Claude Opus 4.7, as detailed by Anthropic, represents a substantial upgrade from its predecessor, Opus 4.6. The model is engineered to exhibit superior capabilities in navigating ambiguity, executing more thorough problem-solving, and adhering to intricate instructions with greater precision. These advancements are particularly impactful for businesses relying on AI for complex decision-making, content generation, and sophisticated task automation. The model’s enhanced understanding and execution are expected to translate into more reliable and efficient AI-powered solutions.

Claude Opus 4.7 on Amazon Bedrock runs on Bedrock’s next-generation inference engine, which is designed to provide enterprise-grade infrastructure for production workloads. The engine adds new scheduling and scaling logic that dynamically allocates capacity to incoming requests. This improves availability, especially for steady-state workloads, while leaving room for services that scale up rapidly.

A crucial aspect of this deployment, particularly for businesses handling sensitive information, is the commitment to privacy. Amazon Bedrock ensures "zero operator access," meaning that customer prompts and the AI’s responses are never visible to Anthropic or AWS operators. This feature is paramount for organizations in regulated industries or those dealing with proprietary data, reinforcing trust and security in the use of generative AI.

Enhancements Across Key Enterprise Workflows

Anthropic highlights that Claude Opus 4.7 demonstrates notable improvements across various workflows crucial for businesses operating in production environments. These include:

- Agentic Coding: The model’s enhanced coding capabilities are expected to accelerate software development cycles, assist in debugging, and generate more sophisticated code snippets. This could lead to faster time-to-market for new applications and features.

- Knowledge Work: For tasks involving research, summarization, and analysis of large volumes of text, Claude Opus 4.7’s improved comprehension and reasoning skills can significantly boost productivity. This is particularly beneficial for legal, financial, and research professionals.

- Visual Understanding: While not explicitly detailed in the announcement, the mention of "visual understanding" suggests potential advancements in the model’s ability to interpret and process image-based data, opening new avenues for AI applications in areas like medical imaging analysis or quality control.

- Long-Running Tasks: The ability to handle extended tasks without degradation in performance is critical for complex simulations, lengthy data processing, or continuous monitoring applications. Claude Opus 4.7’s improved stability in such scenarios provides a significant advantage.

The model’s enhanced ability to "work better through ambiguity" is a testament to its improved contextual understanding and reasoning. This allows it to provide more nuanced and accurate responses even when faced with incomplete or imprecise input. Furthermore, its more thorough problem-solving approach ensures that solutions are comprehensive and well-considered, while its precise instruction following minimizes the need for iterative refinement.

Getting Started with Claude Opus 4.7 on Amazon Bedrock

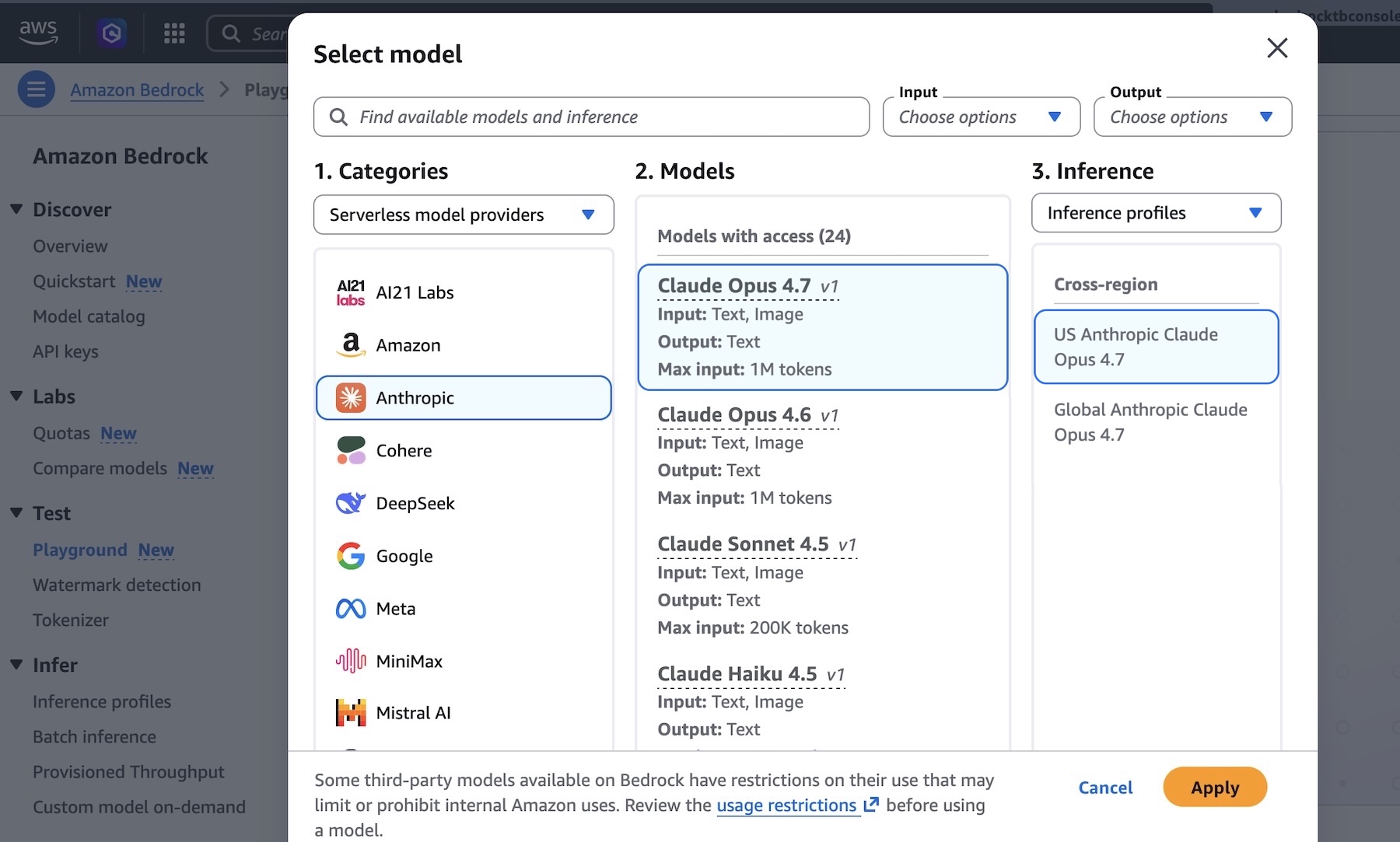

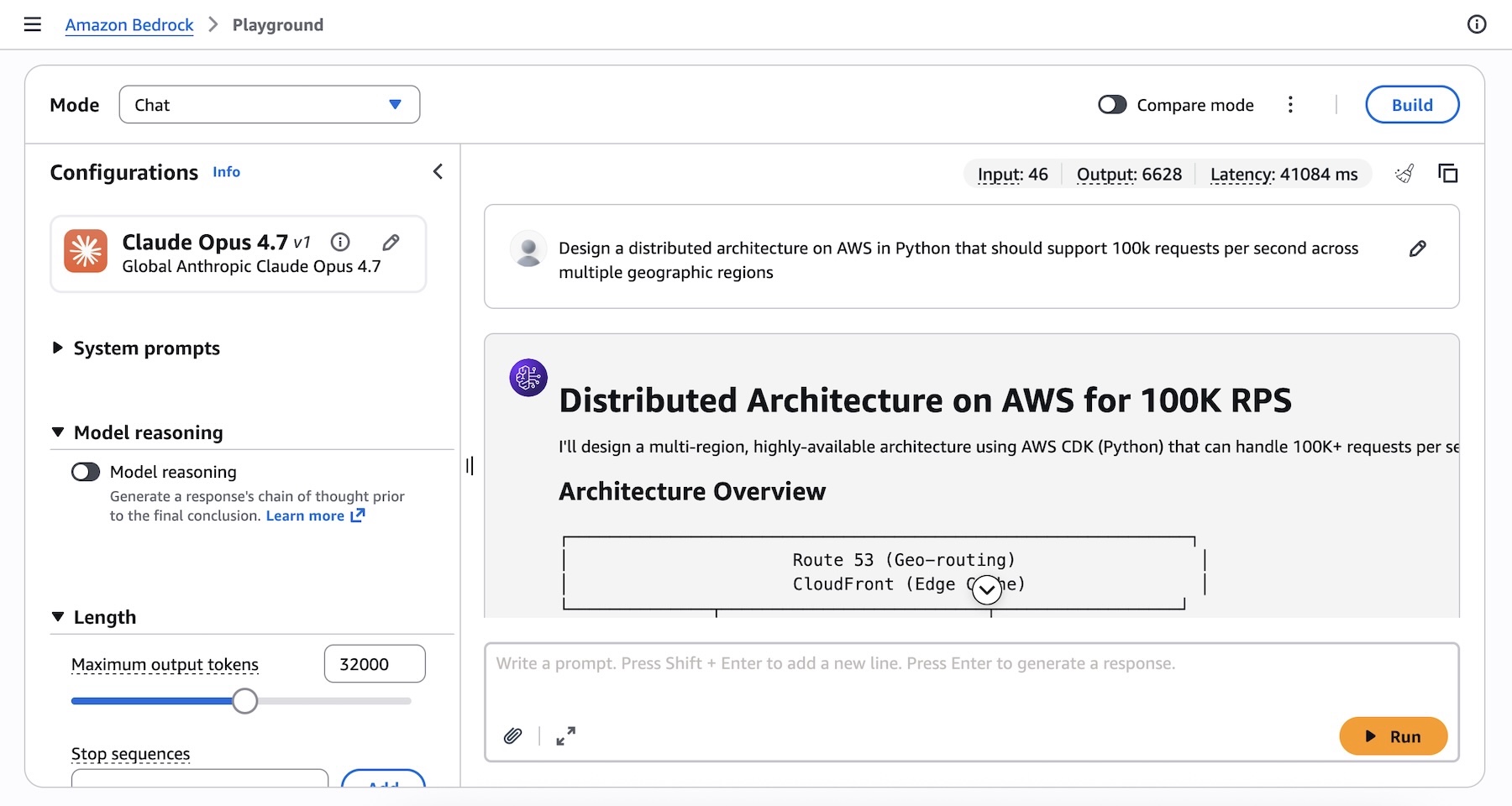

AWS is making it straightforward for developers and businesses to begin utilizing Claude Opus 4.7. The model is readily accessible through the Amazon Bedrock console. Users can navigate to the "Playground" section under the "Test" menu and select "Claude Opus 4.7" from the model options. This interactive environment allows for immediate testing of prompts and exploration of the model’s capabilities.

For instance, a user might input a complex prompt such as: "Design a distributed architecture on AWS in Python that should support 100k requests per second across multiple geographic regions." The output from Claude Opus 4.7 in response to such a prompt is expected to be detailed and architecturally sound, showcasing its advanced capabilities.

Beyond the console, Claude Opus 4.7 can be called programmatically. Developers can use the Anthropic Messages API to call bedrock-runtime through the Anthropic SDK or the bedrock-mantle endpoints. Alternatively, they can keep using the Invoke and Converse APIs on bedrock-runtime through the AWS Command Line Interface (AWS CLI) and the AWS SDKs.
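As a rough illustration of the Converse API route, the following Python sketch uses the AWS SDK for Python (boto3). The helper names `build_converse_request`, `extract_text`, and `ask` are hypothetical and chosen for this example only. The model ID is the one used in this article's samples, and the `max_tokens` default is an arbitrary assumption.

```python
MODEL_ID = "us.anthropic.claude-opus-4-7"  # model ID as it appears in this article's samples


def build_converse_request(prompt, max_tokens=1024):
    """Return keyword arguments for the bedrock-runtime converse() call."""
    return {
        "modelId": MODEL_ID,
        # Converse expects each message's content as a list of content blocks.
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }


def extract_text(response):
    """Join the text blocks of a Converse response into one string."""
    blocks = response["output"]["message"]["content"]
    return "".join(block["text"] for block in blocks if "text" in block)


def ask(prompt, region="us-east-1"):
    """Send one prompt and return the reply text (requires AWS credentials)."""
    import boto3  # imported lazily so the helpers above work without boto3 installed

    client = boto3.client("bedrock-runtime", region_name=region)
    return extract_text(client.converse(**build_converse_request(prompt)))
```

With valid credentials and model access, `ask("...")` would return the model's reply as a string. Keeping request construction and response parsing in separate functions makes both easy to check without a network call.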

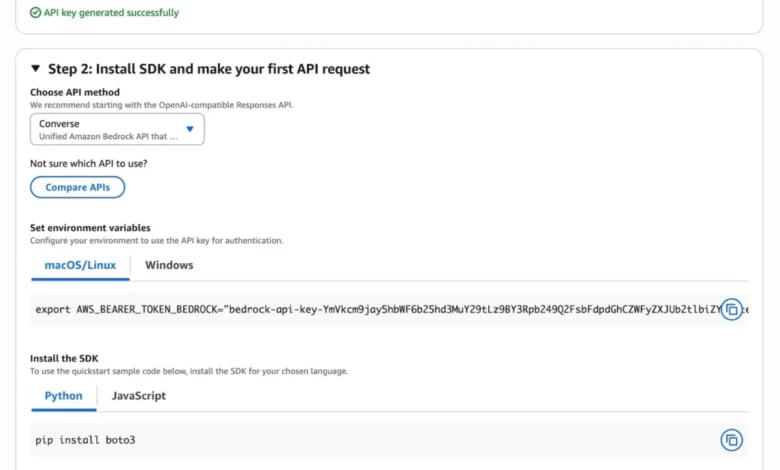

AWS also provides a "Quickstart" guide within the Bedrock console that helps users make their first API call within minutes. After selecting a use case, users can generate a short-term API key to authenticate test requests. For users who prefer an OpenAI-compatible interface, Bedrock also offers sample code for making inference requests.

Code and CLI Examples for Developers

To facilitate direct integration, AWS has provided concrete code samples:

Python SDK Example (using anthropic[bedrock]):

    from anthropic import AnthropicBedrockMantle

    # Initialize the Bedrock Mantle client (uses SigV4 auth automatically)
    mantle_client = AnthropicBedrockMantle(aws_region="us-east-1")

    # Create a message using the Messages API
    message = mantle_client.messages.create(
        model="us.anthropic.claude-opus-4-7",
        max_tokens=32000,
        messages=[
            {
                "role": "user",
                "content": "Design a distributed architecture on AWS in Python that should support 100k requests per second across multiple geographic regions",
            }
        ],
    )

    # Print the text of the first content block in the reply
    print(message.content[0].text)

AWS CLI Example:

    aws bedrock-runtime invoke-model \
        --model-id us.anthropic.claude-opus-4-7 \
        --region us-east-1 \
        --body '{"anthropic_version":"bedrock-2023-05-31", "messages": [{"role": "user", "content": "Design a distributed architecture on AWS in Python that should support 100k requests per second across multiple geographic regions."}], "max_tokens": 32000}' \
        --cli-binary-format raw-in-base64-out \
        invoke-model-output.txt

These examples give developers a starting point for incorporating Claude Opus 4.7 into their workflows, enabling rapid prototyping and deployment.
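The Invoke API request used by the CLI command can also be built and sent from Python. The sketch below is a minimal illustration using boto3, not an official sample. The helper names are hypothetical, and the response parsing assumes the usual Messages-format reply, in which `content` is a list of typed blocks.

```python
import json

MODEL_ID = "us.anthropic.claude-opus-4-7"  # model ID as it appears in this article's samples


def build_invoke_body(prompt, max_tokens=32000):
    """Serialize the same JSON body that the CLI example passes via --body."""
    return json.dumps({
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    })


def parse_invoke_output(raw):
    """Extract the reply text from a Messages-format response (str or bytes)."""
    payload = json.loads(raw)
    return "".join(
        block["text"]
        for block in payload.get("content", [])
        if block.get("type") == "text"
    )


def invoke(prompt, region="us-east-1"):
    """Call InvokeModel and return the reply text (requires AWS credentials)."""
    import boto3  # imported lazily so the helpers above work without boto3 installed

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.invoke_model(
        modelId=MODEL_ID,
        body=build_invoke_body(prompt),
        contentType="application/json",
        accept="application/json",
    )
    return parse_invoke_output(response["body"].read())
```

The same `parse_invoke_output` helper can be applied to the contents of `invoke-model-output.txt`, the file the CLI command writes the response body to.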

Advanced Features and Considerations

For advanced users seeking to extract maximum value from Claude Opus 4.7, AWS points to the "Adaptive thinking" feature. This capability allows the model to dynamically allocate its "thinking" token budget based on the complexity of each request. This intelligent resource allocation can lead to more efficient processing of complex queries and potentially reduce inference costs for simpler tasks.
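The article does not show how adaptive thinking is requested. The sketch below is therefore an assumption rather than a confirmed recipe: it adds a `thinking` field of `{"type": "adaptive"}` to the Messages-format request body, following the field naming in Anthropic's Messages API documentation. Verify the field against the current Bedrock and Anthropic documentation before relying on it. The helper names are hypothetical.

```python
def build_body(prompt, max_tokens=32000, adaptive=True):
    """Build a Messages-format request body, optionally enabling adaptive thinking."""
    body = {
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    if adaptive:
        # ASSUMPTION: field name and value are taken from Anthropic's Messages API
        # documentation on adaptive thinking; they are not shown in this article.
        body["thinking"] = {"type": "adaptive"}
    return body


def split_blocks(payload):
    """Separate thinking blocks from text blocks in a Messages-format response."""
    content = payload.get("content", [])
    thinking = [b.get("thinking", "") for b in content if b.get("type") == "thinking"]
    text = [b.get("text", "") for b in content if b.get("type") == "text"]
    return thinking, text
```

Because the model decides how much to think for each request, a response may contain no thinking blocks at all. `split_blocks` handles that case by returning an empty list.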

Users are also advised that while Claude Opus 4.7 is a significant upgrade, some prompting adjustments and "harness tweaks" may be necessary to fully capitalize on its enhanced capabilities. Anthropic provides a comprehensive prompting guide to assist users in optimizing their interactions with the model.

Availability and Regional Deployment

The Claude Opus 4.7 model is currently available in several key AWS regions, including:

- US East (N. Virginia)

- Asia Pacific (Tokyo)

- Europe (Ireland)

- Europe (Stockholm)

AWS encourages users to consult the full list of regions for future updates on availability. The pricing for using Claude Opus 4.7 on Amazon Bedrock is detailed on the Amazon Bedrock pricing page.
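When configuring a client, the Region names listed above correspond to standard AWS Region codes. The following sketch maps one to the other and creates a bedrock-runtime client with boto3. The helper names are hypothetical, and the article does not state which model ID to use in each Region, so that detail should be checked in the Bedrock documentation.

```python
# Standard AWS Region codes for the launch Regions named in this article.
LAUNCH_REGIONS = {
    "US East (N. Virginia)": "us-east-1",
    "Asia Pacific (Tokyo)": "ap-northeast-1",
    "Europe (Ireland)": "eu-west-1",
    "Europe (Stockholm)": "eu-north-1",
}


def region_code(name):
    """Translate a Region display name into its Region code."""
    try:
        return LAUNCH_REGIONS[name]
    except KeyError:
        raise ValueError(f"{name!r} is not one of the listed launch Regions") from None


def runtime_client(name):
    """Create a bedrock-runtime client for a listed Region (requires boto3)."""
    import boto3  # imported lazily so the lookup above works without boto3 installed

    return boto3.client("bedrock-runtime", region_name=region_code(name))
```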

Broader Implications for the AI Landscape

The introduction of Claude Opus 4.7 on Amazon Bedrock has several significant implications for the broader AI landscape. Firstly, it reinforces AWS’s strategy of providing a diverse portfolio of leading AI models through a single, managed service. This allows businesses to choose the best model for their specific needs without the complexity of managing disparate AI infrastructures.

Secondly, the continuous advancement of models like Claude Opus 4.7 underscores the rapid pace of innovation in the generative AI space. As models become more capable, the range of practical business applications widens. This could lead to increased adoption of AI across industries, driving efficiency, enabling new business models, and fostering a more AI-integrated economy.

The emphasis on enterprise-grade infrastructure, security, and privacy on Amazon Bedrock is also crucial. As AI moves from experimental phases to core business operations, these factors become paramount for widespread adoption. The "zero operator access" policy, in particular, addresses a key concern for organizations handling sensitive data.

Finally, the availability of such advanced models through cloud platforms democratizes access to cutting-edge AI. Smaller businesses and startups can now leverage capabilities that were previously only accessible to organizations with significant AI research and development budgets. This could level the playing field and spur innovation across the entire business ecosystem.

A Commitment to Continuous Improvement

AWS and Anthropic are committed to ongoing development and refinement of their AI offerings. Users are encouraged to experiment with Claude Opus 4.7 in the Amazon Bedrock console and provide feedback. This feedback loop is vital for identifying areas for improvement, further enhancing model performance, and ensuring that the platform meets the evolving needs of its user base. Feedback can be submitted through AWS re:Post for Amazon Bedrock or via usual AWS Support channels.

The update also includes technical refinements, such as the correction of code samples and CLI commands to align with the new model version, ensuring that developers have access to accurate and functional resources. This attention to detail in developer tooling is critical for fostering a robust AI development community.

In conclusion, the integration of Claude Opus 4.7 into Amazon Bedrock marks a significant step forward in making highly capable AI models accessible and usable for enterprises. With enhanced performance, robust infrastructure, and a strong commitment to privacy, this development is poised to accelerate AI adoption and drive innovation across a multitude of business applications.