Payouts King Ransomware Evolves Tactics, Leveraging QEMU Virtual Machines to Evade Detection and Deepen Network Compromise

The Payouts King ransomware operation has recently been observed using the open-source QEMU emulator to run hidden virtual machines (VMs) on compromised systems. Because endpoint security tools on the host cannot see what happens inside the guest, the attackers can establish covert remote access tunnels and execute malicious payloads out of view of host-based monitoring. The tactic reflects a growing trend among cybercriminal groups of abusing legitimate virtualization technologies, and it poses a significant challenge to network defenders.

The technique relies on QEMU, an open-source CPU emulator and system virtualization tool that lets users run other operating systems on a host computer as isolated virtual environments. Although it is a legitimate tool for developers and researchers, that same isolation has made it attractive to advanced persistent threats (APTs) and ransomware operations. Security solutions deployed on the host operating system often lack the visibility to inspect the internal workings of these emulated VMs, creating a blind spot that attackers are increasingly exploiting.

This is not the first instance of QEMU being weaponized by cyber threat actors. Over the past several years, security researchers have documented its misuse by various prominent groups. The 3AM ransomware group, for example, has been implicated in campaigns where QEMU was used to conceal malicious activity. Similarly, the LoudMiner cryptomining operation and the ‘CRON#TRAP’ phishing campaigns have also leveraged QEMU’s virtualization capabilities to maintain persistence and execute their operations discreetly. The recurrence of QEMU in diverse attack vectors highlights its utility as a versatile tool in the cybercriminal’s arsenal, adaptable to different objectives from data encryption to resource hijacking.

Recent investigations by the cybersecurity firm Sophos have brought two specific campaigns into sharp focus, both of which employed QEMU as a critical component of their attack infrastructure. These campaigns are directly linked to the Payouts King ransomware operation and demonstrate a clear strategic objective: to gain deeper access and control within target networks by evading detection.

The STAC4713 Campaign: Payouts King’s QEMU Offensive

One of the campaigns tracked by Sophos, designated STAC4713, was first identified in November 2025 and has been linked to the Payouts King ransomware. Researchers have connected the threat actors behind STAC4713 to the GOLD ENCOUNTER threat group, which is known for targeting hypervisors and for encrypting VMware and ESXi environments. This association suggests that GOLD ENCOUNTER is expanding its operational focus beyond direct hypervisor attacks to include evasion techniques that support broader ransomware deployment.

In this campaign, the attackers take a methodical approach to establishing a hidden operational presence. They create a scheduled task with the innocuous-sounding name "TPMProfiler" that launches a hidden QEMU VM with SYSTEM privileges. The VM therefore runs with the highest level of access on the compromised host while drawing little attention. The VM’s virtual disk files are deliberately disguised to look like legitimate database or DLL files, which further helps them blend in.
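A disk image renamed to look like a DLL or database still begins with its original file signature, which gives defenders something to check. The following is a minimal sketch, assuming the disguised disks are in QEMU's qcow/qcow2 format (the article only confirms a qcow2 image in the STAC3725 campaign). The function names and the list of "honest" extensions are illustrative, and raw disk images, which carry no signature, would not be caught.

```python
from pathlib import Path
from typing import List

# The first four bytes of a qcow/qcow2 image are 'Q', 'F', 'I', 0xFB.
QCOW_MAGIC = b"QFI\xfb"

# Extensions that openly announce a QEMU disk image (illustrative, not exhaustive).
HONEST_SUFFIXES = {".qcow", ".qcow2"}


def is_qcow_header(header: bytes) -> bool:
    """Return True if the bytes start with the qcow/qcow2 magic number."""
    return header.startswith(QCOW_MAGIC)


def find_disguised_images(root: Path) -> List[Path]:
    """List files under root that contain a qcow image but are named as something else."""
    hits = []
    for path in root.rglob("*"):
        try:
            if not path.is_file() or path.suffix.lower() in HONEST_SUFFIXES:
                continue
            with path.open("rb") as handle:
                header = handle.read(len(QCOW_MAGIC))
        except OSError:
            continue  # unreadable or locked file: skip it rather than abort the scan
        if is_qcow_header(header):
            hits.append(path)
    return hits
```

Calling `find_disguised_images(Path(r"C:\ProgramData"))` would return any file in that tree whose content starts with the qcow signature but whose extension says otherwise; the directory is only an example starting point.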

A crucial element of this tactic is the setup of port forwarding, which enables covert access to the infected host through a reverse SSH tunnel. Because the tunnel is initiated from the compromised side and is encrypted, it gives the attacker’s command-and-control (C2) infrastructure a channel into the system for issuing further commands and exfiltrating data, without opening an inbound port that perimeter defenses would notice.
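One way to surface such tunnels is to look for the SSH protocol itself rather than for port 22. Every SSH endpoint opens the connection with an identification string that starts with `SSH-`, so a sensor that records the first payload bytes of each outbound connection can flag SSH on unexpected ports. The sketch below assumes such records are available; the `Flow` structure and its field names are hypothetical, and a tunnel wrapped inside another protocol (HTTP or TLS, for example) would not match.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List


@dataclass(frozen=True)
class Flow:
    """Hypothetical record of one outbound connection seen by a network sensor."""
    src: str
    dst: str
    dst_port: int
    first_bytes: bytes  # first payload bytes observed on the connection


def is_ssh_banner(payload: bytes) -> bool:
    """SSH identification strings begin with 'SSH-' (for example 'SSH-2.0-...')."""
    return payload.startswith(b"SSH-")


def ssh_on_unexpected_ports(
    flows: Iterable[Flow],
    allowed_ports: FrozenSet[int] = frozenset({22}),
) -> List[Flow]:
    """Return flows that speak SSH to a destination port outside the allowed set."""
    return [
        flow
        for flow in flows
        if is_ssh_banner(flow.first_bytes) and flow.dst_port not in allowed_ports
    ]
```

In environments where outbound SSH is rare, `allowed_ports` could be paired with an allow-list of known destinations so that even port 22 traffic to unfamiliar hosts is reviewed.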

The virtual machine itself runs a minimal yet capable operating system: Alpine Linux version 3.22.0. The distribution’s small footprint makes it well suited to a VM that has to stay inconspicuous. Within this Alpine Linux environment, the attackers install a set of tools: AdaptixC2, a C2 framework; Chisel, a TCP/UDP tunneling tool that runs over HTTP; BusyBox, a single binary that bundles many common Unix utilities; and Rclone, a tool for managing files on cloud storage and remote servers that is often abused for data exfiltration.

Sophos’s analysis indicates that initial access in the STAC4713 campaign was often achieved through exposed SonicWall VPNs. More recent attacks attributed to this group have exploited a SolarWinds Web Help Desk vulnerability, tracked as CVE-2025-26399, showing that the group adapts its entry points as new vulnerabilities become available.

Following initial compromise, the attackers carry out post-compromise activities that support their data theft and ransomware deployment strategy. They use Volume Shadow Copy Service (VSS) utilities, specifically vssuirun.exe, to create shadow copies of critical system data. This lets them bypass file locking mechanisms and copy essential files such as NTDS.dit (the Active Directory database) and the SAM (Security Account Manager) and SYSTEM registry hives. These sensitive files, which contain valuable credential information, are then transferred to temporary directories for subsequent exfiltration.
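Copies made from a shadow copy tend to leave a recognizable trace in process command lines, because the snapshot is exposed under a device path containing `HarddiskVolumeShadowCopy` followed by a number. The sketch below assumes command lines are already being collected (for instance from process-creation logging) and flags those that reference both a shadow copy path and one of the credential stores named above. The function name and patterns are illustrative, and a copy performed through an API rather than a command line would not appear.

```python
import re

# Shadow copies are exposed under device paths such as
# \\?\GLOBALROOT\Device\HarddiskVolumeShadowCopy3\...
SHADOW_PATH = re.compile(r"HarddiskVolumeShadowCopy\d+", re.IGNORECASE)

# Credential stores mentioned in the article: NTDS.dit and the SAM and SYSTEM hives.
CREDENTIAL_FILES = re.compile(
    r"ntds\.dit|\\config\\sam\b|\\config\\system\b", re.IGNORECASE
)


def flags_credential_copy(command_line: str) -> bool:
    """True if a command line touches a credential store inside a shadow copy."""
    return bool(
        SHADOW_PATH.search(command_line) and CREDENTIAL_FILES.search(command_line)
    )
```

Requiring both patterns keeps the check narrow: routine backup software references shadow copy paths constantly, but rarely names these specific files on the command line.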

More recently observed incidents attributed to the GOLD ENCOUNTER threat actor show a diversification of initial access vectors. In an attack observed in February, the group exploited an exposed Cisco SSL VPN. In a subsequent incident in March, they used social engineering, impersonating IT staff on Microsoft Teams to trick employees into downloading and installing Quick Assist, a legitimate remote support tool that was subsequently abused.

Sophos further elaborated on these more recent attacks, stating, "In both instances, the threat actors used the legitimate ADNotificationManager.exe binary to sideload a Havoc C2 payload (vcruntime140_1.dll) and then leveraged Rclone to exfiltrate data to a remote SFTP location." This illustrates the attackers’ reliance on DLL sideloading, in which a legitimate executable is made to load a malicious library, to mask their activity.

The STAC3725 Campaign: Exploiting CitrixBleed 2 for QEMU Deployment

The second campaign documented by Sophos, tracked as STAC3725, has been active since February and represents another facet of Payouts King’s evolving operational tactics. This campaign specifically targets NetScaler ADC and Gateway instances by exploiting the critical CitrixBleed 2 vulnerability, identified as CVE-2025-5777. This vulnerability, which gained notoriety for its potential to lead to severe data breaches, provides attackers with a high-value entry point into organizations.

After compromising NetScaler devices, the attackers deploy a ZIP archive containing a malicious executable. When run, it installs a service named "AppMgmt," which creates a new local administrator account named "CtxAppVCOMService" and installs a ScreenConnect client. The attackers then use ScreenConnect, a legitimate remote access tool, to establish persistence.

The installed ScreenConnect client connects to a remote relay server, establishing a session with SYSTEM privileges on the compromised network. From this privileged position, the attackers drop and extract a QEMU package configured to run a hidden Alpine Linux VM from a custom disk image named custom.qcow2. Unlike the STAC4713 campaign, where pre-built toolkits were observed, the attackers in STAC3725 install and compile their attack tools manually inside the QEMU VM. The tools include Impacket, KrbRelayx, Coercer, BloodHound.py, NetExec, Kerbrute, and Metasploit. This hands-on approach suggests a desire for greater control and the ability to tailor the toolset to the specific target environment.

Observed malicious activities within the VM in this campaign include extensive credential harvesting, Kerberos username enumeration, in-depth Active Directory reconnaissance, and the staging of data for exfiltration, often via FTP servers. This methodical reconnaissance and data staging are precursors to the actual ransomware deployment or significant data theft.
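Kerberos username enumeration of the kind performed by tools such as Kerbrute is visible on domain controllers even when the tool runs inside a hidden VM. Each guess for a nonexistent account produces a failed ticket request, which Windows logs as event 4768 with result code 0x6 (principal unknown). The sketch below counts distinct unknown usernames per source address and is meant only as an illustration: the dictionary keys and the threshold of 20 are assumptions, not values from the Sophos report, and real logs would first need to be parsed into this shape.

```python
from collections import defaultdict
from typing import Dict, Iterable, Mapping, Set

KERBEROS_TGT_REQUEST = 4768   # Windows security event: Kerberos TGT requested
PRINCIPAL_UNKNOWN = "0x6"     # result code for a username that does not exist


def enumeration_sources(
    events: Iterable[Mapping[str, object]],
    min_unknown_users: int = 20,  # illustrative threshold; tune per environment
) -> Dict[str, int]:
    """Map each source IP to its count of distinct unknown usernames, if above threshold."""
    unknown: Dict[str, Set[str]] = defaultdict(set)
    for event in events:
        if event.get("event_id") != KERBEROS_TGT_REQUEST:
            continue
        if str(event.get("status", "")).lower() != PRINCIPAL_UNKNOWN:
            continue
        source = str(event.get("source_ip", ""))
        unknown[source].add(str(event.get("user", "")).lower())
    return {
        source: len(users)
        for source, users in unknown.items()
        if len(users) >= min_unknown_users
    }
```

Counting distinct usernames rather than raw failures helps separate enumeration from a single misconfigured service that retries one bad account name repeatedly.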

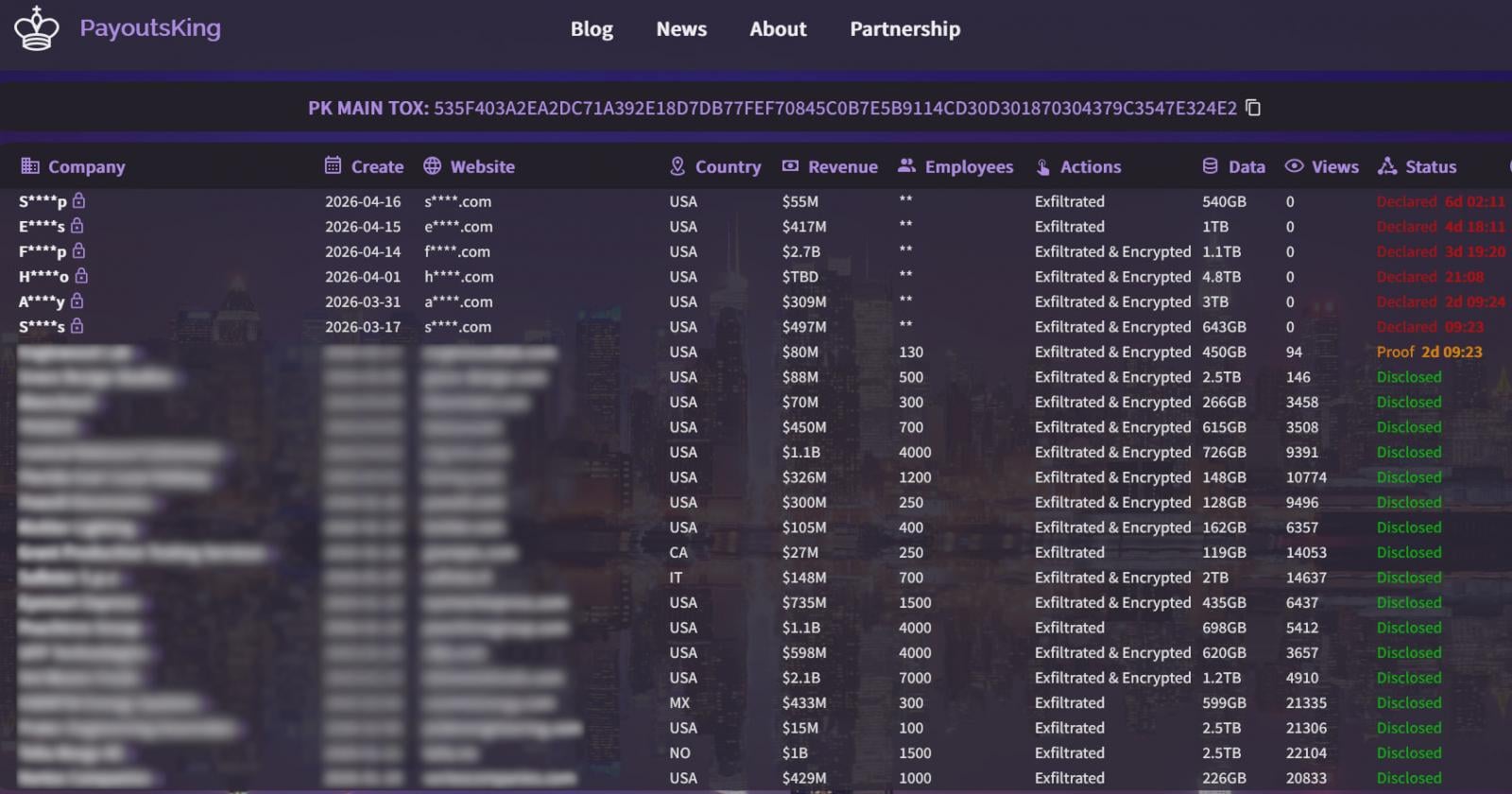

Payouts King’s Identity and Motivations

The Payouts King ransomware strain itself is believed to be closely associated with former affiliates of the BlackBasta ransomware group. This linkage is based on observed similarities in their initial access methodologies. These include the use of spam bombing to overwhelm targets, sophisticated Microsoft Teams phishing campaigns, and the abuse of the Quick Assist remote support tool – tactics previously favored by BlackBasta.

The operational characteristics of Payouts King are consistent with a well-resourced and determined threat actor. The strain employs extensive obfuscation techniques and anti-analysis mechanisms designed to thwart reverse engineering and detection. Persistence is established through the creation of scheduled tasks, a common but effective method. Furthermore, Payouts King actively attempts to terminate security tools using low-level system calls, aiming to disable defensive measures before proceeding with its encryption operations.

The encryption scheme employed by Payouts King combines AES-256 in counter (CTR) mode with RSA-4096 for key exchange. It applies intermittent encryption to larger files, a common optimization in which only portions of a file are encrypted, reducing the time needed to render the file unusable. The ransom notes it drops direct victims to leak sites on the dark web, indicating a double extortion strategy: demanding payment for data decryption and threatening public release of exfiltrated sensitive information.

Implications and Recommendations

The widespread adoption of QEMU for malicious purposes by groups like Payouts King carries significant implications for cybersecurity. It signifies a tactical shift where attackers are moving beyond solely exploiting software vulnerabilities to actively subverting legitimate system tools for their own gain. This makes detection more challenging, as the presence of QEMU itself, or the Alpine Linux VMs it hosts, might not immediately flag as suspicious to traditional security tools.

Organizations are therefore urged to adopt a more proactive and layered security approach. Sophos’s recommendations for network defenders are crucial:

- Monitor for Unauthorized QEMU Installations: Regularly audit systems for the presence of QEMU executables or related files that are not part of authorized software deployments.

- Scrutinize Suspicious Scheduled Tasks: Pay close attention to scheduled tasks that are configured to run with SYSTEM privileges, especially those with unusual names or execution patterns.

- Detect Unusual SSH Port Forwarding: Implement network monitoring to identify unexpected or unauthorized SSH port forwarding configurations, which can be indicators of covert tunneling.

- Identify Outbound SSH Tunnels on Non-Standard Ports: While SSH typically uses port 22, attackers may use other ports for their tunnels to evade detection. Monitoring for outbound SSH traffic on atypical ports is essential.
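The first two recommendations can be combined into a simple audit of scheduled tasks. The sketch below parses the CSV produced by `schtasks /query /fo CSV /v` and reports tasks that run as SYSTEM and launch something that looks like QEMU. It assumes the English column names "TaskName", "Task To Run", and "Run As User" (they differ on localized systems), and the substring hints are illustrative: an attacker who renames the QEMU binary would evade them, so file hashes or signatures would be a stronger check.

```python
import csv
import io
from typing import List

SYSTEM_ACCOUNTS = {"system", "nt authority\\system"}

# Substrings that suggest a QEMU binary in a task action (illustrative only).
QEMU_HINTS = ("qemu-system", "qemu.exe", "qemuw.exe")


def suspicious_tasks(schtasks_csv: str) -> List[str]:
    """Return names of tasks that run as SYSTEM and appear to launch QEMU."""
    hits = []
    for row in csv.DictReader(io.StringIO(schtasks_csv)):
        run_as = (row.get("Run As User") or "").strip().lower()
        action = (row.get("Task To Run") or "").lower()
        if run_as in SYSTEM_ACCOUNTS and any(hint in action for hint in QEMU_HINTS):
            hits.append(row.get("TaskName") or "")
    return hits
```

The function takes the CSV as text so that output collected from many hosts can be audited centrally. Any task it reports is a prompt for review rather than proof of compromise, since QEMU also has legitimate uses.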

The evolving threat landscape demands continuous adaptation from security professionals. The Payouts King ransomware’s innovative use of QEMU serves as a stark reminder that cybercriminals are constantly seeking new methods to circumvent defenses. By understanding these advanced tactics and implementing robust detection and prevention strategies, organizations can better protect themselves against sophisticated and evolving ransomware threats. The race between attackers and defenders continues, with each new evasion technique necessitating a corresponding advancement in security posture.