Pretext: Cheng Lou’s 15KB JavaScript Library Revolutionizes Web Text Layout by Eliminating DOM Reflows

Pretext, a new open-source TypeScript library from Cheng Lou, an engineer at Midjourney and a former React core team member, aims to change how web applications measure and lay out text. The 15-kilobyte tool avoids the DOM (Document Object Model) reflow process, which is often a performance bottleneck, by computing text layout entirely outside the DOM. That makes UI patterns such as infinite scrolling lists, dynamic masonry layouts, and precise scroll position anchoring practical at 60 to 120 frames per second (fps). Its origin is as notable as its design: Pretext came out of an AI-driven loop that effectively reverse-engineered the layout calculations browsers perform for the DOM.

The Quest for Seamless User Experiences on the Modern Web

For consumer-facing applications, the end-user experience is a critical differentiator and a primary driver of adoption. For years, web developers have tried to bring UI/UX patterns with the richness and interactivity of native applications to the web. These include the masonry layouts popularized by platforms like Pinterest and Tumblr, and the virtual list scrolling that lets platforms such as X (formerly Twitter) handle vast quantities of content without overwhelming the user’s device. Scroll position anchoring, which keeps the visible content in place while content elsewhere on the page changes, is another.

Web standards bodies have begun to bring some of these capabilities into CSS, including masonry layouts in CSS Grid and the overflow-anchor property for scroll anchoring, but browser support remains inconsistent. Many developers therefore rely on custom JavaScript implementations, and these have historically been prone to a performance problem known as "layout thrashing."

Understanding Layout Thrashing and Pretext’s Revolutionary Approach

Layout thrashing occurs when an application repeatedly interleaves reads of geometric information from the DOM (such as element dimensions or positions) with modifications to the DOM based on those reads. When an application needs the precise height of a block of text, a common requirement for virtualized lists with thousands of items, complex masonry grids, or real-time AI chat interfaces, it traditionally reads it through APIs such as getBoundingClientRect() or offsetHeight. If the DOM has changed since the last layout, the browser must perform a synchronous "layout reflow" before it can answer. This expensive step recalculates the geometry and position of every affected element, which can extend to the entire document. On content-heavy pages or under strict rendering budgets, frequent reflows cause dropped frames, sluggish animations, and a degraded user experience.
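
To make the cost pattern concrete, here is a toy model of it in TypeScript. ToyLayoutEngine and its methods are hypothetical stand-ins rather than browser APIs: a write marks layout as dirty, and a read while dirty counts as one forced reflow. Real engines are far more nuanced, so treat this only as a sketch of why interleaving reads and writes is expensive.

```typescript
// Toy model of a rendering engine (hypothetical; not a browser API).
// A style write marks layout as dirty; a geometry read while dirty
// forces a synchronous reflow before the value can be returned.
class ToyLayoutEngine {
  reflowCount = 0;
  private dirty = false;
  private heights: number[] = [];

  constructor(itemCount: number) {
    for (let i = 0; i < itemCount; i++) this.heights.push(20);
  }

  // Analogous to setting element.style.height: cheap, but invalidates layout.
  writeHeight(index: number, px: number): void {
    this.heights[index] = px;
    this.dirty = true;
  }

  // Analogous to reading offsetHeight: forces a reflow if layout is dirty.
  readHeight(index: number): number {
    if (this.dirty) {
      this.reflowCount += 1;
      this.dirty = false;
    }
    return this.heights[index] ?? 0;
  }
}

// Interleaved: every read follows a write, so every read forces a reflow.
function runInterleaved(engine: ToyLayoutEngine, n: number): void {
  for (let i = 0; i < n; i++) {
    engine.writeHeight(i, 30);
    engine.readHeight(i);
  }
}

// Batched: all writes first, then all reads, so only the first read reflows.
function runBatched(engine: ToyLayoutEngine, n: number): void {
  for (let i = 0; i < n; i++) engine.writeHeight(i, 30);
  for (let i = 0; i < n; i++) engine.readHeight(i);
}

const interleavedEngine = new ToyLayoutEngine(500);
runInterleaved(interleavedEngine, 500);
const batchedEngine = new ToyLayoutEngine(500);
runBatched(batchedEngine, 500);
console.log(interleavedEngine.reflowCount, batchedEngine.reflowCount); // 500 1
```

Batching reads and writes reduces the number of reflows in this model, but it does not remove them. Avoiding the DOM read altogether is the step Pretext takes.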

Pretext departs from this approach by avoiding DOM reflows altogether. The library works in two phases. The first, prepare(), uses the Canvas API’s measureText() function to determine the pixel width of each text segment, taking into account font family, letter spacing, word spacing, and Unicode rules. This measurement is performed independently of the DOM. The results are then cached, so the cost of the prepare() phase is paid only once.
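
The measure-once-and-cache idea can be sketched in a few lines of TypeScript. This is an illustration under stated assumptions, not Pretext’s implementation: prepareSketch, PreparedText, and TextMeasurer are hypothetical names, segmentation is a plain whitespace split rather than real Unicode line-breaking rules, and summing per-segment widths ignores any kerning across segment boundaries. TextMeasurer is a structural subset of the browser’s 2D canvas context (its font property and measureText() method), so a real context could be passed in a browser; a fixed-width stand-in is used here so the sketch runs anywhere.

```typescript
// Structural subset of CanvasRenderingContext2D that this sketch relies on.
// In a browser, a real 2D canvas context satisfies this shape.
interface TextMeasurer {
  font: string;
  measureText(text: string): { width: number };
}

// Hypothetical shape for the prepared result; not Pretext's data structure.
interface PreparedText {
  segments: string[]; // alternating words and whitespace runs, in order
  widths: number[];   // pixel width of each segment
}

// Cache keyed by font and segment, so repeated segments are measured once.
const widthCache = new Map<string, number>();

function prepareSketch(text: string, font: string, ctx: TextMeasurer): PreparedText {
  ctx.font = font;
  // Assumption: whitespace-based segmentation, which is not enough for
  // scripts such as Chinese or Thai.
  const segments: string[] = text.match(/\s+|\S+/g) ?? [];
  const widths = segments.map((segment) => {
    const key = `${font}\u0000${segment}`;
    let width = widthCache.get(key);
    if (width === undefined) {
      width = ctx.measureText(segment).width;
      widthCache.set(key, width);
    }
    return width;
  });
  return { segments, widths };
}

// Stand-in measurer so the sketch runs outside a browser: 8px per character.
let measureCalls = 0;
const fakeContext: TextMeasurer = {
  font: "",
  measureText(text: string) {
    measureCalls += 1;
    return { width: text.length * 8 };
  },
};

const preparedOnce = prepareSketch("the cat and the hat", "16px sans-serif", fakeContext);
console.log(preparedOnce.widths); // [24, 8, 24, 8, 24, 8, 24, 8, 24]
console.log(measureCalls);        // 5 (only distinct segments are measured)
```

Nine segments need only five measurements here because "the" and the single space repeat. Preparing the same text again in the same font would trigger no further measurements.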

The second phase, layout(), is pure arithmetic over the cached widths. Given the available container width, it derives the total line count and the final computed height of the text. layout() is the library’s "hot path": it can be called as often as needed to reflect changes in the container’s dimensions, without ever interacting with the DOM. Consequently, neither phase incurs the cost of a DOM reflow.
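
A minimal sketch of such an arithmetic-only pass, assuming greedy line filling over pre-measured widths, looks like this in TypeScript. layoutSketch and its parameters are hypothetical and do not reproduce Pretext’s algorithm: the sketch ignores hard line breaks, never breaks inside a word, and lets trailing spaces hang past the line edge.

```typescript
interface LayoutResult {
  lineCount: number;
  height: number;
}

// Greedy line filling over pre-measured segment widths. isSpace[i] marks
// whitespace segments, which may hang past the end of a line.
function layoutSketch(
  widths: readonly number[],
  isSpace: readonly boolean[],
  maxWidth: number,
  lineHeight: number,
): LayoutResult {
  if (widths.length === 0) return { lineCount: 0, height: 0 };
  let lineCount = 1;
  let lineWidth = 0;
  for (let i = 0; i < widths.length; i++) {
    const w = widths[i] ?? 0;
    if (isSpace[i]) {
      // Whitespace at the start of a line takes no room.
      if (lineWidth > 0) lineWidth += w;
      continue;
    }
    if (lineWidth > 0 && lineWidth + w > maxWidth) {
      // The word does not fit: start a new line with it.
      lineCount += 1;
      lineWidth = w;
    } else {
      lineWidth += w;
    }
  }
  return { lineCount, height: lineCount * lineHeight };
}

// Widths for "the cat and the hat" at 8px per character.
const demoWidths = [24, 8, 24, 8, 24, 8, 24, 8, 24];
const demoIsSpace = demoWidths.map((_, i) => i % 2 === 1);

const narrowLayout = layoutSketch(demoWidths, demoIsSpace, 60, 20);
const wideLayout = layoutSketch(demoWidths, demoIsSpace, 200, 20);
console.log(narrowLayout); // { lineCount: 3, height: 60 }
console.log(wideLayout);   // { lineCount: 1, height: 20 }
```

Changing the container width only means calling the function again with a different maxWidth. Nothing is re-measured, which is what makes a pass like this cheap enough to run on every resize or animation frame.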

Performance Metrics and Community Validation

Performance tests shared by the developer community point to substantial gains. In one, a single layout operation over 500 blocks of text completes in approximately 0.09 milliseconds, reported as up to 600 times faster than traditional DOM-based methods. Gains of this size let applications hold 120 frames per second even while users manipulate elements whose text must rewrap in real time, which matters for applications that depend on smooth animation and immediate visual feedback.
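
To put those figures in context, the short TypeScript calculation below compares them with the time available per frame. The 0.09 ms and 600x values are the ones quoted above. The DOM-side cost is only inferred by multiplying them, and since "up to 600 times" is an upper bound, that number is a rough ceiling rather than a measurement.

```typescript
// Time available per frame at a given refresh rate, in milliseconds.
function frameBudgetMs(fps: number): number {
  return 1000 / fps;
}

// Share of one frame consumed by a task of the given duration.
function budgetShare(taskMs: number, fps: number): number {
  return taskMs / frameBudgetMs(fps);
}

const reportedLayoutMs = 0.09; // quoted figure for 500 text blocks
const reportedSpeedup = 600;   // "up to 600 times", an upper bound
const impliedDomMs = reportedLayoutMs * reportedSpeedup; // inferred, not measured

console.log(frameBudgetMs(120).toFixed(2));                         // "8.33" ms per frame
console.log((budgetShare(reportedLayoutMs, 120) * 100).toFixed(1)); // "1.1" percent of a frame
console.log(budgetShare(impliedDomMs, 120).toFixed(1));             // "6.5" frames
```

At 120 fps the quoted layout pass uses about one percent of a frame, while a pass 600 times slower would take several whole frames.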

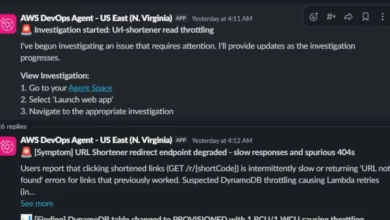

The AI Enabler: A Unique Development Story

The development of Pretext also illustrates what AI-assisted engineering can now do. Cheng Lou said that AI played a pivotal role in the library’s multi-language implementation. He explained that the core engine, at just a few kilobytes, was engineered to be aware of browser quirks and to support a wide range of languages, including difficult cases such as Korean text mixed with right-to-left Arabic and platform-specific emojis.

This multilingual support came from an unusual development process. Lou gave large language models such as Claude and Codex the browser’s "ground truth," its actual layout results, and had them measure and iterate against those results across every significant container width, a process that took several weeks. The method, in which working software serves as an oracle for verifying and refining AI output, shows the potential of autonomous AI loops. It resembles other recent work, such as a C compiler built by sixteen Claude agents that reached 99% compatibility with gcc tests in just two weeks with minimal human oversight.

Industry Reaction and Emerging Use Cases

Pretext has been received enthusiastically by the developer community. The project’s GitHub repository gained 16,000 stars within the first 24 hours of its announcement, and developers have already begun using Pretext in interfaces previously considered too performance-intensive for the web.

Simon Willison, a prominent voice in the technology community, highlighted the library’s capabilities on his blog, detailing its potential to replicate professional-grade print typography on the web. This opens up exciting possibilities for designers who have long faced limitations in achieving the same typographic fidelity online as they can in print.

One designer described the long-standing gap between print and web design, which they attribute largely to text rendering limitations. "There’s always been a gap between what print designers do and what web designers are allowed to do. It mostly comes down to text," they noted. The designer, who has curated a collection of favorite Pretext demonstrations, said that for graphic designers Pretext means working with text on the web with the same creative freedom they have in print.

A Glimpse into Pretext’s Capabilities

Developers eager to explore Pretext can access a wealth of resources, including numerous interactive demos showcasing its diverse use cases. These include a variable-height virtual scroll implementation, dynamic AI chat bubbles that adapt fluidly, and a multilingual content feed designed to handle diverse linguistic requirements. For those with a keen interest in the mathematical underpinnings of typography, Pretext offers an interactive comparison of justification algorithms. This feature pits Pretext’s capabilities against the web’s standard justification methods and the renowned Knuth-Plass algorithm, the cornerstone of the TeX/LaTeX typesetting system.
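
The variable-height virtual scroll case shows why cheap height computation matters: once every item’s height is known without touching the DOM, finding the visible rows is a prefix sum plus a binary search. The TypeScript sketch below illustrates that general technique with hypothetical helper names (buildOffsets, indexAt, visibleRange). It is not taken from the Pretext demos and assumes a non-empty list.

```typescript
// Prefix sums of item heights: offsets[i] is the top of item i and
// offsets[n] is the total content height.
function buildOffsets(heights: readonly number[]): number[] {
  const offsets: number[] = [0];
  for (const h of heights) {
    offsets.push((offsets[offsets.length - 1] ?? 0) + h);
  }
  return offsets;
}

// Binary search for the last item whose top edge is at or above y.
function indexAt(offsets: readonly number[], y: number): number {
  let lo = 0;
  let hi = Math.max(0, offsets.length - 2); // index of the last item
  while (lo < hi) {
    const mid = (lo + hi + 1) >> 1;
    if ((offsets[mid] ?? 0) <= y) lo = mid;
    else hi = mid - 1;
  }
  return lo;
}

// Inclusive range of items intersecting the viewport.
function visibleRange(
  offsets: readonly number[],
  scrollTop: number,
  viewportHeight: number,
): { first: number; last: number } {
  return {
    first: indexAt(offsets, scrollTop),
    last: indexAt(offsets, scrollTop + viewportHeight - 1),
  };
}

// Item heights as a text layout pass might produce them (made-up values).
const rowHeights = [40, 60, 20, 80, 40];
const rowOffsets = buildOffsets(rowHeights);
console.log(rowOffsets);                        // [0, 40, 100, 120, 200, 240]
console.log(visibleRange(rowOffsets, 50, 100)); // { first: 1, last: 3 }
```

Only the rows in the returned range need to be rendered, and the last offset gives the total scroll height for the container.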

Pretext’s implications extend beyond performance. By making advanced text layout widely accessible, it lets developers build more engaging and visually rich web experiences. Its compact size, efficient architecture, and AI-assisted development point toward web applications in which complex UI patterns are a standard feature rather than a performance risk. Fast, precise text layout could also narrow the gap between digital and print design, giving designers more control over the web’s most fundamental element: text. As Pretext matures and gains broader adoption, it may have a significant influence on web design and user interface development.