Event-Driven Architectures in Regulated Industries: Foundations, Benefits, and Challenges

Speaking at a recent industry event, Chris Tacey-Green examined how to implement event-driven architectures (EDAs) in highly regulated sectors such as banking and aviation. His presentation, "Event-Driven, Cloud Native, Banking," aimed to demystify EDAs for those new to the concept while offering insights for experienced practitioners, particularly on applying EDAs in environments that demand stringent compliance and robust reliability.

Understanding the Core Components of Event-Driven Architectures

Tacey-Green began by dissecting the fundamental elements of an event-driven architecture. At its heart, an "event" is defined as a change in the state of a system. This change can be triggered by various sources, including user actions, asynchronous background processes, or external systems. Events can either carry data, referred to as "fat events," or serve as simple notifications, termed "thin events." Tacey-Green advocated for "lean" events, suggesting that events should carry all pertinent data without extraneous information, drawing a parallel to a well-known paper advocating for "putting your events on a diet."

A crucial distinction was drawn between "events" and "commands." Tacey-Green emphasized that while commands represent a direct instruction to perform an action, often with an expectation of a result, events are declarations that something has already happened. "An event is me shouting into the world saying that something happened," he explained. "I’m not expecting anything to happen off the back of that. I’m not necessarily expecting anyone to be listening to me." This clear differentiation, he noted, is vital for avoiding common pitfalls that can undermine the benefits of an EDA.
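The difference between a command and an event can be sketched in a few lines of Python. This is an illustration only: the class names, fields, and the fixed payment id are assumptions made for the example, not types shown in the talk.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class MakePayment:
    """Command: an instruction sent to one handler; the sender expects a result."""
    account_id: str
    amount_pence: int


@dataclass(frozen=True)
class PaymentInitiated:
    """Event: a statement that something already happened, named in the past tense."""
    payment_id: str
    account_id: str
    amount_pence: int
    occurred_at: datetime


def handle_make_payment(command: MakePayment) -> PaymentInitiated:
    # A handler may reject a command (validation, limits). An event is a
    # record of a fact, so there is nothing left to reject once it exists.
    if command.amount_pence <= 0:
        raise ValueError("amount must be positive")
    return PaymentInitiated(
        payment_id="pay-001",  # hypothetical fixed id to keep the sketch short
        account_id=command.account_id,
        amount_pence=command.amount_pence,
        occurred_at=datetime.now(timezone.utc),
    )


event = handle_make_payment(MakePayment(account_id="acc-42", amount_pence=1500))
```

The command is phrased as a request and can fail; the event is immutable and says nothing about who, if anyone, will react to it.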

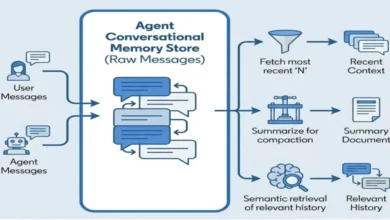

An event-driven architecture, therefore, is an ecosystem where multiple systems react to these emitted events. These systems typically comprise "producers," which publish events, and "consumers," which subscribe to and process them.

Tacey-Green also clarified the relationship between EDAs and "event sourcing." While often discussed in the same context, they are not interchangeable. Event sourcing is a specific pattern for representing the state of an application as an immutable sequence of events. For instance, in a shopping cart scenario, instead of storing the state as "4 hats," event sourcing would record four individual events: "add hat," "add hat," "add hat," "add hat." While event sourcing can complement EDAs, it is not a prerequisite. He cautioned that event sourcing can be a complex pattern to implement, and teams should understand that an EDA can be achieved without adopting it.
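The shopping cart example can be made concrete with a minimal sketch. The event classes and the `replay` function are hypothetical names chosen for this illustration; the point is only that the stored record is the list of events, and the current state is derived by replaying them.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ItemAdded:
    item: str


@dataclass(frozen=True)
class ItemRemoved:
    item: str


def replay(events) -> Counter:
    """Rebuild the cart's current state by applying every event in order."""
    cart = Counter()
    for event in events:
        if isinstance(event, ItemAdded):
            cart[event.item] += 1
        elif isinstance(event, ItemRemoved):
            cart[event.item] -= 1
    return +cart  # unary plus drops items whose count fell to zero


# The stored record is the sequence of events, not the total "4 hats".
event_log = (ItemAdded("hat"), ItemAdded("hat"), ItemAdded("hat"), ItemAdded("hat"))
cart = replay(event_log)
print(cart["hat"])  # 4
```

Even in this toy form, every read of the cart requires a replay (or a maintained projection), which hints at why the pattern adds complexity beyond simply publishing events.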

The "cloud-native" aspect of the title refers to the design, construction, and operation of workloads in the cloud using modern engineering practices, emphasizing scalability and DevOps principles like CI/CD. Finally, "banking," as Tacey-Green described it, involves large, traditionally slow-moving, and highly regulated organizations. He contrasted the cautious nature of many traditional banks with the modern, agile approach adopted by his employer, Investec, which is actively embracing these advanced architectural patterns.

The Compelling Rationale for Eventing in Regulated Environments

The presentation then transitioned to the "why" behind adopting EDAs, especially in high-stakes environments like banking. Tacey-Green highlighted several key benefits derived from real-world use cases:

Decoupling for Enhanced Agility and Reliability

A primary advantage is decoupling, which significantly enhances system independence and resilience. Tacey-Green illustrated this with the example of transaction monitoring at a bank. Traditionally, integrating a payment system with a transaction monitoring service could lead to tight coupling. This could involve payments directly calling a transaction monitoring API or vice-versa. Such coupling creates dependencies that can impact reliability and slow down development, especially when systems have different reliability expectations or operational requirements.

By adopting an EDA, payments systems can publish events like "payment initiated" or "payment processed" without any knowledge of transaction monitoring. Transaction monitoring, as an independent consumer, can subscribe to these events, extract the necessary data, and perform its analysis. This decoupling means that if the transaction monitoring system experiences downtime, it does not affect the core payment processing flow. Conversely, the payment system can evolve without directly impacting the transaction monitoring service. This separation is critical in regulated industries where core functions like payments must remain highly available, while auxiliary services may have different uptime SLAs.

An Immutable Activity Log for Enhanced Auditability

EDAs naturally create an immutable log of all system activities, which is invaluable for auditability and traceability in regulated sectors. Before implementing an EDA for payments, Tacey-Green explained, understanding the precise journey of a payment through various checkpoints (fraud checks, sanctions screening, gateway selection) was challenging. With an EDA, each step generates a distinct event, forming a comprehensive and trusted record. This is not a separate audit log but the very record of how the system operated. Business-oriented event names, like "payment initiated" or "fraud check completed," provide clear visibility into the payment lifecycle, aiding in investigations and compliance reporting.

Fan-Out Capabilities for Efficient Workflow Management

The "fan-out" pattern, where a single event triggers multiple independent downstream actions, is another significant benefit. Consider a payment processing event. This single event can simultaneously trigger updates to customer payment limits and initiate customer communications (e.g., push notifications, SMS, email). In a traditional, tightly coupled system, coordinating these actions and handling failures in one could be complex. With an EDA, the "payment processed" event can be published once, and independent services for payment limit updates and customer communications can consume it. This allows each service to manage its own fault tolerance and retries without impacting the others, promoting greater system robustness.
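A minimal in-memory sketch of fan-out follows. The `InMemoryBus` class and handler names are assumptions for illustration; a real eventing technology delivers asynchronously and each consumer would retry independently, which this toy version only hints at by recording the failure.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Handler = Callable[[dict], None]


class InMemoryBus:
    """Toy stand-in for an event stream; real brokers deliver asynchronously."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Handler]] = defaultdict(list)
        self.failures: List[tuple] = []

    def subscribe(self, event_type: str, handler: Handler) -> None:
        self._subscribers[event_type].append(handler)

    def publish(self, event_type: str, payload: dict) -> None:
        # The producer publishes once and knows nothing about who is listening.
        for handler in self._subscribers[event_type]:
            try:
                handler(payload)
            except Exception as exc:
                # One consumer failing must not stop the others; in a real
                # system that consumer would retry on its own schedule.
                self.failures.append((handler.__name__, str(exc)))


limits_updated: List[str] = []


def update_payment_limits(payload: dict) -> None:
    limits_updated.append(payload["payment_id"])


def send_customer_notification(payload: dict) -> None:
    raise RuntimeError("SMS gateway unavailable")  # simulated outage


bus = InMemoryBus()
bus.subscribe("payment_processed", send_customer_notification)
bus.subscribe("payment_processed", update_payment_limits)
bus.publish("payment_processed", {"payment_id": "pay-001", "amount_pence": 1500})
```

Although the notification handler fails, the payment limit update still runs, and the producer's `publish` call is unaffected by either outcome.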

Robust Fault Tolerance for Critical Operations

In regulated industries, fault tolerance is not a luxury but a necessity. EDAs offer multiple layers of fault tolerance. Tacey-Green outlined three tiers:

- Transient Retries: Similar to in-process retries (e.g., using libraries like Polly in .NET), these handle temporary network glitches or service unavailability by retrying operations with a configurable delay and jitter. Because an asynchronous consumer has no caller waiting on a response, these retries can potentially run for longer than they could in a synchronous request.

- Eventing Technology Retries/Back-off: If transient retries fail, the underlying eventing infrastructure (e.g., Kafka, Kinesis, Azure Event Hubs) can be configured for more extended retries with a back-off strategy.

- Dead-Lettering and Human Intervention: For persistent issues or "poisonous messages" (events that break contracts or contain unprocessable data), a dead-letter queue is essential. This mechanism diverts problematic events, preventing them from endlessly retrying and corrupting the system. It also allows for human intervention to investigate and replay these events if deemed necessary, ensuring critical operations are eventually completed.
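The tiers above can be approximated in a single consumer-side function. This is a sketch under stated assumptions: it collapses the first two tiers into one retry loop, treats `ConnectionError` as a transient fault and `ValueError` as a poisonous message, and uses a plain list in place of a real dead-letter queue. None of these choices come from the talk.

```python
import random
import time
from typing import Callable, List


def process_with_retries(
    event: dict,
    handler: Callable[[dict], None],
    dead_letters: List[dict],
    max_attempts: int = 3,
    base_delay_s: float = 0.01,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Retry transient failures with exponential back-off and jitter;
    park the event in a dead-letter list when retrying cannot help."""
    for attempt in range(1, max_attempts + 1):
        try:
            handler(event)
            return True
        except ConnectionError:
            # Transient fault: wait, then retry. Jitter spreads out retries
            # from many consumers so they do not all hit the service at once.
            if attempt < max_attempts:
                delay = base_delay_s * 2 ** (attempt - 1)
                sleep(delay + random.uniform(0, delay))
        except ValueError as exc:
            # Poisonous message: retrying will never succeed, so stop now.
            dead_letters.append({"event": event, "reason": str(exc)})
            return False
    # Retries exhausted: dead-letter for a human to investigate and replay.
    dead_letters.append({"event": event, "reason": "retries exhausted"})
    return False


calls = {"n": 0}


def flaky_handler(event: dict) -> None:
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("temporary network glitch")


def strict_handler(event: dict) -> None:
    if "amount_pence" not in event:
        raise ValueError("missing amount_pence")


def no_sleep(_seconds: float) -> None:
    """Skip real waiting in this demo."""


dead_letters: List[dict] = []
ok = process_with_retries({"amount_pence": 100}, flaky_handler, dead_letters, sleep=no_sleep)
poisoned = process_with_retries({"payment_id": "pay-002"}, strict_handler, dead_letters, sleep=no_sleep)
```

The flaky handler succeeds on its third attempt, while the malformed event goes straight to the dead-letter list without consuming any retries.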

Plug-and-Play Capability for New Features

As EDAs mature, they enable a "plug-and-play" approach for introducing new capabilities. If core systems like payments or accounts publish well-defined domain events, new services, such as a rewards program, can be built by simply subscribing to these existing events. This eliminates the need for direct integrations with the core systems, accelerating development and reducing dependencies. For example, a new rewards service could automatically know when a client is onboarded, an account is created, or a payment is processed, simply by consuming the relevant events.

Navigating the Challenges: What Hurts and What Helps

While the benefits are substantial, Tacey-Green candidly addressed the inherent challenges of implementing EDAs, particularly in a regulated context.

Human and Organizational Hurdles

The most significant challenge, he noted, is not technological but human. "Event-driven architectures are hard for people, mainly people who have not yet worked on those architectures before," he stated. This learning curve can impact the speed of delivery, with new team members potentially taking months to reach parity with experienced engineers. The shift in paradigm requires architects and developers to think differently, moving from imperative to declarative programming models and embracing concepts like eventual consistency.

What helps:

- Developer Platforms and Paved Roads: Establishing a robust developer platform with pre-built service templates and application modules can significantly ease the adoption of EDAs. These "paved roads" provide developers with standardized, well-architected starting points, abstracting away much of the complexity.

- Comprehensive Training and Enablement: Investing in dedicated training programs is crucial. Tacey-Green recounted an effective approach where an enablement team worked intensively with a delivery team for a week, combining theoretical training with hands-on design and development of a small, production-ready EDA system. This immersive approach fosters confidence and practical understanding.

- Aligning on Standards and Principles: Early standardization of event contracts, permission models, and technology choices across the organization is vital. This ensures consistency and interoperability, preventing teams from encountering vastly different eventing paradigms when consuming events from other domains.

The Perils of Duplicated or Lost Events

In regulated industries like banking and aviation, the loss or duplication of events can have severe financial and compliance consequences. For example, a lost payment event could mean a landlord never receives rent, while a duplicated payment could lead to customer disputes and financial penalties.

What helps:

- Inbox and Outbox Patterns: Tacey-Green strongly advocated for the implementation of the outbox pattern for producers and the inbox pattern for consumers.

  - Outbox Pattern: When a producer raises an event, the event is first saved to a dedicated "outbox" table within the same transactional boundary as the data modification. A separate dispatcher then publishes the event to the event stream. Because the event is committed together with the data, it is not lost if publishing fails after the data is saved; the dispatcher picks it up on its next run.

  - Inbox Pattern: On the consumer side, the inbox pattern ensures that events are not processed multiple times. Incoming events are first recorded in an inbox table, keyed by their unique identifiers. The business logic then processes events from this inbox, and an event whose identifier has already been recorded is skipped. This protects against duplicate events delivered by eventing technologies (which often guarantee "at-least-once" delivery).

- Developer Platform Integration: Building these patterns directly into the developer platform ensures that all teams benefit from this crucial protection without needing to reimplement complex logic themselves.
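The outbox and inbox patterns can be sketched with SQLite from the Python standard library. Several simplifications are assumed here: the table and function names are hypothetical, producer and consumer share one in-memory database purely to keep the example self-contained, and the consumer records the event id and performs its work in a single transaction, which is one common variant of the inbox pattern rather than the only one.

```python
import json
import sqlite3

db = sqlite3.connect(":memory:")
db.executescript("""
    CREATE TABLE payments (id TEXT PRIMARY KEY, amount_pence INTEGER);
    CREATE TABLE outbox (event_id TEXT PRIMARY KEY, body TEXT, dispatched INTEGER DEFAULT 0);
    CREATE TABLE inbox (event_id TEXT PRIMARY KEY);
    CREATE TABLE notifications (payment_id TEXT);
""")


def record_payment(payment_id: str, amount_pence: int) -> None:
    """Producer: the state change and its event commit together or not at all."""
    body = json.dumps({"type": "payment_processed", "payment_id": payment_id})
    with db:  # one transaction; rolls back on any error
        db.execute("INSERT INTO payments VALUES (?, ?)", (payment_id, amount_pence))
        db.execute("INSERT INTO outbox (event_id, body) VALUES (?, ?)",
                   (f"evt-{payment_id}", body))


def dispatch_outbox(publish) -> None:
    """Dispatcher: publish pending rows, then mark them as dispatched. A crash
    between the two steps re-sends the event, hence at-least-once delivery."""
    rows = db.execute("SELECT event_id, body FROM outbox WHERE dispatched = 0").fetchall()
    for event_id, body in rows:
        publish(event_id, json.loads(body))
        with db:
            db.execute("UPDATE outbox SET dispatched = 1 WHERE event_id = ?", (event_id,))


def consume(event_id: str, event: dict) -> bool:
    """Consumer: record the event id and do the work in one transaction;
    a duplicate id violates the primary key and is skipped."""
    try:
        with db:
            db.execute("INSERT INTO inbox VALUES (?)", (event_id,))
            db.execute("INSERT INTO notifications VALUES (?)", (event["payment_id"],))
        return True
    except sqlite3.IntegrityError:
        return False


record_payment("pay-001", 1500)
dispatch_outbox(consume)
duplicate_accepted = consume("evt-pay-001", {"payment_id": "pay-001"})  # redelivery
```

The redelivered event is rejected by the inbox's primary key, so only one notification row exists even though the event arrived twice.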

The Challenge of Breaking Event Contracts

Events form a contract between producers and consumers. Once an event is published to an immutable event stream, it cannot be easily altered. Breaking these contracts by changing event structures or data can lead to cascading failures in consumer systems.

What helps:

- Treating Events as API Contracts: Tacey-Green advised treating event contracts with the same care as API contracts. This involves designing events thoughtfully and avoiding breaking changes whenever possible.

- Versioning Events: When breaking changes are unavoidable, versioning events, similar to API versioning (e.g., `v1`, `v2`), is essential. A `data_version` property within the event metadata allows consumers to handle different versions gracefully.

- Separating Domain and Integration Events: Differentiating between internal "domain events" (specific to a single bounded context) and external "integration events" (used to communicate between domains) is critical. This prevents the accidental exposure and contractual commitment of internal domain concepts that might need to change later. Integration events should be carefully curated and transformed from domain events before being published externally.
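Both ideas, versioned contracts and the domain-to-integration transformation, can be illustrated together. Only the `data_version` property comes from the talk; the event names, the field layouts of the two versions, the `ledger_shard` field, and the hardcoded currency are hypothetical and exist only to make the sketch concrete.

```python
from typing import Optional


def to_integration_event(domain_event: dict) -> Optional[dict]:
    """Publisher side: expose a curated, versioned view of an internal event."""
    if domain_event["type"] != "PaymentLedgerEntryPosted":  # hypothetical internal name
        return None  # not every domain event is published outside the domain
    return {
        "type": "payment_processed",
        "data_version": 2,
        "payment_id": domain_event["payment_id"],
        "amount": {"value_pence": domain_event["amount_pence"], "currency": "GBP"},
        # Internal fields such as ledger_shard are deliberately left out.
    }


def read_amount_pence(event: dict) -> int:
    """Consumer side: branch on data_version instead of guessing the shape."""
    version = event.get("data_version", 1)
    if version == 1:
        return event["amount_pence"]  # hypothetical v1: flat integer field
    if version == 2:
        return event["amount"]["value_pence"]  # hypothetical v2: nested amount
    raise ValueError(f"unsupported data_version: {version}")


domain_event = {"type": "PaymentLedgerEntryPosted", "payment_id": "pay-001",
                "amount_pence": 1500, "ledger_shard": 7}
v2_event = to_integration_event(domain_event)
v1_event = {"type": "payment_processed", "data_version": 1, "amount_pence": 900}
```

Because the internal event name and `ledger_shard` never leave the payments domain, they can change later without breaking any consumer's contract.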

Event Ordering and Its Implications

Cloud-native eventing technologies are often optimized for scale and may not guarantee event order. In scenarios where order is critical (e.g., processing a series of transactions for a single account), this can lead to issues like allowing duplicate high-value payments if the balance update event hasn’t been processed yet.

What helps:

- Explicit Ordering with Versioning: Events can be stamped with sequence numbers or version identifiers. Consumers can then enforce order by only processing events in the expected sequence, backing off if an earlier event in the sequence has not yet arrived. This approach, while effective, can impact scalability.

- Implicit Ordering through Domain Logic: Alternatively, domain logic can implicitly enforce ordering. For instance, a system might be designed to only process a payment event if a "beneficiary created" event has already been processed. This leverages inherent domain rules to manage order without explicit event stamps, often proving more scalable.
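Explicit ordering with sequence numbers can be sketched as follows. The `OrderedConsumer` class and the `sequence` field are assumptions for illustration, and this version buffers early arrivals in memory rather than backing off and letting the broker redeliver them, which is a simplification of the approach described above.

```python
from typing import Callable, Dict


class OrderedConsumer:
    """Applies events for one account strictly in sequence-number order."""

    def __init__(self, apply: Callable[[dict], None]) -> None:
        self._apply = apply
        self._next_sequence = 1
        self._pending: Dict[int, dict] = {}  # events that arrived early

    def receive(self, event: dict) -> None:
        sequence = event["sequence"]
        if sequence < self._next_sequence:
            return  # already applied; a redelivered duplicate
        self._pending[sequence] = event
        # Drain every event that is now contiguous with what has been applied.
        while self._next_sequence in self._pending:
            self._apply(self._pending.pop(self._next_sequence))
            self._next_sequence += 1


applied = []
consumer = OrderedConsumer(lambda event: applied.append(event["name"]))
consumer.receive({"sequence": 2, "name": "payment_processed"})  # held back
consumer.receive({"sequence": 1, "name": "payment_initiated"})  # releases both
consumer.receive({"sequence": 1, "name": "payment_initiated"})  # ignored duplicate
```

The scalability cost mentioned above is visible even here: a single missing event stalls everything behind it, and the consumer has to keep per-account state to know what it is waiting for.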

A Holistic View: The Payments and Communications Example

Tacey-Green concluded by illustrating these concepts with a detailed diagram depicting the flow of events between "payments" and "communications" domains within a banking context. The diagram showcased the journey of a payment from an API call, through the outbox pattern for event publishing, into the event stream, and then consumed by the communications domain via an inbox pattern. It highlighted the transformation of domain events into integration events, the role of publishers in filtering, aggregating, and transforming events, and the overall protection mechanisms implemented to ensure reliability and compliance. This visual representation underscored how these architectural patterns, when integrated into a developer platform, can empower teams to build robust and scalable EDAs in regulated environments.

The discussion also touched upon key questions from the audience, including defining event versions, proving completeness of event streams for auditors (SOX compliance), managing the cost of deduplication, handling a large number of integration events, and the trade-offs between lean and fat events. Tacey-Green’s responses emphasized practical solutions and the importance of careful design and trade-off analysis.

Ultimately, Tacey-Green’s presentation offered a comprehensive guide to navigating the complexities of event-driven architectures in highly regulated industries, underscoring that with careful planning, robust tooling, and a focus on human factors, these powerful architectural patterns can be successfully implemented to drive innovation and efficiency.