OLT-1 Achieves Autonomous Self-Evolution Through Scientific Method-Based Improvement Loop

Fallen Angel Systems (FAS) has unveiled a significant advancement in artificial intelligence development with OLT-1, an AI model that employs an autonomous evolution system mirroring the scientific method. This groundbreaking approach allows OLT-1 to diagnose its own failures, hypothesize solutions, test them rigorously in a controlled sandbox environment, and only promote functional improvements, all without direct human intervention in the core improvement cycle. Human oversight is reserved for the final promotion of stable advancements. This marks a departure from traditional AI development, which typically relies on slow, expensive, and error-prone human-driven retraining cycles.

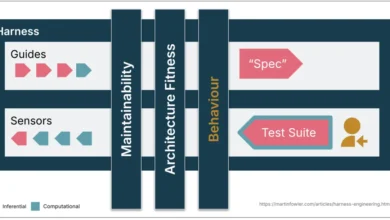

The core of OLT-1’s self-improvement lies in its meticulously designed five-step evolution loop: Diagnose, Hypothesize, Sandbox, Compare, and Promote or Reject. This cycle is currently executed across a comprehensive test suite comprising 407 distinct tests per evolution cycle.

The Evolution Loop: A Closer Examination

The Diagnosis phase involves running the entire suite of tests to pinpoint every failure mode. These failures are then meticulously categorized based on their origin. Is the issue rooted in the encoder’s inability to detect specific concepts, the reasoning circuits producing erroneous outcomes, or the decoder generating incoherent text? This granular analysis is crucial for targeted interventions.

Following diagnosis, the Hypothesize step comes into play. Leveraging the dominant failure source and historical intervention data, OLT-1 proposes potential fixes. The available options are diverse and strategically chosen: INCREASE_EPOCHS (extending training on existing data), ENCODER_RETRAIN (retraining the encoder on identified weak concepts), REASONING_RETRAIN (directly addressing issues within the reasoning circuits), COMBINED (a more holistic approach involving encoder and decoder retraining with knowledge replay), or TARGETED_DATA (decoder-specific training pairs).
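The selection step described above can be sketched as follows. This is a minimal illustration, not FAS's implementation: the five intervention names come from the article, but the `CANDIDATES` mapping, the `hypothesize` function, and the scoring rule (mean past pass-rate delta) are assumptions made for the example.

```python
from collections import defaultdict
from enum import Enum


class Intervention(Enum):
    INCREASE_EPOCHS = "increase_epochs"
    ENCODER_RETRAIN = "encoder_retrain"
    REASONING_RETRAIN = "reasoning_retrain"
    COMBINED = "combined"
    TARGETED_DATA = "targeted_data"


# Hypothetical mapping from failure source to candidate fixes, in preference order.
CANDIDATES = {
    "encoder": [Intervention.ENCODER_RETRAIN, Intervention.COMBINED],
    "reasoning": [Intervention.REASONING_RETRAIN, Intervention.INCREASE_EPOCHS],
    "decoder": [
        Intervention.TARGETED_DATA,
        Intervention.COMBINED,
        Intervention.INCREASE_EPOCHS,
    ],
}


def hypothesize(dominant_source, history):
    """Pick the candidate with the best mean past pass-rate delta.

    history is a list of (Intervention, delta) tuples from earlier cycles.
    Untried interventions score 0.0, so they beat ones that previously hurt.
    """
    deltas = defaultdict(list)
    for intervention, delta in history:
        deltas[intervention].append(delta)

    def mean_delta(intervention):
        past = deltas.get(intervention)
        return sum(past) / len(past) if past else 0.0

    # max() returns the first maximal element, so ties keep preference order.
    return max(CANDIDATES[dominant_source], key=mean_delta)
```

With an empty history, `hypothesize("decoder", [])` returns `Intervention.TARGETED_DATA`; if that intervention has already produced a negative delta, the next untried candidate is chosen instead.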

The Sandbox phase is where proposed fixes are rigorously tested. The target component of OLT-1 is forked, and it undergoes training using relevant data, incorporating spaced repetition techniques. Crucially, older data examples are interleaved to prevent catastrophic forgetting – a common pitfall in AI model development. The performance of this sandboxed version is then re-evaluated against the same comprehensive test suite.
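The interleaving of older examples with new training data might look like the sketch below. It covers only the mixing step, not the spaced-repetition schedule; `interleave_replay`, `replay_ratio`, and the seed handling are hypothetical names and parameters chosen for illustration.

```python
import random


def interleave_replay(new_examples, old_examples, replay_ratio=0.5, seed=0):
    """Mix a sample of older examples into the new training data.

    replay_ratio is the number of old examples added per new example
    (an assumed knob; the article does not state the actual ratio).
    """
    rng = random.Random(seed)
    n_old = min(len(old_examples), int(len(new_examples) * replay_ratio))
    batch = list(new_examples) + rng.sample(list(old_examples), n_old)
    rng.shuffle(batch)  # interleave so old and new examples are not segregated
    return batch
```

Shuffling the combined list means the sandboxed component sees old and new material mixed throughout training rather than in separate blocks.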

In the Compare step, the system assesses the delta in the pass rate of the sandboxed model against its predecessor. However, this comparison goes beyond mere performance metrics. A critical element is the evaluation of retention. An intervention that boosts performance in one domain while degrading capabilities in another is automatically flagged for rejection. This ensures that improvements are holistic and do not come at the cost of existing functionality.

Finally, the Promote or Reject decision is made. If the sandboxed model demonstrates a net improvement without any unacceptable regressions in other areas, its updated weights are promoted to replace the production version. Conversely, if the intervention proves detrimental or fails to yield significant improvements, it is discarded, and the loop iterates to find a more effective solution.
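The Compare and Promote or Reject steps can be illustrated with a small decision function. This is a sketch under assumptions: `SuiteResult`, `decide`, and both thresholds are hypothetical, and the overall pass rates in the usage note are invented for the example.

```python
from dataclasses import dataclass


@dataclass
class SuiteResult:
    pass_rate: float   # overall pass rate across the suite
    per_domain: dict   # domain name -> pass rate for that domain


def decide(baseline, candidate, min_gain=0.01, max_regression=0.05):
    """Return ("promote" | "reject", list of regressed domains).

    A candidate is rejected if any domain drops by more than max_regression,
    regardless of how much the overall pass rate improved.
    """
    regressed = sorted(
        domain
        for domain, base in baseline.per_domain.items()
        if base - candidate.per_domain.get(domain, 0.0) > max_regression
    )
    if regressed:
        return "reject", regressed
    if candidate.pass_rate - baseline.pass_rate < min_gain:
        return "reject", []
    return "promote", []
```

For example, a candidate whose overall pass rate rises from 0.500 to 0.565 while classification falls from 0.67 to 0.0 and quantity from 0.25 to 0.0 yields `("reject", ["classification", "quantity"])`. In the system described here, a "promote" result would still be subject to human review.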

Uncovering Hidden Flaws: When Evolution Outpaced Human Observation

A pivotal moment that underscored the power of OLT-1’s self-evolutionary system occurred in April. Following a 1500-round overnight training session, the observed performance gains were unexpectedly modest, registering only a minor increase in understanding. This anomaly prompted a deeper investigation, as human observers noted that the trendline for model improvement appeared "too flat," suggesting a subtler issue than immediately apparent.

To dissect the problem, the training session was broken down into five sequential 100-round batches. This granular view revealed a startling pattern: Batch 4 showed a significant spike in performance, reaching 14.3% good outputs, only for Batch 5 to abruptly decline back to 10%. More alarmingly, classification accuracy plummeted from 67% to 0%, and quantity understanding dropped from 25% to 0% – all occurring between training cycles.

This per-batch cliff exposed two compounding bugs that had remained silent and invisible to standard diagnostic tools. There were no error traces, no failing tests reported by the existing suite, and aggregate metrics offered no clues. It was the meticulous per-batch analysis, driven by human suspicion, that made these insidious issues discoverable.
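A per-batch comparison of this kind could be automated along the following lines. The function name `find_cliffs`, the metric keys, and the 0.2 threshold are assumptions; the sample values are the figures quoted above, read here as Batch 4 followed by Batch 5.

```python
def find_cliffs(batches, threshold=0.2):
    """Flag metrics that fall by at least `threshold` between consecutive batches.

    batches is a list of dicts mapping metric name -> value in [0, 1].
    Returns tuples of (previous index, current index, metric, before, after).
    """
    cliffs = []
    for i in range(1, len(batches)):
        prev, curr = batches[i - 1], batches[i]
        for metric, before in prev.items():
            after = curr.get(metric)
            if after is not None and before - after >= threshold:
                cliffs.append((i - 1, i, metric, before, after))
    return cliffs


batch_4 = {"good_outputs": 0.143, "classification": 0.67, "quantity": 0.25}
batch_5 = {"good_outputs": 0.10, "classification": 0.0, "quantity": 0.0}
print(find_cliffs([batch_4, batch_5]))
# [(0, 1, 'classification', 0.67, 0.0), (0, 1, 'quantity', 0.25, 0.0)]
```

The headline metric (good outputs) moves by only about four points and is not flagged, while the per-category collapse is. That is the pattern an aggregate trendline hides.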

Bug 1: Spaced-Repetition Replay’s Silent Erasure of Compound Concepts

The first discovered bug pertained to the spaced-repetition sampling mechanism used in the evolution’s replay process. This mechanism was designed to reconstruct concept dictionaries from response text by performing whitespace word-matching. However, this approach inadvertently dropped 36 concepts whose names did not appear literally within their own responses. These included crucial concepts such as type_of, example_of, not_equal, too_much, too_little, refusal, self_knowledge, affirmation, meta_awareness, preference, capability, all three primary emotions, all four physics outcomes, time markers, colors, and conversation bundles.

This meant that 36 concepts were silently dropped from the replay data in every cycle. The very mechanism intended to prevent forgetting was, in effect, causing it, because it could not see concepts whose names never appear literally in their own responses. The model was losing knowledge precisely because its retention mechanism was flawed.
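The failure is easy to reproduce in miniature. The sketch below is a reconstruction of the described behavior, not the original code; `concepts_from_text` and the sample responses are hypothetical.

```python
def concepts_from_text(response_text, vocabulary):
    """Recover concepts by whitespace word-matching (the flawed approach)."""
    words = set(response_text.lower().split())
    return {concept for concept in vocabulary if concept in words}


vocabulary = {"cat", "type_of", "refusal"}

# "cat" appears literally, but "type_of" never does, so it is dropped.
print(concepts_from_text("A cat is a type of animal", vocabulary))  # {'cat'}

# A refusal response does not contain the word "refusal" at all.
print(concepts_from_text("I cannot help with that", vocabulary))    # set()
```

Any concept that names a relation, a behavior, or a category rather than a word in the response is invisible to this kind of matching.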

The fix involved a fundamental shift in how concept data was handled. Instead of attempting to reconstruct concepts from text, OLT-1 was reprogrammed to decode the stored key_vector (float32 bytes representing concept activations) directly. This ensured that all 311 concepts were preserved during replay. Empirical verification demonstrated a significant increase in usable entries, from 13,661 to 20,012, and a comprehensive coverage of all 311 concepts, up from 275.
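Decoding the stored vector directly might look like the following. The byte layout is an assumption: this sketch treats `key_vector` as little-endian float32 values, one per concept in a fixed vocabulary order, with an activation threshold of 0.5. None of those details are stated in the source, and `decode_key_vector` is a hypothetical name.

```python
import struct


def decode_key_vector(blob, concept_names, threshold=0.5):
    """Return the set of concepts whose stored activation exceeds `threshold`.

    Assumes `blob` holds len(concept_names) little-endian float32 values.
    """
    n = len(concept_names)
    if len(blob) != 4 * n:
        raise ValueError(f"expected {4 * n} bytes, got {len(blob)}")
    activations = struct.unpack(f"<{n}f", blob)
    return {name for name, a in zip(concept_names, activations) if a > threshold}


names = ["cat", "type_of", "refusal"]
blob = struct.pack("<3f", 0.0, 1.0, 0.9)
print(sorted(decode_key_vector(blob, names)))  # ['refusal', 'type_of']
```

Because the concepts are read from the stored activations rather than inferred from the response wording, a concept survives replay whether or not its name appears in the text.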

Bug 2: The Grader’s Blind Spot: 73% of Vocabulary Unseen

The second critical bug revealed a severe deficiency in the evaluation suite itself. The existing 79-test decoder suite covered only 83 out of the 311 total concepts, representing a mere 27% coverage. This meant that OLT-1’s evolution system could silently trade untested concepts for tested ones and still receive promotion. This is precisely what occurred in Batch 5, where an intervention scored a seemingly positive +0.065 improvement and was promoted, despite entirely destroying classification capabilities.

The core issue was not that the model was failing, but that the grader was fundamentally blind to the extent of the failure. A promotion could occur based on a narrow set of measured improvements, while significant degradation in unmeasured areas went unnoticed.

Fortifying Against Future Failures: Three Layers of Defense

To prevent such "class-of-failure" bugs from recurring, FAS implemented three robust defense layers:

- Layer 1: The Siren. The `test_suite.py` script was enhanced to check concept coverage at every evolution engine initialization. If any vocabulary concept has zero associated tests, an alarm is immediately triggered, ensuring that new concepts are not introduced without adequate testing.

- Layer 2: The Generators. A sophisticated system of per-category template functions, coupled with over 100 per-concept overrides, was developed to auto-generate 228 "floor-coverage" tests. This ensures that every vocabulary concept now has at least one associated test, eliminating blind spots.

- Layer 3: The Retention Check. This layer samples real `(key_vector, response_text)` pairs directly from the decoder bank. It then synthesizes prompts from active concepts and utilizes meaningful words from stored responses as expected keywords for verification. This process generates 100 retention tests per cycle, which automatically scale with the growth of the model’s memory storage (referred to as the hippocampus).
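The Layer 1 check amounts to a set difference between the vocabulary and the concepts referenced by tests. A minimal sketch, assuming each test is a dict with a `concepts` list (the real test format in `test_suite.py` is not shown in the source; `CoverageError` and both function names are hypothetical):

```python
class CoverageError(RuntimeError):
    """Raised when a vocabulary concept has no associated test."""


def uncovered_concepts(vocabulary, tests):
    """Return the sorted list of concepts that no test references."""
    covered = set()
    for test in tests:
        covered.update(test["concepts"])
    return sorted(set(vocabulary) - covered)


def sound_siren_if_uncovered(vocabulary, tests):
    """Fail loudly at engine initialization if any concept has zero tests."""
    missing = uncovered_concepts(vocabulary, tests)
    if missing:
        raise CoverageError(
            f"{len(missing)} concept(s) have no tests: {', '.join(missing)}"
        )
```

Running this at startup turns a silent blind spot into a hard failure before any evolution cycle begins.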

The combined test suite now comprises 79 hand-written tests, 228 auto-generated tests, and 100 retention tests, totaling 407 tests per cycle. This has dramatically increased grader coverage from 27% to a comprehensive 100%.
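The 100 retention tests in that total come from sampling the decoder bank. One way such tests could be synthesized is sketched below. It assumes the active concepts have already been decoded from each `key_vector`; the stopword list, the prompt format, and the name `make_retention_tests` are illustrative only.

```python
import random

# Small illustrative stopword list; a real filter would be larger.
STOPWORDS = {"a", "an", "the", "is", "are", "of", "to", "and", "it",
             "that", "in", "i", "with"}


def make_retention_tests(bank, n_tests=100, seed=0):
    """Build retention tests from (active_concepts, response_text) entries."""
    rng = random.Random(seed)
    sample = rng.sample(bank, min(n_tests, len(bank)))
    tests = []
    for active_concepts, response_text in sample:
        words = (w.strip(".,!?") for w in response_text.lower().split())
        keywords = [w for w in words if w.isalpha() and w not in STOPWORDS]
        if not keywords:
            continue  # nothing meaningful to check for in this response
        tests.append({
            "prompt": " ".join(sorted(active_concepts)),
            "expected_keywords": keywords,
        })
    return tests
```

Because the tests are drawn from what the bank actually stores, they follow the model's memory as it grows instead of relying on a hand-maintained list.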

The Verification Run: A Return to Stability

Following the implementation of these fixes, the same 5-batch confirmation test was rerun. The results demonstrated a complete recovery and stabilization of performance, with no recurrence of the silent degradations observed previously. The key victory, however, lies not just in the numerical improvement but in the closure of the failure mode itself. Both silent forgetting during replay and blind-spot promotions, previously significant vulnerabilities, are now actively guarded against by the implemented "sirens."

Dream Consolidation: Learning During Dormancy

Beyond the active evolution loop, OLT-1 also employs a "dream consolidation" process, a mechanism that mirrors biological sleep for memory consolidation. This process involves three tiers of dream cycles:

- Reinforcement of Important Patterns: Frequently accessed or critical patterns are reinforced, strengthening their representation within the model.

- Flagging Weak Areas: Areas of the model exhibiting lower confidence or less robust performance are identified for potential future re-training or targeted intervention.

- Hippocampal Maintenance: This ongoing process ensures the active maintenance and integrity of the model’s memory stores, analogous to how the hippocampus in biological brains functions to preserve and organize knowledge.

This "dreaming" phase allows OLT-1 to process and consolidate learned information, much like humans consolidate memories during sleep, thereby improving long-term retention and overall model stability.

The Teacher Loop: Fueling the Evolution Engine

The evolution system’s need for training data is met by the "teacher loop," a process previously detailed. In essence, an external model generates conversations tailored to OLT-1’s current conceptual understanding. OLT-1 then responds, and a teacher model evaluates these responses. Corrections and feedback from the teacher are channeled into OLT-1’s training data and its hippocampus. Importantly, the teacher model itself evolves in tandem with OLT-1, updating its categories, evaluation criteria, and correction examples as OLT-1’s capabilities expand.

Append-Only Growth: Preserving Knowledge Incrementally

A fundamental principle guiding OLT-1’s development is "append-only growth." This philosophy emerged from hard-learned lessons. Early attempts at full decoder curriculum retraining on extensive datasets, even with significant data replay, resulted in catastrophic forgetting. In one such attempt, the pass rate dropped from 45.6% to 31.6%, necessitating a restoration from backup.

The current, strictly incremental approach ensures that all additions are additive. Every piece of knowledge is preserved, and no concept, once learned, can be lost unless the entire memory system is deleted. This incremental growth strategy is critical for building robust and reliable AI systems that do not suffer from the fragility of older retraining paradigms.
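As a data-structure analogy, an append-only store exposes no update or delete operation, so the set of stored concepts can only grow. The class below is a hypothetical illustration of that property, not a description of OLT-1's actual memory format.

```python
class AppendOnlyBank:
    """A store that supports adding and reading entries, never removing them."""

    def __init__(self):
        self._entries = []

    def append(self, concept, response_text):
        """Add an entry and return its index."""
        self._entries.append((concept, response_text))
        return len(self._entries) - 1

    def concepts(self):
        """Return the set of all concepts stored so far."""
        return {concept for concept, _ in self._entries}

    def __len__(self):
        return len(self._entries)
```

Under this design, the set of concepts after any sequence of `append` calls is always a superset of the set before it.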

The Significance of Self-Evolution in AI Security

At Fallen Angel Systems, a recurring pattern observed in AI security is the reactive nature of human-driven patch processes. Models are deployed, attacks emerge, and human intervention is required to identify and fix vulnerabilities. This response time is typically measured in days or weeks, a period that can be critical in the context of rapidly evolving cyber threats.

OLT-1’s autonomous evolution system presents a paradigm shift. It envisions AI systems that can proactively conduct their own diagnostics, identify weaknesses, propose and test fixes, and only implement improvements that demonstrably enhance capabilities without compromising existing functionality. This self-improvement loop operates on a timescale of minutes, not weeks, offering a dramatically accelerated response to emergent issues.

This is not to be confused with uncontrolled or "dangerous" autonomous AI. Human review remains a critical gatekeeper for all promotions. However, it represents a significant leap in "useful" autonomy – the system’s ability to catch its own bugs and propose solutions far faster than human teams can, all while mitigating the risk of regressions through rigorous sandbox testing.

The implications for AI security are profound. Imagine a system like FAS Guardian, designed to defend production AI systems, endowed with this self-evolutionary capability. Instead of relying solely on human analysts to detect new attack patterns, such a system could autonomously generate candidate detection rules, rigorously test them against its comprehensive regression suite in a sandbox, and promote only those that prove effective without degrading existing defenses. This points towards a future of AI systems that are not only more capable but also inherently more resilient and self-sufficient in their maintenance and improvement.

Future Trajectory and Open Questions

OLT-1 is currently progressing through Stage 9, with subsequent stages focusing on incorporating conditional reasoning, sequences, arithmetic, code concepts, science, and language quality. The underlying architecture is designed to support these advancements, and the evolution system is poised to drive their improvement as they are integrated.

Several key questions remain at the forefront of FAS’s research agenda, echoing those raised in previous technical discussions: Will this architecture scale effectively to billions of parameters? Can architectural consent, the principle of components working harmoniously, be maintained at such scales? Can self-evolution keep pace with sophisticated adversarial pressures in production environments? And critically, can the evaluation methodologies for developmental AI evolve rapidly enough to accurately measure the capabilities they are designed to assess?

Fallen Angel Systems is actively pursuing answers to these questions. For individuals or organizations interested in contributing to this frontier of AI development, FAS is actively seeking collaborators.

(Note: This narrative arc continues the observation that human intuition, exemplified by Josh’s "feeling" about the numbers, plays a crucial role in triggering deeper investigations that reveal fundamental bugs, even within advanced autonomous systems. This is the second instance of Josh’s observations leading to critical bug discoveries.)

Origin is developed at Fallen Angel Systems utilizing the Genesis framework (USPTO Application #64/017,567). FAS Guardian provides sub-3ms prompt injection defense for production AI systems. FAS Judgement is an open-source attack console designed to identify vulnerabilities. The company’s focus spans Defense, Offense, and Creation in the AI landscape.

For more information, visit fallenangelsystems.com or explore Judgement on GitHub. For consulting inquiries or further discussion, contact [email protected].