caching


Artificial Intelligence

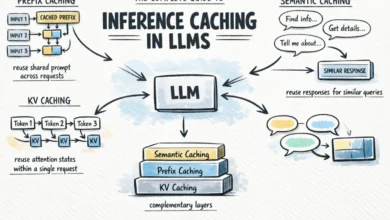

The Complete Guide to Inference Caching in Large Language Models

As the deployment of large language models (LLMs) transitions from experimental research to enterprise-scale production, the industry has encountered a…
