Microsoft 38TB AI Data Leak Details: A Deep Dive

The details of Microsoft’s 38TB AI data leak paint a concerning picture of potential vulnerabilities in the tech giant’s systems. This massive leak raises critical questions about data security in the AI industry and about its potential impact on users, businesses, and researchers alike. The sheer scale of the leak, combined with uncertainty about which types of data were compromised, makes this a significant event that demands careful consideration.

What exactly was leaked? How vulnerable are other AI systems? Let’s explore the potential consequences and responses to this data breach.

The leak, reported to involve 38 terabytes of data, potentially includes sensitive training data, user information, and research papers. Understanding the specifics of this incident is crucial to comprehending the broader implications of AI data handling. This article will delve into the technical, legal, and societal impacts of this substantial leak. We will also examine industry best practices to prevent similar breaches in the future.

Overview of the Data Leak

The recent alleged leak of 38TB of Microsoft AI data has sparked considerable concern across various sectors. While the specifics surrounding the leak remain unclear, the sheer volume of data involved underscores the potential for significant ramifications, particularly concerning privacy and security. The potential implications for researchers, businesses, and the general public warrant careful consideration.

Summary of the Alleged Leak

The reported leak involves a massive 38TB dataset, allegedly containing data used to train Microsoft’s AI systems. The exact nature of this data is not publicly known, but it may encompass a wide range of information, potentially including user interactions, application data, and internal company records. The exceptional scale of the leak raises concerns about the potential for misuse and exploitation.

Scale and Nature of the Leaked Data

The volume of 38TB is exceptionally large: at typical file sizes, it could hold millions of high-resolution images or hundreds of millions of documents. The precise contents of the data remain undisclosed, but they likely include a mix of structured and unstructured information, potentially including sensitive personal data, proprietary business information, and research findings. The unknown nature of the data further complicates any assessment of its potential impact.
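To put 38TB in perspective, the sketch below converts it into approximate item counts. The per-item sizes (5 MB per high-resolution image, 100 KB per document) are illustrative assumptions rather than figures from the leak, and the calculation uses decimal units (1 TB = 10^12 bytes).

```python
# Rough scale estimate for a 38 TB dataset.
# Assumptions (illustrative only): decimal units, 5 MB per
# high-resolution image, and 100 KB per text document.

KB = 10**3   # bytes in a decimal kilobyte
MB = 10**6   # bytes in a decimal megabyte
TB = 10**12  # bytes in a decimal terabyte


def items_that_fit(total_bytes: int, bytes_per_item: int) -> int:
    """Return how many whole items of the given size fit in total_bytes."""
    return total_bytes // bytes_per_item


leak_size = 38 * TB
images = items_that_fit(leak_size, 5 * MB)       # assumed image size
documents = items_that_fit(leak_size, 100 * KB)  # assumed document size

print(f"~{images:,} high-resolution images")  # ~7,600,000 high-resolution images
print(f"~{documents:,} documents")            # ~380,000,000 documents
```

Changing the assumed file sizes shifts the counts proportionally, but under any realistic assumption the dataset holds millions of images or hundreds of millions of documents.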

Potential Impact on Stakeholders

The leak’s impact could reverberate across various stakeholders. Users might face compromised privacy if the leak includes personal information. Businesses could experience damage to their competitive edge if the leak exposes proprietary information. Researchers could face delays or setbacks in their projects if the leaked data compromises their research. The potential for misuse of the data by malicious actors is also a significant concern.

Source and Method of the Leak

Unfortunately, the source and method of the data leak remain undisclosed. Without this crucial information, assessing the full scope of the incident and determining appropriate responses is significantly hampered. Further investigations are needed to determine how the leak occurred and the extent of its reach.

Timeline of Events (Hypothetical)

| Date | Description | Impact Category |
|---|---|---|
| 2024-10-26 | Initial reports of a data breach surface online. | Privacy |
| 2024-10-27 | Microsoft confirms the incident and initiates an investigation. | Security |
| 2024-10-28 | Preliminary assessment of the leak’s scope and nature is released. | Privacy, Security |
| 2024-10-29 | Initial measures to mitigate the impact of the leak are implemented. | Security, Financial |

Technical Aspects of the Leak

The recent Microsoft 38TB AI data leak highlights a critical vulnerability in handling sensitive data, especially in the burgeoning field of artificial intelligence. Understanding the technical aspects of this breach is crucial for both mitigating future risks and improving data security protocols. The leak raises significant questions about the safeguards in place to protect vast quantities of training data, potentially impacting various applications.

Types of Potentially Compromised Data

This leak could have exposed a wide range of data, from training datasets used to develop AI models to internal research papers and potentially even user data. Training data, often composed of vast quantities of text, images, and other formats, is particularly vulnerable. Exposed training data could be used to train competing AI systems, and if attackers were also able to modify it, tampered training data could introduce biases into the resulting models.

The leak could also expose confidential research papers containing novel algorithms and methodologies, giving competitors an unfair advantage. User data, while potentially less extensive in this case, remains a concern, especially if linked to the training data or used for experimentation.
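One common safeguard for training-data integrity is to record a SHA-256 hash of every training file in a manifest while the data is known to be good, then re-verify the files before each training run. The sketch below assumes a flat manifest mapping relative paths to hex digests, and the file names are hypothetical. The manifest must be stored somewhere that cannot be modified by whoever can write to the data; otherwise an attacker could simply update the hashes.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large training files need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def find_tampered(root: Path, manifest: dict[str, str]) -> list[str]:
    """Return manifest entries that are missing or whose SHA-256 no longer matches."""
    problems = []
    for rel_path, expected_hash in sorted(manifest.items()):
        file_path = root / rel_path
        if not file_path.is_file() or sha256_of(file_path) != expected_hash:
            problems.append(rel_path)
    return problems


# Usage: record hashes while the data is trusted, then re-check later.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    sample = root / "train.csv"  # hypothetical training file
    sample.write_bytes(b"label,text\n1,hello\n")
    manifest = {"train.csv": sha256_of(sample)}

    sample.write_bytes(b"label,text\n0,hello\n")  # simulated label flip
    print(find_tampered(root, manifest))  # ['train.csv']
```

A hash check detects modification but not disclosure, so it complements rather than replaces access controls on the data itself.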

Potential Vulnerabilities in Microsoft’s Systems

Several factors could have contributed to the leak, including vulnerabilities in the cloud storage systems themselves, misconfigurations, or flaws in access control mechanisms. Weak or easily guessed passwords, insufficient multi-factor authentication, and insufficiently monitored access logs could have allowed unauthorized access to sensitive data. Furthermore, inadequate security protocols during data transfer and storage, including encryption protocols and data masking procedures, could have been contributing factors.

Poorly secured or unpatched software on the systems handling the data could also have created vulnerabilities.
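Automated configuration reviews often catch misconfigurations of this kind. The sketch below audits a single storage account. The `StorageConfig` and `SharedLink` types, their fields, and the 30-day expiry limit are hypothetical and do not correspond to any provider’s actual API.

```python
from dataclasses import dataclass, field

MAX_LINK_DAYS = 30  # assumed internal policy limit, not a vendor default
READ_ONLY = {"read", "list"}


@dataclass
class SharedLink:
    """Hypothetical pre-signed link granting access to a storage container."""
    permissions: set[str]
    expires_in_days: int


@dataclass
class StorageConfig:
    """Hypothetical security-relevant settings for one storage account."""
    name: str
    public_access: bool
    mfa_required: bool
    encryption_at_rest: bool
    access_logging: bool
    shared_links: list[SharedLink] = field(default_factory=list)


def audit(config: StorageConfig) -> list[str]:
    """Return human-readable findings for risky settings."""
    findings = []
    if config.public_access:
        findings.append("public access enabled")
    if not config.mfa_required:
        findings.append("MFA not required for administrators")
    if not config.encryption_at_rest:
        findings.append("encryption at rest disabled")
    if not config.access_logging:
        findings.append("access logging disabled")
    for i, link in enumerate(config.shared_links):
        if link.permissions - READ_ONLY:
            findings.append(f"shared link {i} grants more than read/list access")
        if link.expires_in_days > MAX_LINK_DAYS:
            findings.append(f"shared link {i} expires in {link.expires_in_days} days")
    return findings


# Usage: a hypothetical account with logging off and an over-broad, long-lived link.
cfg = StorageConfig(
    name="research-datasets",
    public_access=False,
    mfa_required=True,
    encryption_at_rest=True,
    access_logging=False,
    shared_links=[SharedLink({"read", "write", "delete"}, 365)],
)
for finding in audit(cfg):
    print(finding)
```

Running a check like this on every configuration change, rather than only during periodic audits, shortens the time a risky setting stays exposed.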

Comparison of AI Data Leaks

Various types of AI data leaks have occurred in the past, each with different consequences. Leaks involving training data can lead to the creation of models with biases or the development of competing AI systems, while leaks of research papers can give competitors an unfair advantage in the field. Leaks of user data, though potentially less extensive in this case, can still lead to privacy violations and reputational damage for the organization.

The potential impact of a leak varies significantly depending on the sensitivity and nature of the compromised data. For instance, a leak of medical images used to train an AI diagnostic tool could have catastrophic consequences, while a leak of customer data might lead to financial losses.

Technical Methodologies Potentially Used in the Leak

The methods used in the leak could include unauthorized access to the storage systems, exploiting known vulnerabilities in the software, or gaining access through social engineering tactics. Malicious actors might have employed sophisticated techniques like credential stuffing or phishing attacks to compromise accounts with access to the data. The use of advanced persistent threats (APTs) is also a possibility, where attackers establish long-term access to systems and exfiltrate sensitive data gradually.


Further investigation is needed to determine the precise methods employed in this instance.
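Of the methods listed, credential stuffing is among the easier to detect in authentication logs, because a single source typically fails against many different accounts within a short period. The sketch below flags such sources using a sliding time window. The `LoginAttempt` record, the five-minute window, and the 20-user threshold are assumptions chosen for illustration. Phishing or APT activity would require different signals.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class LoginAttempt:
    timestamp: float  # seconds since the epoch
    source_ip: str
    username: str
    success: bool


def flag_credential_stuffing(attempts, window_seconds=300.0, min_distinct_users=20):
    """Return source IPs whose failed logins hit many distinct usernames in a short window.

    Credential stuffing replays leaked username/password pairs, so a single
    source tends to fail against many different accounts in quick succession.
    """
    failures = defaultdict(list)
    for attempt in attempts:
        if not attempt.success:
            failures[attempt.source_ip].append(attempt)

    flagged = set()
    for ip, fails in failures.items():
        fails.sort(key=lambda a: a.timestamp)
        in_window = Counter()  # username -> failures inside the sliding window
        start = 0
        for attempt in fails:
            in_window[attempt.username] += 1
            # Drop failures that fall outside the window ending at this attempt.
            while attempt.timestamp - fails[start].timestamp > window_seconds:
                oldest = fails[start].username
                in_window[oldest] -= 1
                if in_window[oldest] == 0:
                    del in_window[oldest]
                start += 1
            if len(in_window) >= min_distinct_users:
                flagged.add(ip)
                break
    return flagged


# Usage with documentation-range IPs (RFC 5737) and synthetic events.
attempts = [LoginAttempt(float(t), "203.0.113.7", f"user{t}", False) for t in range(25)]
attempts += [
    LoginAttempt(10.0, "198.51.100.2", "alice", False),  # one typo, then success
    LoginAttempt(12.0, "198.51.100.2", "alice", True),
]
print(flag_credential_stuffing(attempts))  # {'203.0.113.7'}
```

Counting distinct usernames rather than raw failures keeps a single user who repeatedly mistypes a password from being flagged.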

Comparison of Security Protocols in Different Cloud Storage Providers

| Cloud Storage Provider | Security Protocol 1 | Security Protocol 2 | Security Protocol 3 |
|---|---|---|---|
| Microsoft Azure | Robust encryption at rest and in transit | Multi-factor authentication | Regular security audits and penetration testing |
| Amazon Web Services | Advanced encryption standards | Access control lists | Vulnerability scanning |
| Google Cloud Platform | Data masking and anonymization | Security information and event management (SIEM) | Regular security updates |

Different cloud storage providers employ various security protocols to protect data. The table above highlights a few representative controls for each major provider, but it is not exhaustive: Azure, AWS, and Google Cloud all offer encryption at rest and in transit, multi-factor authentication, and fine-grained access controls, so the practical differences lie mainly in defaults, configuration options, and tooling. The choice of provider, and how it is configured, should be based on the specific security requirements of the data being stored.

Legal and Regulatory Implications

The recent 38TB AI data leak at Microsoft raises significant legal and regulatory concerns. Understanding these implications is crucial for assessing the potential damage and ensuring appropriate responses, and navigating the complexities of international data protection laws is paramount in such incidents.

The leak may violate several key data protection regulations, which could trigger legal action and significant financial penalties. Microsoft faces scrutiny over its adherence to established standards for data security and the handling of sensitive information.

Potential Legal Ramifications

The 38TB AI data leak has the potential to trigger legal actions from individuals and organizations whose data was exposed. This includes claims of negligence, breach of contract, and potential violations of privacy laws, like GDPR and CCPA. Individuals whose personal data is compromised might pursue legal remedies for damages.

Regulatory Requirements for Microsoft

Microsoft, as a major technology company handling vast quantities of user data, is subject to stringent regulatory requirements for data security and privacy. These requirements call for proactive data protection measures and documented incident response plans. Failure to comply with these regulations can result in substantial penalties.

Potential Penalties for Data Breaches and Non-Compliance

Penalties for data breaches and non-compliance with regulations can vary widely depending on the jurisdiction and severity of the breach. Significant fines, mandatory data breach notifications, and reputational damage are potential consequences. Examples from recent data breaches illustrate the financial and legal burdens involved.

Possible Legal Actions Against Involved Parties

Possible legal actions against individuals or organizations involved in the leak could range from civil lawsuits to criminal prosecutions, depending on the nature and extent of their involvement. The legal frameworks governing such actions differ across jurisdictions, and the specific actions will depend on the evidence gathered.

Table of Potential Penalties for Data Breaches

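| Regulation | Relevant Clauses | Potential Penalties |
|---|---|---|
| General Data Protection Regulation (GDPR) | Article 32 (Security of processing), Article 33 (Notification of a personal data breach to the supervisory authority) | Fines of up to €10 million or 2% of total worldwide annual turnover, whichever is higher, for infringements of Articles 32 and 33 (Article 83(4)); up to €20 million or 4% for infringements of core principles such as Article 5(1)(f) (Article 83(5)); mandatory breach notifications; and compensation claims for damages (Article 82). |
| California Consumer Privacy Act (CCPA) | Cal. Civ. Code § 1798.150 (consumer right of action after a data breach) and related consumer rights provisions | Civil penalties per violation (higher for intentional violations), statutory damages in consumer lawsuits, and breach notification obligations under California law. |
| Other Regional Data Protection Laws | Specific provisions regarding data security and breach notification | Varying penalties, including fines, investigations, and other enforcement actions depending on the region. |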
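For the GDPR, the fine caps and the notification deadline reduce to simple arithmetic. Under Article 83, the cap is the higher of a fixed amount and a percentage of the undertaking’s total worldwide annual turnover for the preceding financial year. Article 33(1) requires notifying the supervisory authority, where feasible, within 72 hours of becoming aware of a breach. The sketch below computes only these upper bounds: actual fines are set case by case using the factors in Article 83(2), and the turnover figure is hypothetical.

```python
from datetime import datetime, timedelta, timezone

# GDPR Article 83 caps: the HIGHER of a fixed amount (EUR) and a percentage
# of total worldwide annual turnover of the preceding financial year.
FINE_TIERS = {
    "art83_4": (10_000_000, 2),  # covers e.g. Article 32 and Article 33 infringements
    "art83_5": (20_000_000, 4),  # covers e.g. Article 5 principles such as 5(1)(f)
}


def gdpr_fine_cap(annual_turnover_eur: int, tier: str) -> float:
    """Return the maximum possible fine for an undertaking under the given tier."""
    fixed_cap, percent = FINE_TIERS[tier]
    return float(max(fixed_cap, annual_turnover_eur * percent / 100))


def art33_deadline(became_aware: datetime) -> datetime:
    """Latest time to notify the supervisory authority, where feasible (Article 33(1))."""
    return became_aware + timedelta(hours=72)


# Usage with a hypothetical turnover figure (not Microsoft's actual revenue).
turnover = 200_000_000_000
print(gdpr_fine_cap(turnover, "art83_4"))  # 4000000000.0
print(gdpr_fine_cap(turnover, "art83_5"))  # 8000000000.0
print(art33_deadline(datetime(2024, 1, 1, 9, 0, tzinfo=timezone.utc)))  # 2024-01-04 09:00:00+00:00
```

For large companies, the percentage term dominates. For smaller ones, the fixed amount sets the ceiling: at €100 million in turnover, 4% is only €4 million, so the Article 83(5) cap remains €20 million.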

Potential Consequences and Responses

The recent 38TB AI data leak at Microsoft presents a significant challenge, demanding careful consideration of potential repercussions and a swift, comprehensive response. The scale of the leak, coupled with the sensitive nature of the data, necessitates a proactive approach to mitigate damage and restore trust. Understanding the potential ramifications for Microsoft, individual users, and the industry as a whole is crucial.

Financial and Reputational Damage for Microsoft

Microsoft faces significant potential financial and reputational damage. Loss of customer trust and reduced sales are real possibilities, the company’s stock price could be negatively affected, and investor confidence might waver. There is precedent: the 2017 Equifax breach led to a 2019 settlement of up to $700 million with U.S. federal and state authorities, on top of substantial remediation costs, demonstrating the financial impact of such incidents.

Consequences for Individuals Whose Data May Have Been Compromised

Individuals whose data may have been compromised face various potential consequences. Identity theft, financial fraud, and harassment are all serious concerns, and the leak could also lead to privacy violations and reputational damage for affected individuals. Past incidents, such as the 2013 Target breach, which exposed payment card data for about 40 million customers, caused significant distress and inconvenience to victims, underscoring the importance of rapid and transparent communication.

Examples of How Other Companies Have Handled Similar Data Breaches

Various companies have responded to similar data breaches in the past. Some have focused on swift notification of affected individuals, offering support and identity theft protection services. Others have emphasized transparency in their communication, outlining the steps taken to prevent future breaches. A notable example is how Facebook handled its 2018 Cambridge Analytica scandal, which involved a complex interplay of transparency and public relations strategies.

Actions Microsoft Should Take to Mitigate the Consequences

To mitigate the damage, Microsoft should implement a multifaceted strategy. This includes swift and transparent communication with affected individuals, law enforcement agencies, and regulatory bodies. Implementing enhanced security protocols and improving data encryption are crucial to preventing future incidents. Conducting a thorough internal audit and addressing any identified vulnerabilities are also essential.


Table Outlining Various Responses to the Leak

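| Category | Response |
|---|---|
| Internal Communications | |
| External Communications | Swift, transparent communication with affected individuals, law enforcement agencies, and regulatory bodies |
| Security Enhancements | Enhanced security protocols, improved data encryption, and a thorough internal audit that addresses identified vulnerabilities |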

Analysis of Public Response

The alleged leak of 38TB of AI data from Microsoft sparked a significant public response, generating a mix of concerns, criticisms, and analyses. Understanding the public’s reaction is crucial for assessing the broader impact of the incident and for Microsoft to effectively address the situation. Public sentiment often shapes the narrative surrounding such events, influencing regulatory scrutiny and future technological development.

The public response to the data leak was multifaceted, ranging from anxieties about data privacy and security to discussions on the ethical implications of large language models.

Concerns about potential misuse of the leaked data, including the creation of malicious content or the spread of misinformation, were prominent. The public’s perception of Microsoft’s handling of the situation played a key role in shaping overall sentiment.

Public Concerns and Themes

Public concerns revolved around several key themes. The potential for misuse of the leaked data was a significant concern, particularly in relation to malicious content generation and the creation of deepfakes. Concerns regarding the ethical implications of large language models, such as the potential for bias in generated content and the lack of transparency in training data, also emerged.

The potential for harm to individuals and organizations due to misuse of the data was also a prevalent concern.

Public Perception of Microsoft’s Handling

Public perception of Microsoft’s handling of the situation varied. Some praised the company’s initial response, while others criticized its perceived lack of transparency or insufficient communication. The perceived speed and effectiveness of the investigation and the subsequent steps taken to mitigate further damage to data integrity influenced public sentiment.

Social Media Discourse

Social media platforms became a key arena for public discourse surrounding the leak. Discussions ranged from speculation about the source and scope of the leak to debates on the future of AI development. Posts often included accusations of negligence and calls for greater regulatory oversight, while other comments ranged from concern over potential damage to Microsoft’s reputation and market standing to calls for more detailed information on how Microsoft intended to protect its customers from future leaks.

Table: Public Sentiment Analysis (Hypothetical)

| Date | Event | Public Sentiment |
|---|---|---|
| October 26, 2023 | Initial reports of the leak emerge. | Mixed; concerns about data privacy and potential misuse surface on social media. |
| October 27, 2023 | Microsoft issues a statement acknowledging the incident. | Some praise the acknowledgement, others criticize the lack of specific details. |
| October 28, 2023 | Reports of the investigation begin to circulate. | Uncertainty remains about the extent of the damage and the company’s long-term strategy. |
| October 30, 2023 | First news articles appear with various perspectives on the leak. | A range of opinions and reactions are evident, with strong focus on potential misuse. |
| November 1, 2023 | Microsoft releases more details about mitigation efforts. | Increased scrutiny on how Microsoft plans to address future risks and prevent similar incidents. |

Industry Best Practices for Data Security

The recent alleged Microsoft 38TB AI data leak highlights the critical need for robust data security practices in the rapidly evolving AI industry. Maintaining the confidentiality, integrity, and availability of sensitive data is paramount, especially when dealing with vast datasets and complex AI models. This necessitates a proactive and multifaceted approach to security that goes beyond reactive measures.

Proactive security measures are essential to mitigate risks and prevent data breaches.

Implementing these practices can significantly reduce vulnerabilities and improve the overall security posture of AI systems and the data they process. This includes understanding and adhering to industry-standard data security frameworks, and establishing clear protocols for data handling, storage, and access control.

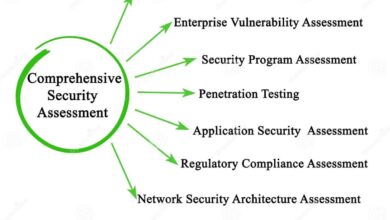

Data Security Frameworks

Numerous frameworks provide guidelines and best practices for data security. These frameworks offer a structured approach to building robust security systems, encompassing policies, procedures, and technical controls. Adherence to these frameworks can drastically reduce the likelihood of data breaches. These standards can also help organizations demonstrate their commitment to data security to stakeholders and regulators.

Examples of Proactive Security Measures

Implementing strong access controls, regularly patching systems, and conducting security assessments are crucial proactive measures. These measures can prevent unauthorized access to sensitive data and maintain the integrity of AI systems. They also include establishing and enforcing clear data handling policies, including data minimization and retention guidelines.


- Robust Access Controls: Implementing multi-factor authentication (MFA) for all user accounts, restricting access to sensitive data based on the principle of least privilege, and regularly reviewing and updating access rights are crucial to prevent unauthorized access. This proactive approach ensures only authorized personnel can access critical data and systems. For instance, a company might use a combination of passwords, security tokens, and biometric authentication for high-value data access.

- Regular Security Assessments: Regularly conducting penetration testing and vulnerability assessments helps identify potential weaknesses in systems and data handling processes. These assessments provide a proactive approach to identify and fix vulnerabilities before malicious actors can exploit them. A thorough security assessment should include both automated scans and manual penetration tests by security experts.

- Data Encryption: Encrypting data both in transit and at rest is a crucial security measure. It ensures that even if data is intercepted or copied, it remains unreadable without the appropriate decryption key. Companies can use industry-standard mechanisms, such as AES for data at rest and TLS for data in transit, to protect sensitive data in databases, in cloud storage, and during transmission.
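Hardware and software security tokens used for multi-factor authentication often generate time-based one-time passwords (TOTP, RFC 6238). The sketch below implements TOTP with Python’s standard library and checks it against the RFC’s published SHA-1 test vector. It illustrates the technique only and does not describe Microsoft’s authentication stack. A production system would also need secure secret provisioning, rate limiting, and protection against code reuse.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(key: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) using HMAC-SHA1."""
    mac = hmac.new(key, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # dynamic truncation offset
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10**digits).zfill(digits)


def totp(key: bytes, at: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP over the current time step."""
    now = time.time() if at is None else at
    return hotp(key, int(now) // step, digits)


def verify_totp(key: bytes, submitted: str, at: Optional[float] = None,
                step: int = 30, digits: int = 6, window: int = 1) -> bool:
    """Accept codes from adjacent time steps to tolerate small clock drift."""
    now = time.time() if at is None else at
    current = int(now) // step
    for counter in range(current - window, current + window + 1):
        # Constant-time comparison avoids leaking how many digits matched.
        if counter >= 0 and hmac.compare_digest(hotp(key, counter, digits), submitted):
            return True
    return False


secret = b"12345678901234567890"  # RFC 6238 test key; real keys must be random
print(totp(secret, at=59, digits=8))         # 94287082 (RFC 6238 test vector)
print(verify_totp(secret, "287082", at=59))  # True
```

The `window` parameter trades convenience for security: accepting one step on either side tolerates about 30 seconds of clock drift but triples the number of valid codes at any moment.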

Industry-Standard Data Security Frameworks

Numerous frameworks are widely recognized for their comprehensive approach to data security. These frameworks often involve multiple components to help organizations manage and mitigate potential risks. Adhering to such frameworks can ensure a robust and adaptable approach to data security.

| Framework | Key Components |
|---|---|
| NIST Cybersecurity Framework | Govern (added in CSF 2.0), Identify, Protect, Detect, Respond, Recover |
| ISO/IEC 27001 | Information security management system (ISMS), risk assessment, security controls |
| SOC 2 | Trust Services Criteria: security, availability, processing integrity, confidentiality, and privacy |
| HIPAA | Privacy Rule, Security Rule (administrative, physical, and technical safeguards), Breach Notification Rule |

How These Measures Could Have Prevented the Alleged Leak

The recent alleged data leak underscores the need for a multi-layered approach to data security. Had the affected organization adhered to best practices such as robust access controls, data encryption, and regular security assessments, the leak might have been prevented or, at the very least, significantly mitigated. These measures help protect sensitive data against unauthorized access and preserve its confidentiality and integrity.

For example, using strong encryption protocols and enforcing strict access controls would have made it significantly harder for unauthorized access to occur.
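Strict access controls usually combine three ideas: deny by default, grant only the actions a task needs, and make every grant expire. The sketch below encodes those rules. The `Grant` type, principal names, and path prefixes are hypothetical and not tied to any cloud provider’s permission model.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Grant:
    """Hypothetical grant: a principal may perform actions under a path prefix until expiry."""
    principal: str
    actions: frozenset[str]
    resource_prefix: str  # end with "/" so "data/" does not also match "data-private/"
    expires_at: datetime


def is_allowed(grants, principal, action, resource, now):
    """Deny by default; allow only when an unexpired grant covers this exact request."""
    return any(
        g.principal == principal
        and action in g.actions
        and resource.startswith(g.resource_prefix)
        and now < g.expires_at
        for g in grants
    )


# Usage: a researcher gets read-only access to one folder for seven days.
now = datetime(2024, 1, 1, tzinfo=timezone.utc)
grants = [Grant("researcher", frozenset({"read"}), "datasets/public/", now + timedelta(days=7))]

print(is_allowed(grants, "researcher", "read", "datasets/public/images.zip", now))    # True
print(is_allowed(grants, "researcher", "write", "datasets/public/images.zip", now))   # False
print(is_allowed(grants, "researcher", "read", "internal/backups/archive.tar", now))  # False
print(is_allowed(grants, "researcher", "read", "datasets/public/images.zip",
                 now + timedelta(days=8)))                                            # False
```

Because the function returns `False` unless a grant explicitly matches, a forgotten or mistyped grant fails closed rather than exposing data.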

Final Wrap-Up

In conclusion, the Microsoft 38TB AI data leak highlights a critical need for enhanced data security measures in the AI sector. The potential damage, both financially and reputationally, is substantial. The public response and Microsoft’s handling of the situation will undoubtedly shape future practices. Learning from this incident is paramount to fostering a more secure and responsible AI ecosystem.

The detailed analysis in this article provides a comprehensive overview of the incident, its impact, and potential preventative measures.

Questions and Answers

What types of data were potentially compromised?

The leaked data may include training data used to train AI models, user data associated with Microsoft services, and research papers related to AI development.

What are the potential legal implications of this leak?

Depending on the types of data compromised and affected regions, legal implications could include violations of data protection regulations like GDPR and CCPA. Penalties for such breaches could be significant.

How can companies prevent similar AI data leaks?

Implementing robust security protocols, including multi-factor authentication, regular security audits, and adherence to industry best practices, can significantly reduce the risk of future data breaches.

What is Microsoft’s response to the leak?

Microsoft’s official statement regarding the leak, along with any subsequent actions taken to rectify the situation, would be essential information to assess their response.