The Evolving Landscape of Software Development: Cognitive Load, AI Systems, and the Future of Code

The rapid proliferation of Large Language Models (LLMs) that can generate large volumes of code is forcing software development teams to re-evaluate how they understand and manage complex systems. The shift has brought the concept of "cognitive debt" to the forefront: a metaphor for the erosion of a team’s collective understanding of how a system works. In response, researchers and industry leaders are proposing new frameworks for analyzing system health and rethinking the fundamental roles within software engineering organizations.

Understanding System Health Through a Multi-Layered Lens

Margaret-Anne Storey, a researcher in software engineering and human-computer interaction, proposes assessing system health through three interconnected layers, an approach aimed particularly at the challenges of AI-generated code. Her work details the layers themselves; the core idea is to give teams a structured method for diagnosing and addressing the different forms of complexity and knowledge loss that can plague software projects. This multi-layered perspective is more nuanced than simply multiplying "debt" metaphors, which can obscure underlying issues rather than clarify them.

The interaction between these layers is crucial. A decline in one area can cascade and negatively impact the others, making proactive management and a holistic understanding essential. The strategies for maintaining system health must therefore be comprehensive, addressing the unique challenges presented by each layer while acknowledging their interdependence. This framework encourages teams to move beyond reactive problem-solving and adopt a more strategic, preventative approach to system maintenance and evolution.

The Emergence of a "Third System" of Cognition: AI and Human Decision-Making

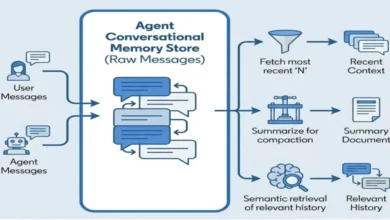

The growing influence of AI in professional workflows, particularly in coding, has prompted a closer look at human cognition itself. A recent paper by Shaw and Nave from the Wharton School extends the two-system model that Nobel laureate Daniel Kahneman popularized in "Thinking, Fast and Slow." In that model, human thinking operates through two distinct systems: System 1, characterized by rapid, intuitive, and often unconscious processing; and System 2, which handles slow, analytical, and resource-intensive reasoning.

Shaw and Nave propose the addition of a "System 3," representing artificial intelligence. This AI system is not merely a tool but an entity that can generate reasoning and solutions, potentially bypassing or influencing human cognitive processes. A key concept arising from this "Tri-System theory of cognition" is "cognitive surrender." This phenomenon is defined as an uncritical reliance on externally generated artificial reasoning, where individuals bypass their own System 2 deliberation. This is distinct from "cognitive offloading," which involves the strategic delegation of tasks to AI during a deliberate thought process.

The implications of System 3 are profound. As AI becomes more integrated into our work, the potential for uncritical acceptance of its outputs increases. This can lead to errors and oversights that might have been caught through human analytical processes. The Wharton researchers have conducted extensive experiments to validate their theory, aiming to understand how the interplay between human cognitive systems and AI influences decision-making and problem-solving in various contexts. Their findings suggest that understanding these cognitive dynamics is paramount for effectively integrating AI into human workflows without compromising critical judgment.

The Visual Language of Code: A Disconnect in Representation

The visual representation of code in technology discourse is also worth noting. Icons and illustrations meant to signify code generation or programming increasingly use angle brackets, "< >", and especially closing tags like "</>", which is a peculiar choice. These symbols are fundamental to markup languages such as HTML and XML, but mainstream programming languages do not typically use them this way to enclose code elements. Most programming languages instead use constructs such as curly braces "{ }" or parentheses "( )" to define blocks of code, scope, or parameters.

This visual choice suggests a perception of programming that leans heavily towards web development or data structuring, potentially overlooking the diverse syntax and paradigms prevalent in software engineering. This disconnect highlights a potential misunderstanding of what "programming" entails for many who are not directly engaged in its day-to-day practice. The symbols used to represent a concept can influence how that concept is understood, and in this case, the visual shorthand might be inadvertently creating a narrower or less accurate perception of the broader field of software development.

The True Cost of AI-Generated Code: Verification as the New Frontier

As LLMs become more adept at generating code, a fundamental question arises: if coding itself becomes increasingly automated, what then becomes the most valuable and "expensive" aspect of software development? Ajey Gore, a prominent figure in the tech industry, argues persuasively that the answer is verification. He posits that while AI agents can generate code, the true challenge lies in defining and ensuring the "correctness" of that code within complex, context-dependent real-world scenarios.

Gore illustrates this with examples from ride-sharing algorithms, where defining "correct" involves balancing multiple, often conflicting, objectives such as earnings fairness, customer wait times, and fleet utilization. These are not simple, universally defined problems but rather intricate, dynamic systems with thousands of shifting, context-dependent definitions of success. These nuanced judgments, Gore argues, are precisely what AI agents currently struggle to perform independently.
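To make the point concrete, here is a minimal Python sketch of a context-dependent definition of "correct." It is not Gore's method: the metric names, the two contexts, and every threshold are assumptions invented for illustration. It only shows that the same dispatch outcome can fail in one context and pass in another.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DispatchOutcome:
    """Observed results of a dispatch policy (hypothetical metrics)."""
    earnings_gini: float        # 0 = even driver earnings, 1 = maximally uneven
    median_wait_minutes: float  # rider wait time
    fleet_utilization: float    # fraction of driver time spent on trips, 0..1


@dataclass(frozen=True)
class Criteria:
    """What 'correct' means in one context; all thresholds are assumed values."""
    max_earnings_gini: float
    max_median_wait_minutes: float
    min_fleet_utilization: float


# The same outcome is judged against different criteria depending on context.
CRITERIA_BY_CONTEXT = {
    "weekday_rush_hour": Criteria(0.35, 6.0, 0.70),
    "late_night": Criteria(0.45, 12.0, 0.40),
}


def failed_criteria(outcome: DispatchOutcome, context: str) -> list[str]:
    """Return the names of the criteria the outcome violates in this context."""
    c = CRITERIA_BY_CONTEXT[context]
    failures = []
    if outcome.earnings_gini > c.max_earnings_gini:
        failures.append("earnings_fairness")
    if outcome.median_wait_minutes > c.max_median_wait_minutes:
        failures.append("wait_time")
    if outcome.fleet_utilization < c.min_fleet_utilization:
        failures.append("fleet_utilization")
    return failures


outcome = DispatchOutcome(earnings_gini=0.40, median_wait_minutes=8.0,
                          fleet_utilization=0.55)
print(failed_criteria(outcome, "weekday_rush_hour"))  # fails all three criteria
print(failed_criteria(outcome, "late_night"))         # fails none
```

Two contexts are easy to write down. The difficulty Gore describes is that real systems have thousands of them, and someone has to decide what each threshold should be.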

This perspective shifts the focus from the act of writing code to the act of validating its fitness for purpose. The implication is a significant reorientation of engineering teams and organizational structures. Instead of prioritizing the volume of code produced, the emphasis must shift to the rigor of verification. This involves designing robust verification systems, meticulously defining quality standards, and developing mechanisms to handle the ambiguous cases that AI cannot resolve.

Gore suggests a radical reorganization of engineering teams. A team that previously consisted of ten engineers focused on feature development might transform into a structure with fewer engineers responsible for overall design and complex problem-solving, augmented by a larger contingent dedicated to defining acceptance criteria, building test harnesses, and monitoring outcomes. The traditional stand-up meeting, which might have focused on "what did we ship?", would evolve to "what did we validate?". This fundamental shift demotes the act of "building" and elevates the act of "judging" and "validating." While potentially uncomfortable for established engineering cultures, Gore asserts that organizations that embrace this reorientation will ultimately gain a competitive advantage.

The Future of Source Code in the Age of AI

The rise of AI as a code generator inevitably sparks debate about the future of source code itself. David Cassel, writing for The New Stack, summarizes various perspectives on this evolving landscape. One emerging trend involves the development of entirely new programming languages specifically designed to be more amenable to LLM interpretation and generation. These languages may incorporate different syntactical structures or semantic designs that facilitate more effective interaction with AI models.

Conversely, a strong argument exists for the continued relevance of existing, strictly typed languages such as TypeScript and Rust. With their explicit type systems, these languages may give LLMs a more stable and predictable environment to operate within. The clarity and reduced ambiguity they offer can potentially mitigate some of the risks associated with AI-generated code, making them strong candidates for future development workflows.

This ongoing discussion highlights the uncertainty and rapid evolution occurring within the software development community. The outcome will likely involve a hybrid approach, where new language designs and innovative uses of existing ones coexist, all shaped by the capabilities and limitations of AI.

Weaving Abstractions: The Enduring Human Role in Software Design

Despite the increasing capabilities of LLMs, a vital role for human developers remains in the creation of meaningful abstractions and the establishment of a shared understanding of what code accomplishes. This aligns with the Domain-Driven Design (DDD) concept of a "Ubiquitous Language," a common vocabulary that bridges the gap between technical implementation and business understanding.

Last year, a conversation between Unmesh and the author explored the idea of "growing a language" with LLMs. Unmesh articulated this crucial human contribution eloquently: "Programming isn’t just typing coding syntax that computers can understand and execute; it’s shaping a solution. We slice the problem into focused pieces, bind related data and behaviour together, and—crucially—choose names that expose intent. Good names cut through complexity and turn code into a schematic everyone can follow. The most creative act is this continual weaving of names that reveal the structure of the solution that maps clearly to the problem we are trying to solve."
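A small Python sketch can illustrate what that quotation describes. The order-pricing domain, the names, and the 10% discount are all invented for this example; nothing here comes from the conversation itself. Both versions compute the same number, but only the second binds data and behaviour together and uses names that expose intent.

```python
from dataclasses import dataclass


# Opaque version: correct, but the reader must reverse-engineer the intent.
def calc(a: int, b: int, c: bool) -> int:
    return a * b * (90 if c else 100) // 100


# Same computation expressed in the vocabulary of a (hypothetical) domain.
LOYALTY_DISCOUNT_PERCENT = 10  # assumed rate, for illustration


@dataclass(frozen=True)
class OrderLine:
    unit_price_cents: int
    quantity: int
    placed_by_loyalty_member: bool

    def subtotal_cents(self) -> int:
        return self.unit_price_cents * self.quantity

    def total_after_loyalty_discount_cents(self) -> int:
        if self.placed_by_loyalty_member:
            return self.subtotal_cents() * (100 - LOYALTY_DISCOUNT_PERCENT) // 100
        return self.subtotal_cents()


line = OrderLine(unit_price_cents=2000, quantity=3, placed_by_loyalty_member=True)
print(calc(2000, 3, True))                        # 5400
print(line.total_after_loyalty_discount_cents())  # 5400
```

An LLM could produce either version. Choosing the vocabulary of the second one, and agreeing with the business that "loyalty member" is the right term, is the part the quotation treats as the creative act.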

This perspective underscores that the core of software development transcends mere code generation. It involves the intellectual labor of modeling complex problems, devising elegant solutions, and, critically, communicating the essence of those solutions in a way that is understandable to both humans and, increasingly, to AI systems. The ability to define and refine these abstractions, to imbue code with clear intent through thoughtful naming and structural design, remains a uniquely human capability and a cornerstone of effective software engineering. The integration of LLMs will likely amplify, rather than diminish, the importance of this human-driven abstraction layer, ensuring that the generated code serves a well-defined purpose and remains comprehensible within its intended domain. The challenge ahead lies in forging a synergistic relationship between human ingenuity and artificial intelligence, where each complements the other’s strengths to build more robust, understandable, and valuable software systems.