The Shift from FLOPS to Tokens: Redefining the Economics of AI Infrastructure in the Blackwell Era

The global data center landscape is undergoing its most significant structural transformation since the dawn of the internet, transitioning from passive repositories of stored information into active manufacturing hubs for artificial intelligence. In this new era of generative and agentic AI, traditional facilities that once focused solely on data retrieval and processing have evolved into "AI token factories," where the primary output is no longer just bits and bytes, but intelligence manufactured in the form of tokens. This fundamental shift in utility necessitates a total reimagining of how enterprises assess the economics of AI infrastructure, moving away from legacy hardware metrics toward a more holistic understanding of total cost of ownership (TCO) centered on the "cost per token."

For decades, the standard for evaluating computational power was defined by input-focused metrics: peak chip specifications, the cost of compute per hour, or floating-point operations per second (FLOPS) for every dollar spent. However, as AI inference becomes the dominant workload for modern enterprises, these metrics are proving to be increasingly inadequate. Optimizing for inputs while the business runs on output creates a fundamental mismatch in financial modeling. The industry is now recognizing that cost per token is the definitive metric for scalability and profitability, as it directly accounts for the convergence of hardware performance, software optimization, ecosystem support, and real-world utilization.

The Inference Iceberg: Decoding the Economics of AI

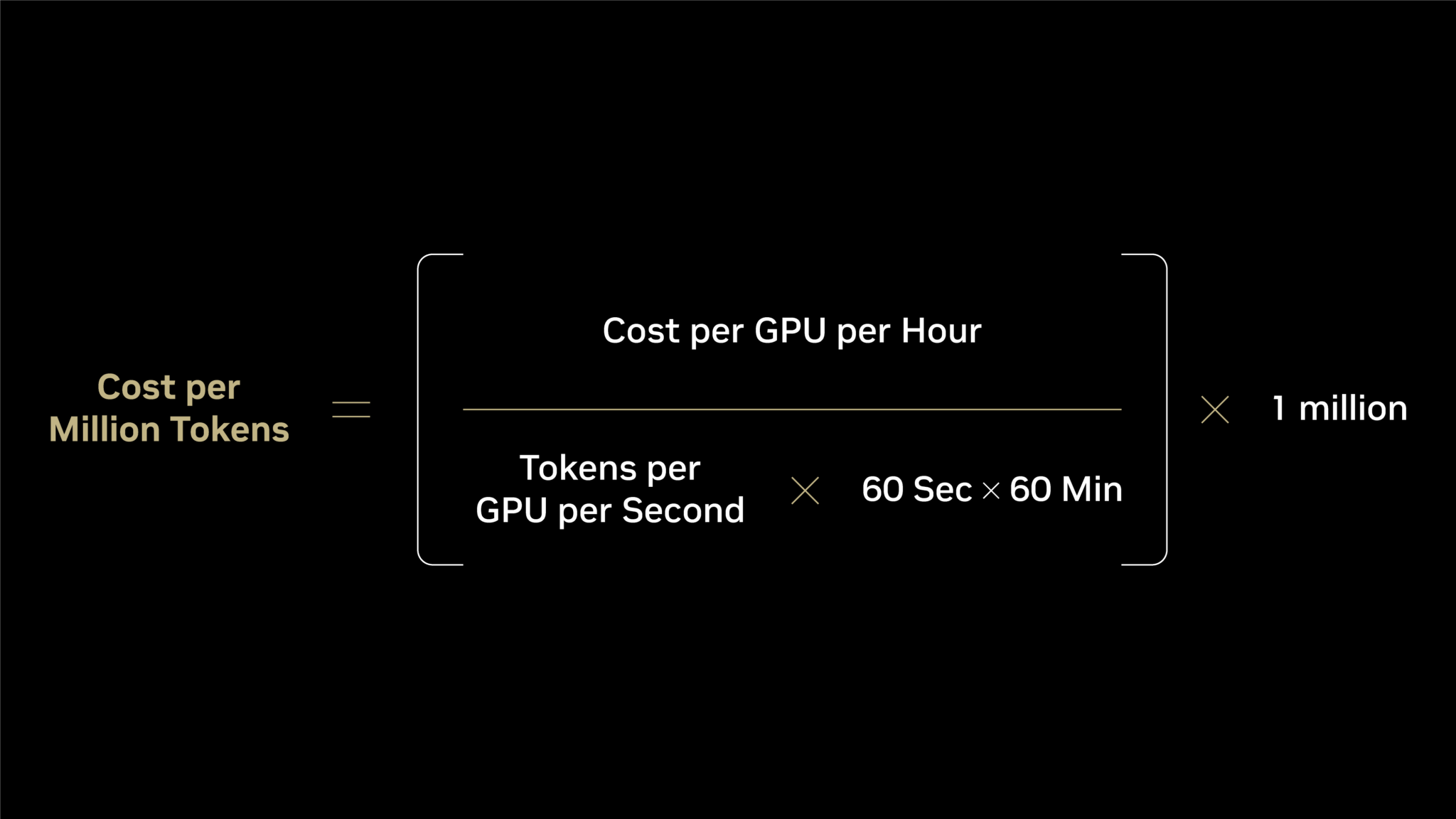

To understand the shift in AI economics, one must look at the arithmetic of inference. The cost per million tokens is the cost per GPU per hour divided by the tokens that GPU delivers in an hour (its tokens per second multiplied by 3,600), then multiplied by one million. In this equation, the "numerator" (the cost per GPU per hour) is what most enterprises fixate upon. In cloud environments, this is the hourly rental rate; in on-premises deployments, it is the amortized cost of the hardware plus electricity.
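The calculation described above can be sketched in a few lines of Python. This is a minimal illustration, not a pricing model: the function name and the example inputs ($2.00 per GPU-hour, 500 tokens per second) are hypothetical and chosen only to make the arithmetic easy to follow.

```python
SECONDS_PER_HOUR = 3600


def cost_per_million_tokens(gpu_cost_per_hour: float,
                            tokens_per_gpu_per_second: float) -> float:
    """Dollars per one million tokens produced by a single GPU."""
    if tokens_per_gpu_per_second <= 0:
        raise ValueError("throughput must be positive")
    # Denominator: tokens the GPU actually delivers in one hour.
    tokens_per_hour = tokens_per_gpu_per_second * SECONDS_PER_HOUR
    # Numerator: what that hour costs. Scale the result to one million tokens.
    return gpu_cost_per_hour / tokens_per_hour * 1_000_000


# Hypothetical inputs, for illustration only.
example = cost_per_million_tokens(gpu_cost_per_hour=2.00,
                                  tokens_per_gpu_per_second=500)
print(f"${example:.2f} per million tokens")  # prints: $1.11 per million tokens
```

Because throughput sits in the denominator, doubling tokens per second halves the cost per token at the same hourly price.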

However, industry experts often describe this equation as the "inference iceberg." The numerator is only the visible tip above the water. Beneath the surface lies the "denominator": the actual token output. The denominator is driven by a complex interplay of factors, chief among them extreme codesign across compute, networking, memory, storage, and software, and it determines whether an AI investment remains a cost center or becomes a revenue generator. If the denominator is low, even a "cheap" GPU results in a prohibitively high cost per token, making it impossible for an enterprise to scale AI services profitably.
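To make the iceberg concrete, the sketch below compares two invented deployments: a "bargain" GPU with a low hourly price and weak sustained throughput, and a "premium" GPU that costs three times as much per hour but delivers far more tokens. Every number here, and the `utilization` field itself, is a hypothetical assumption used only to show how the denominator can dominate the result.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    name: str
    cost_per_gpu_hour: float       # numerator: the visible hourly price (USD)
    peak_tokens_per_second: float  # best-case throughput per GPU
    utilization: float             # fraction of peak sustained in practice (0..1)

    def cost_per_million_tokens(self) -> float:
        # Denominator: tokens actually delivered, not the spec-sheet peak.
        effective_tps = self.peak_tokens_per_second * self.utilization
        return self.cost_per_gpu_hour / (effective_tps * 3600) * 1_000_000


# Hypothetical deployments, for illustration only.
bargain = Deployment("bargain", cost_per_gpu_hour=1.00,
                     peak_tokens_per_second=100, utilization=0.5)
premium = Deployment("premium", cost_per_gpu_hour=3.00,
                     peak_tokens_per_second=2000, utilization=0.8)

for d in (bargain, premium):
    print(f"{d.name}: ${d.cost_per_million_tokens():.2f} per million tokens")
```

Under these assumed numbers, the deployment with the lower hourly price ends up roughly ten times more expensive per token.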

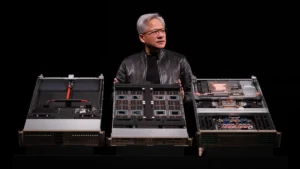

Comparative Analysis: The Leap from Hopper to Blackwell

The practical implications of this economic shift are most visible when comparing different generations of AI hardware through the lens of modern models, such as the DeepSeek-R1 reasoning model. A technical analysis reveals a massive divergence between theoretical compute costs and actual business outcomes.

When evaluating the NVIDIA Hopper (HGX H200) architecture against the newer NVIDIA Blackwell (GB300 NVL72) platform, the raw "input" costs suggest a moderate increase. The cost per GPU per hour for Blackwell is approximately double that of Hopper. On a FLOPS-per-dollar basis, Blackwell provides a 2x advantage. Under traditional metrics, this would seem like an incremental improvement.

However, when the focus shifts to the "output" metric, tokens, the results are transformative. Blackwell delivers approximately 6,000 tokens per second per GPU, compared to Hopper’s 90 tokens per second per GPU, a roughly 65x increase in throughput. Energy efficiency follows the same pattern: Blackwell produces 2.8 million tokens per second per megawatt, a roughly 50x leap over Hopper’s 54,000. Consequently, the cost per million tokens on Blackwell drops to just $0.12, compared to $4.20 on Hopper. This 35x reduction in cost per token shows that a higher hourly price can yield a far lower cost per unit of output and better scalability.
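The quoted figures can be cross-checked with simple arithmetic. The sketch below uses only the numbers given above. The hourly GPU costs it prints are not stated in the article; they are back-calculated from the cost per million tokens and the throughput, so treat them as implied values rather than published prices.

```python
# Figures quoted in the comparison above (rounded in the source).
hopper = {"tokens_per_s_per_gpu": 90,
          "tokens_per_s_per_mw": 54_000,
          "usd_per_million_tokens": 4.20}
blackwell = {"tokens_per_s_per_gpu": 6_000,
             "tokens_per_s_per_mw": 2_800_000,
             "usd_per_million_tokens": 0.12}


def implied_gpu_hour_cost(platform: dict) -> float:
    """Back out the hourly GPU cost implied by cost per token and throughput."""
    tokens_per_hour = platform["tokens_per_s_per_gpu"] * 3600
    return platform["usd_per_million_tokens"] * tokens_per_hour / 1_000_000


throughput_ratio = blackwell["tokens_per_s_per_gpu"] / hopper["tokens_per_s_per_gpu"]
efficiency_ratio = blackwell["tokens_per_s_per_mw"] / hopper["tokens_per_s_per_mw"]
cost_ratio = hopper["usd_per_million_tokens"] / blackwell["usd_per_million_tokens"]
hourly_ratio = implied_gpu_hour_cost(blackwell) / implied_gpu_hour_cost(hopper)

print(f"throughput per GPU:      {throughput_ratio:.1f}x")
print(f"tokens per megawatt:     {efficiency_ratio:.1f}x")
print(f"cost per million tokens: {cost_ratio:.1f}x lower")
print(f"implied hourly cost:     {hourly_ratio:.2f}x higher")
```

The computed ratios land close to the rounded headline figures of 65x and 50x, and the implied hourly cost ratio of about 1.9x is consistent with the statement that Blackwell costs approximately double per GPU-hour.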

A Chronology of AI Infrastructure Evolution

The journey to the current "token factory" model has been decades in the making, characterized by several key milestones in computational history:

- The Pre-AI Era (1990s–2012): Data centers were primarily designed for CPU-heavy workloads, focusing on database management and web hosting. Performance gains tracked Moore’s Law, and systems were compared largely on CPU clock speed.

- The Deep Learning Breakthrough (2012–2017): The emergence of AlexNet and the subsequent explosion of neural networks shifted focus toward GPUs. This era prioritized training speed, with FLOPS becoming the dominant metric.

- The Transformer Revolution (2017–2022): With the introduction of the Transformer architecture, models began to scale to billions of parameters. Large Language Models (LLMs) moved from research labs to early commercial applications.

- The Generative AI Explosion (2023–Present): The launch of ChatGPT and subsequent enterprise-grade models shifted the focus from training to inference. As millions of users began interacting with AI daily, the efficiency of token generation became the primary economic concern.

- The Era of Reasoning Models (2024 and Beyond): Models like DeepSeek-R1 and OpenAI’s o1 series require more "thinking" time and generate more tokens per query. This has cemented the "cost per token" as the only viable metric for long-term sustainability.

The Role of Full-Stack Codesign

Blackwell and similar advanced platforms achieve such dramatic reductions in token cost not through raw silicon power alone, but through "extreme codesign." This approach integrates every layer of the technology stack to prevent the denominator of the inference equation from collapsing.

Software optimization plays a critical role. Open-source inference engines and libraries such as vLLM, SGLang, and NVIDIA TensorRT-LLM extract maximum LLM performance from the hardware. By optimizing how tokens are sequenced and processed through the memory hierarchy, these software layers keep the hardware from sitting idle. Furthermore, networking technologies like InfiniBand and Spectrum-X Ethernet are essential for multi-GPU inference, ensuring that data moves between chips with minimal latency. Without this level of integration, the "token factory" would face bottlenecks that drive up the cost per token regardless of the chip’s theoretical speed.

Industry Adoption and Market Reactions

The shift toward token-based economics is already being reflected in the strategies of major cloud service providers and AI startups. Leading partners, including CoreWeave, Nebius, Nscale, and Together AI, have moved aggressively to deploy Blackwell infrastructure. These companies are not merely renting out GPUs; they are offering optimized stacks designed to provide the lowest possible token cost to their customers.

Market analysts suggest that this shift will lead to a consolidation of the AI infrastructure market. Enterprises are beginning to realize that "bargain" hardware often carries hidden costs in the form of poor software support and lower throughput. As one industry analyst from SemiAnalysis noted, "The market is moving past the ‘GPU-poor’ versus ‘GPU-rich’ phase and into the efficiency phase. It is no longer about how many GPUs you have, but how many tokens you can serve for every dollar of electricity and capital expenditure."

Broader Impact and Strategic Implications

The transition to a token-centric economy has profound implications for the global energy grid and corporate sustainability goals. Because Blackwell and future architectures deliver significantly more tokens per watt, they allow enterprises to expand their AI capabilities without a linear increase in power consumption. This "tokens-per-watt" metric is becoming as important to Chief Sustainability Officers as "cost per token" is to Chief Financial Officers.
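The tokens-per-watt argument can be illustrated with the efficiency figures quoted earlier in this article (54,000 tokens per second per megawatt for Hopper and 2.8 million for Blackwell). The target of one million tokens per second is a hypothetical workload chosen for illustration, and the sketch assumes efficiency stays constant as the deployment scales, which real facilities may not achieve.

```python
# Tokens per second per megawatt, as quoted in the Hopper/Blackwell comparison.
TOKENS_PER_S_PER_MW = {
    "Hopper (HGX H200)": 54_000,
    "Blackwell (GB300 NVL72)": 2_800_000,
}


def megawatts_required(target_tokens_per_second: float,
                       tokens_per_s_per_mw: float) -> float:
    """Power needed to sustain a token rate, assuming constant efficiency."""
    return target_tokens_per_second / tokens_per_s_per_mw


target = 1_000_000  # hypothetical service-wide demand, tokens per second

power = {name: megawatts_required(target, eff)
         for name, eff in TOKENS_PER_S_PER_MW.items()}

for name, mw in power.items():
    print(f"{name}: {mw:.2f} MW for {target:,} tokens/s")
```

Under these assumptions the same token output needs about 18.5 MW on Hopper and under 0.4 MW on Blackwell, which is why power efficiency and token cost tend to be discussed together.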

Furthermore, the continuous optimization of the AI software ecosystem means that infrastructure acquired today will likely become more efficient over time. Unlike traditional hardware that depreciates in value and utility, NVIDIA-based infrastructure often sees its token output increase as new versions of TensorRT-LLM or vLLM are released. This creates a unique economic scenario where the cost per token continues to decline long after the initial capital investment.
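A small sketch shows why software-driven throughput gains translate directly into a falling cost per token on hardware that is already paid for. The hourly cost, the baseline throughput, and the per-release gains below are all hypothetical; actual improvements from any given software release will vary by model and workload.

```python
def cost_per_million_tokens(cost_per_gpu_hour: float,
                            tokens_per_second: float) -> float:
    return cost_per_gpu_hour / (tokens_per_second * 3600) * 1_000_000


hourly_cost = 2.50    # hypothetical, fixed once the hardware is deployed
throughput = 1000.0   # hypothetical tokens/s per GPU at launch
# Hypothetical fractional throughput gain from each successive software release.
release_gains = [0.0, 0.20, 0.15, 0.10]

history = []
for gain in release_gains:
    throughput *= 1 + gain  # same hardware, more tokens per second
    history.append(cost_per_million_tokens(hourly_cost, throughput))

for release, cost in enumerate(history):
    print(f"release {release}: ${cost:.3f} per million tokens")
```

With the numerator held fixed, each gain in the denominator lowers the cost per token, so the cumulative effect of several modest releases compounds.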

For enterprises, the strategic takeaway is clear: evaluating AI infrastructure based on the price of the chip or the hourly rental rate is a legacy approach that risks long-term unprofitability. To thrive in the generative AI era, businesses must evaluate their "token factories" based on the total output of intelligence. The goal is no longer just to compute; it is to manufacture intelligence at a scale and cost that allows for the widespread deployment of agentic AI across every facet of the global economy. As the data from the Blackwell transition proves, the future of AI belongs to those who can master the denominator of the inference equation.