Hands-On Guide to Testing Agents with RAGAs and G-Eval

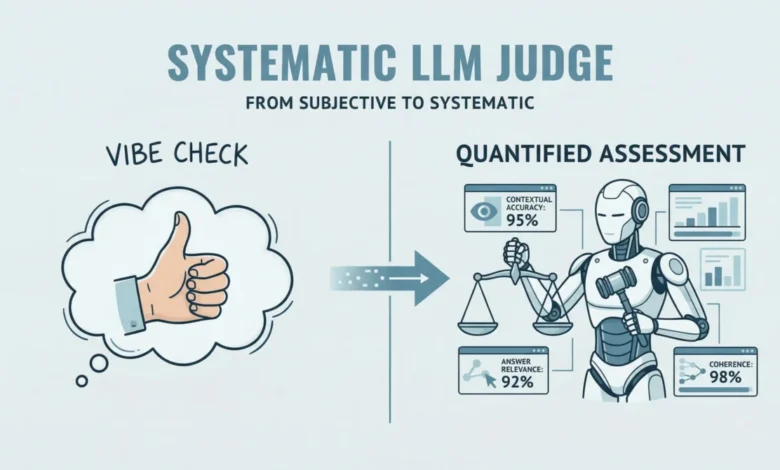

The rapid integration of Large Language Models (LLMs) into enterprise workflows has catalyzed a shift from experimental prototypes to production-ready autonomous agents. However, as these systems move into critical business functions, the industry faces a significant hurdle: the "vibe check" problem. Historically, developers relied on subjective, manual inspections to determine if an AI’s response was accurate or helpful. Today, the emergence of structured evaluation frameworks like RAGAs (Retrieval-Augmented Generation Assessment) and G-Eval is transforming how engineers quantify performance, ensuring that agentic systems are both reliable and grounded in fact.

The Evolution of LLM Evaluation Frameworks

The journey toward sophisticated AI evaluation began with the rise of Retrieval-Augmented Generation (RAG). By 2023, RAG had become a standard architecture for grounding LLMs in private or real-time data, reducing hallucinations by providing the model with specific context. Yet, even with RAG, measuring the quality of the "retrieval" and the "generation" remained difficult. Traditional NLP metrics like ROUGE or BLEU, which score word overlap between a generated text and a reference text, proved insufficient for capturing the semantic nuances of modern AI responses.

In response, the research community introduced the "LLM-as-a-Judge" paradigm. This approach uses a capable model (such as GPT-4) to evaluate the outputs of another model against specific rubrics. RAGAs and G-Eval are two of the best-known results of this evolution. RAGAs focuses on RAG-specific metrics such as faithfulness, answer relevancy, and context precision, while G-Eval provides a flexible framework for qualitative assessments such as coherence, clarity, and tone.

Understanding the RAGAs Methodology

RAGAs is an open-source framework designed to evaluate RAG pipelines without the need for extensive human-annotated ground truth datasets. It operates on the principle that a high-quality RAG system must excel in three distinct areas.

First is Faithfulness, which measures the extent to which the generated answer is derived solely from the retrieved context. If an agent provides information not present in the source documents, the faithfulness score drops, signaling a potential hallucination. Second is Answer Relevancy, which assesses how well the response addresses the original user query. A response might be factually correct based on the context but fail to answer the user’s specific question. Finally, Context Precision evaluates the quality of the retrieval engine itself, ensuring that the most relevant information is ranked highest in the provided context.
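The arithmetic behind two of these scores can be sketched in a few lines. This is a simplified illustration, not the ragas implementation: it assumes an LLM judge has already decided which answer claims are supported by the context and which retrieved chunks are relevant, and the function names are hypothetical.

```python
from typing import Sequence


def faithfulness_score(claim_supported: Sequence[bool]) -> float:
    """Fraction of the answer's claims that the retrieved context supports."""
    if not claim_supported:
        return 0.0
    return sum(claim_supported) / len(claim_supported)


def context_precision_score(chunk_relevant: Sequence[bool]) -> float:
    """Average of precision@k, taken at each rank k that holds a relevant chunk."""
    hits = 0
    precision_sum = 0.0
    for rank, relevant in enumerate(chunk_relevant, start=1):
        if relevant:
            hits += 1
            precision_sum += hits / rank
    return precision_sum / hits if hits else 0.0


# Three of the four claims in the answer are backed by the context.
print(faithfulness_score([True, True, False, True]))            # 0.75

# The same two relevant chunks, ranked at the top versus at the bottom.
print(context_precision_score([True, True, False]))             # 1.0
print(round(context_precision_score([False, True, True]), 3))   # 0.583
```

The last two lines show why ranking matters: the retriever found the same relevant chunks in both cases, but the score falls when an irrelevant chunk is ranked first.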

The transition from simple RAG to agentic workflows has expanded the scope of RAGAs. Agents do not merely retrieve and summarize; they reason, plan, and execute tools. Consequently, evaluation must now account for the logic of the "agentic loop," necessitating a combination of RAGAs with more flexible qualitative frameworks like G-Eval.

Chronology of AI Testing Standards

The timeline of AI evaluation has moved at a breakneck pace over the last twenty-four months. In early 2023, the primary concern for developers was model latency and token cost. By mid-2023, as RAG became ubiquitous, the focus shifted to "grounding"—ensuring the model didn’t invent facts. This led to the release of RAGAs by the Exploding Gradients team.

By late 2023 and early 2024, the industry recognized that "accuracy" was not the only metric that mattered. Enterprises required agents that were professional, coherent, and followed specific brand guidelines. This drove adoption of G-Eval, a method first described in a 2023 research paper that uses Chain-of-Thought (CoT) reasoning to score model outputs against human-defined criteria. Today, platforms like DeepEval package these metrics into unified testing sandboxes that can run inside continuous integration and continuous deployment (CI/CD) pipelines for AI agents.

Implementation: A Practical Workflow for AI Engineers

To implement a robust evaluation pipeline, developers typically follow a structured technical workflow. This involves setting up an environment capable of simulating agent interactions and then applying both quantitative and qualitative metrics.

The process begins with the creation of a "mock" agent. In a production environment, this agent would be connected to a vector database (like Pinecone or Milvus) and a set of tools (like a web search or a SQL executor). For testing purposes, a simplified version is used to generate responses to a set of standardized queries.
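A mock agent of this kind can be very small. The sketch below is one possible shape, assuming an in-memory list of documents in place of a vector database and simple word overlap in place of embedding search. The documents, `retrieve`, and `mock_agent` are all made up for illustration, and no LLM is called.

```python
import re
from dataclasses import dataclass

# Hypothetical knowledge base standing in for a vector database.
DOCUMENTS = [
    "To reset your password, open Settings, choose Security, and click Reset Password.",
    "Password reset links expire after 24 hours.",
    "Expense reports must be submitted by the fifth business day of each month.",
]


@dataclass
class AgentResult:
    question: str
    contexts: list[str]
    answer: str


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, top_k: int = 2) -> list[str]:
    """Rank documents by word overlap with the question (stand-in for vector search)."""
    query_words = tokenize(question)
    scored = [(len(query_words & tokenize(doc)), doc) for doc in DOCUMENTS]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [doc for overlap, doc in ranked[:top_k] if overlap > 0]


def mock_agent(question: str) -> AgentResult:
    """Build the answer only from retrieved chunks, so it is grounded by construction."""
    contexts = retrieve(question)
    answer = " ".join(contexts) if contexts else "I could not find that in the documentation."
    return AgentResult(question=question, contexts=contexts, answer=answer)


result = mock_agent("How do I reset my password?")
print(result.contexts)
```

Because every response carries its question, contexts, and answer together, the results can be passed directly to an evaluation step.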

Once the agent generates a response, the data is structured into a format compatible with evaluation libraries. Using the Hugging Face datasets library is a common industry practice, as its Dataset class handles large collections of test cases efficiently. The RAGAs framework then processes these test cases, examining the "question," the retrieved "contexts," and the generated "answer."
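The structuring step might look like the sketch below. The helper names `to_ragas_columns` and `run_ragas` are hypothetical. The column names follow the question/contexts/answer layout described above, which matches earlier ragas releases; newer releases may expect different names, and some metrics (context precision among them, depending on the version) also need a reference answer. Check this against the installed version before relying on it.

```python
def to_ragas_columns(records: list[dict]) -> dict[str, list]:
    """Turn per-interaction records into one list per column."""
    columns = {"question": [], "contexts": [], "answer": []}
    for record in records:
        if not isinstance(record["contexts"], list):
            raise TypeError("contexts must be a list of strings, one per retrieved chunk")
        for name in columns:
            columns[name].append(record[name])
    return columns


def run_ragas(columns: dict[str, list]):
    """Score the columns with RAGAs. Requires the ragas and datasets packages
    plus credentials for the judge LLM, so it is not called in this sketch."""
    # Imported lazily so the data-shaping code above runs without these packages.
    from datasets import Dataset
    from ragas import evaluate
    from ragas.metrics import answer_relevancy, faithfulness

    dataset = Dataset.from_dict(columns)
    return evaluate(dataset, metrics=[faithfulness, answer_relevancy])


# Illustrative record; in practice these come from the agent under test.
records = [
    {
        "question": "How do I reset my password?",
        "contexts": ["Open Settings, choose Security, and click Reset Password."],
        "answer": "Go to Settings, then Security, and click Reset Password.",
    }
]
columns = to_ragas_columns(records)
print(columns["question"])
```

Keeping the data shaping separate from the scoring call means the test set can be validated offline, with the judge model needed only for the final `run_ragas` step.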

For example, in a scenario where a user asks about a company’s password reset policy, RAGAs will verify if the agent’s instructions match the internal security documentation provided in the context. If the agent suggests a step not found in the documents, the "faithfulness" score is penalized.

Qualitative Assessment with G-Eval and DeepEval

While RAGAs handles factual grounding, G-Eval addresses the "soft" metrics of communication. Using DeepEval, developers can define custom metrics such as "Coherence" or "Professionalism."

G-Eval works by giving the "judge" LLM a prompt that contains the evaluation criteria and a scoring rubric (1 to 10 is a common choice; the original G-Eval paper used 1 to 5 for most dimensions, and DeepEval reports a normalized score between 0 and 1). The judge model then outputs a score and, crucially, the reasoning behind that score. This reasoning is vital for developers: it turns a numerical value into actionable feedback, allowing engineers to refine system prompts or retrieval strategies.
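The mechanism can be illustrated without any evaluation library. The sketch below is a simplification: it uses a 1 to 10 rubric, takes the judge as a plain callable so a stub can stand in for a real LLM, and omits the probability-weighted scoring of the original G-Eval method. All names are hypothetical.

```python
import json
from typing import Callable


def build_judge_prompt(criteria: str, question: str, answer: str) -> str:
    """Assemble the judge prompt: criteria, rubric, output format, then the case."""
    return "\n".join([
        "You are grading an AI assistant's response.",
        f"Criteria: {criteria}",
        "Rubric: score from 1 (fails the criteria) to 10 (fully meets them).",
        "Think through the criteria step by step, then reply with JSON only,",
        'in the form {"score": <integer>, "reason": "<one sentence>"}.',
        "",
        f"Question: {question}",
        f"Response: {answer}",
    ])


def judge_response(judge: Callable[[str], str], criteria: str,
                   question: str, answer: str) -> dict:
    """Send the prompt to the judge and validate the score and reason it returns."""
    verdict = json.loads(judge(build_judge_prompt(criteria, question, answer)))
    score = int(verdict["score"])
    if not 1 <= score <= 10:
        raise ValueError(f"score {score} is outside the 1-10 rubric")
    return {"score": score, "reason": str(verdict["reason"])}


def stub_judge(prompt: str) -> str:
    """Stand-in for a real LLM call so the sketch runs offline."""
    return json.dumps({
        "score": 4,
        "reason": "The output mentions the final step before the initial setup.",
    })


verdict = judge_response(
    stub_judge,
    criteria="The steps are presented in a logical order.",
    question="How do I reset my password?",
    answer="Click Reset Password. Before that, open Settings and choose Security.",
)
print(verdict["score"], verdict["reason"])
```

Replacing `stub_judge` with a function that calls a real model is the only change needed to run this against live responses.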

For instance, a G-Eval test case might look for "logical structure." If an agent provides a correct answer but presents the steps in a disorganized fashion, G-Eval will identify the lack of coherence, providing a justification such as: "The output mentions the final step before the initial setup, making it difficult for the user to follow."
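With DeepEval, a "logical structure" check along these lines might be declared as follows. Treat this as a sketch: the criteria wording and the 0.5 threshold are illustrative choices, the class and parameter names reflect DeepEval's documented `GEval` metric at the time of writing and may differ in other versions, and running it requires the deepeval package plus credentials for the judge model.

```python
# Illustrative criteria text; adjust the wording to the behaviour being tested.
COHERENCE_CRITERIA = (
    "Determine whether the actual output presents its steps in a logical order "
    "that a user could follow from initial setup to final step."
)


def build_coherence_metric(threshold: float = 0.5):
    """Create a G-Eval metric that judges the output against the criteria above."""
    # Imported lazily so this module loads even where deepeval is not installed.
    from deepeval.metrics import GEval
    from deepeval.test_case import LLMTestCaseParams

    return GEval(
        name="Coherence",
        criteria=COHERENCE_CRITERIA,
        evaluation_params=[LLMTestCaseParams.INPUT, LLMTestCaseParams.ACTUAL_OUTPUT],
        threshold=threshold,
    )


def score_coherence(question: str, answer: str) -> tuple[float, str]:
    """Run the judge on one interaction and return its score and stated reason."""
    from deepeval.test_case import LLMTestCase

    metric = build_coherence_metric()
    metric.measure(LLMTestCase(input=question, actual_output=answer))
    return metric.score, metric.reason
```

Calling `score_coherence(question, answer)` returns the numerical score together with the judge's justification, which is the part engineers usually act on.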

Supporting Data: The Impact of Automated Evaluation

Industry data suggests that the move toward automated evaluation is not just a trend but a necessity for scaling. According to recent benchmarks in AI observability, manual evaluation of LLM outputs can take a human reviewer between 2 and 5 minutes per response. In contrast, an automated framework like RAGAs can evaluate thousands of responses in the time it takes to run a standard software build.

Furthermore, research into "hallucination rates" shows that RAG systems without structured evaluation often suffer from a 15-25% hallucination rate in complex reasoning tasks. By implementing RAGAs-based testing, development teams have reported reducing these rates to below 5% by identifying and fixing weaknesses in the retrieval chain or the system prompt.

Industry Reactions and Expert Perspectives

The shift toward frameworks like RAGAs and DeepEval has drawn significant attention from the AI engineering community. Leading practitioners argue that these tools are to AI systems what unit testing is to traditional software.

"In traditional software, we test for expected inputs and outputs. In AI, the outputs are probabilistic," says one senior AI architect. "RAGAs gives us a way to apply engineering rigor to a non-deterministic system. It’s the difference between hoping your model works and knowing it does."

However, some experts caution against over-reliance on "LLM-as-a-Judge." There is a known risk of self-preference bias, where a judge model favors responses that resemble its own writing style. To mitigate this, the industry is increasingly moving toward a "hybrid evaluation" model, combining automated scores with periodic human "spot checks" to confirm that the judge model stays aligned with human judgment.
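One way to put such a hybrid process into practice is to sample a share of judged cases for human review and track how often the reviewer agrees with the judge. The sketch below assumes simple pass/fail verdicts keyed by case ID; the function names, the 10% sampling fraction, and the sample data are all illustrative.

```python
import random


def pick_spot_checks(case_ids: list[str], fraction: float, seed: int = 0) -> list[str]:
    """Randomly choose a share of judged cases for human review (seeded for repeatability)."""
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    sample_size = min(len(case_ids), max(1, round(len(case_ids) * fraction)))
    return random.Random(seed).sample(case_ids, sample_size)


def agreement_rate(judge_passed: dict[str, bool], human_passed: dict[str, bool]) -> float:
    """Share of human-reviewed cases where the judge reached the same pass/fail verdict."""
    reviewed = [case_id for case_id in human_passed if case_id in judge_passed]
    if not reviewed:
        raise ValueError("no overlapping cases to compare")
    matches = sum(judge_passed[case_id] == human_passed[case_id] for case_id in reviewed)
    return matches / len(reviewed)


case_ids = [f"case-{n}" for n in range(20)]
to_review = pick_spot_checks(case_ids, fraction=0.1)
print(len(to_review))  # 2

# Made-up verdicts: the human reviewed two cases and disagreed with the judge on one.
judge_verdicts = {"case-0": True, "case-1": True, "case-2": False, "case-3": True}
human_verdicts = {"case-0": True, "case-2": True}
print(agreement_rate(judge_verdicts, human_verdicts))  # 0.5
```

A falling agreement rate over time is a signal to revisit the judge prompt or the judge model itself.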

Broader Impact and Future Implications

The implications of standardized LLM testing extend beyond technical performance. As global regulations like the EU AI Act take shape, the ability to document an AI system’s accuracy and bias, for example in a "transparency report," may become a legal requirement for many companies. Frameworks that provide documented scores for faithfulness and relevancy are likely to be an important part of demonstrating regulatory compliance.

Moreover, as we move toward "Agentic AI"—where models take actions on behalf of users, such as booking flights or managing financial portfolios—the stakes of failure increase. In these scenarios, evaluation is not just about quality; it is about safety. A failure in faithfulness in a medical or financial agent could have real-world consequences.

In conclusion, the integration of RAGAs and G-Eval into the development lifecycle marks a maturation of the AI field. By moving away from subjective assessments and toward a structured, data-driven approach, developers can build agents that are not only intelligent but also trustworthy and reliable. As these tools continue to evolve, they will provide the foundation for the next generation of autonomous digital assistants, capable of navigating complex tasks with a level of precision previously reserved for human experts.