Handling Race Conditions in Multi-Agent Orchestration

The rapid evolution of artificial intelligence has shifted the industry focus from isolated large language model (LLM) prompts to sophisticated multi-agent systems (MAS). In these environments, multiple autonomous agents work in parallel to solve complex tasks, ranging from software development to automated financial analysis. However, this shift toward parallel execution has reintroduced one of the most persistent and damaging challenges in distributed computing: the race condition. As organizations increasingly deploy agentic workflows into production, the ability to identify, understand, and mitigate these concurrency errors has become a prerequisite for system reliability and data integrity.

The Mechanics of Concurrency in Agentic Workflows

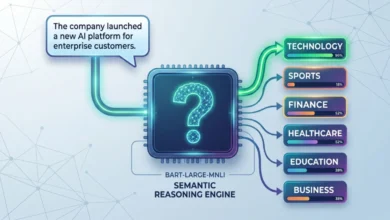

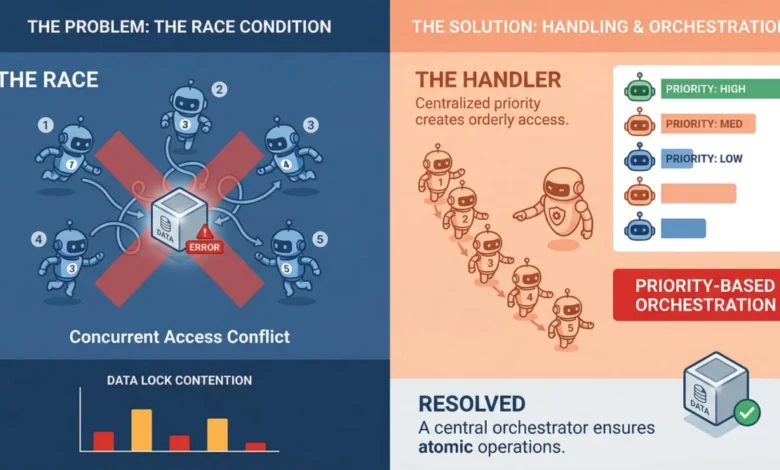

A race condition occurs when the final outcome of a process depends on the specific sequence or timing of events that the program does not control. In the context of multi-agent orchestration, this typically happens when two or more agents access a shared resource (such as a database, a memory store, or a task queue) at the same time and at least one of them modifies it. Because these agents often operate asynchronously, the order in which they complete their tasks is non-deterministic.

Unlike traditional software bugs that trigger immediate error codes, race conditions in AI systems are frequently "silent." An agent may read a document, while a millisecond later, a second agent updates that same document. When the first agent finally writes its changes, it unknowingly overwrites the second agent’s work with stale data. To the orchestrator, the operation appears successful, but the underlying data is compromised. In machine learning pipelines, where agents often work on mutable shared objects like vector databases or tool output caches, these points of contention become critical vulnerabilities.

Chronology of a Race Condition: A Failure Sequence

To understand the practical implications of these failures, it is necessary to examine the timeline of a typical race condition within a multi-agent environment. Consider a scenario where two agents, Agent A and Agent B, are tasked with updating a shared inventory count in a retail automation system.

- T+0 ms: The shared inventory counter is set to 10 units.

- T+10 ms: Agent A receives a request to process a sale of 1 unit. It reads the current state (10).

- T+15 ms: Agent B receives a separate request to process a sale of 1 unit. It also reads the current state (10) because Agent A has not yet written its update.

- T+50 ms: Agent A calculates the new value (10 – 1 = 9).

- T+55 ms: Agent B calculates the new value (10 – 1 = 9).

- T+100 ms: Agent A writes the value "9" to the database.

- T+105 ms: Agent B writes the value "9" to the database.

In this sequence, two items were sold, but the inventory only decreased by one. This "lost update" problem is the quintessential race condition. In an enterprise setting, such discrepancies can lead to significant financial loss, supply chain synchronization errors, and a total breakdown of trust in the autonomous system.
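The failure sequence can be reproduced in a few lines. The following is a minimal sketch, assuming the two agents are `asyncio` coroutines and the "database" is a plain in-memory dictionary; the names `inventory` and `process_sale` are illustrative and do not come from any particular framework.

```python
import asyncio

# Shared mutable state standing in for the inventory database (assumption).
inventory = {"count": 10}


async def process_sale(agent: str, think_time: float) -> None:
    current = inventory["count"]       # read: both agents see 10
    await asyncio.sleep(think_time)    # simulated LLM or tool latency
    inventory["count"] = current - 1   # write computed from a stale read


async def main() -> int:
    inventory["count"] = 10
    # Agent B starts its read before Agent A has written its update.
    await asyncio.gather(
        process_sale("A", 0.010),
        process_sale("B", 0.015),
    )
    return inventory["count"]


if __name__ == "__main__":
    print(asyncio.run(main()))  # prints 9, although two units were sold
```

No exception is raised and both coroutines finish normally, which is what makes this class of bug hard to notice.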

Supporting Data and the Rise of Multi-Agent Frameworks

The urgency of addressing race conditions is underscored by the explosive growth of the AI agent market. According to recent industry reports, the global autonomous AI agent market is projected to grow from approximately $5 billion in 2023 to over $29 billion by 2028, representing a compound annual growth rate (CAGR) of over 40%. This growth is driven by the adoption of frameworks such as LangChain’s LangGraph, Microsoft’s AutoGen, and CrewAI, all of which emphasize the orchestration of multiple specialized agents.

However, as these frameworks gain popularity, the complexity of managing their interactions increases. Traditional concurrent programming has decades of established tooling, including mutexes, semaphores, and atomic operations. Multi-agent LLM systems, by contrast, are often built on top of high-level async frameworks and message brokers that abstract away these low-level controls. This abstraction, while beneficial for rapid development, often obscures the underlying risks of resource contention.

Strategies for Mitigation: Locking, Queuing, and Event-Driven Design

Engineering teams commonly rely on three architectural patterns to prevent race conditions: locking, queuing, and event-driven design.

Locking Mechanisms:

The most direct approach to handling shared resource contention is through locking. This can be categorized into two types:

- Pessimistic Locking: This reserves a resource the moment an agent begins its task, preventing any other agent from accessing it until the process is complete. While this is the safest option, it can significantly reduce system throughput because every other agent must wait for the lock holder to finish.

- Optimistic Locking: This allows agents to work simultaneously but includes a version check. Each agent reads a version tag alongside the data. If the version has changed by the time the agent attempts to write its update, the system rejects the write, forcing the agent to retry with the updated data.
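Both locking styles can be sketched against the inventory example. This is a minimal illustration, assuming a single-process `asyncio` setup: `Store`, `VersionConflict`, `sell_pessimistic`, and `sell_optimistic` are hypothetical names, and a real deployment would more likely rely on database row locks or a version column than on an in-memory object.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class Record:
    value: int
    version: int = 0


class VersionConflict(Exception):
    """Raised when a write is based on an outdated version."""


class Store:
    """Hypothetical in-memory store standing in for a database row."""

    def __init__(self, value: int) -> None:
        self._record = Record(value)
        self.lock = asyncio.Lock()  # used only by the pessimistic path

    def read(self) -> Record:
        return Record(self._record.value, self._record.version)

    def write_if_unchanged(self, new_value: int, expected_version: int) -> None:
        # Compare-and-set: there is no await between the check and the write,
        # so the pair is atomic within a single event loop.
        if self._record.version != expected_version:
            raise VersionConflict
        self._record = Record(new_value, expected_version + 1)


async def sell_pessimistic(store: Store, latency: float) -> None:
    async with store.lock:             # other agents wait here
        rec = store.read()
        await asyncio.sleep(latency)   # simulated agent work
        store.write_if_unchanged(rec.value - 1, rec.version)


async def sell_optimistic(store: Store, latency: float, max_retries: int = 5) -> int:
    for attempt in range(1, max_retries + 1):
        rec = store.read()             # read the data and its version tag
        await asyncio.sleep(latency)
        try:
            store.write_if_unchanged(rec.value - 1, rec.version)
            return attempt             # number of attempts that were needed
        except VersionConflict:
            continue                   # stale read: re-read and try again
    raise RuntimeError("gave up after repeated version conflicts")


async def run(sell) -> int:
    store = Store(10)
    await asyncio.gather(sell(store, 0.010), sell(store, 0.015))
    return store.read().value


if __name__ == "__main__":
    print(asyncio.run(run(sell_pessimistic)))  # 8
    print(asyncio.run(run(sell_optimistic)))   # 8
```

In the optimistic run the second agent's first write is rejected and it succeeds on its retry, so both sales are recorded without either agent ever blocking the other.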

Queuing and Serialization:

Rather than allowing multiple agents to poll a shared task list or database directly, many orchestrators route work through a centralized queue. Systems like Redis Streams or RabbitMQ act as serialization points. By pushing tasks into a queue and having them consumed one at a time, so that only one update to a given resource is in flight at any moment, the "race" is removed from the equation. The queue ensures that even if a thousand agents are ready to work, access to the shared state remains orderly.
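The serialization idea can be illustrated in-process. The sketch below uses `asyncio.Queue` as a stand-in for a broker such as Redis Streams or RabbitMQ, and assumes a single consumer that owns the shared state; `agent`, `state_writer`, and the message shape are hypothetical.

```python
import asyncio


async def agent(name: str, queue: asyncio.Queue, latency: float) -> None:
    await asyncio.sleep(latency)  # simulated reasoning time
    # Agents request a change; they never touch the shared state directly.
    await queue.put({"agent": name, "delta": -1})


async def state_writer(queue: asyncio.Queue, state: dict) -> None:
    # The only coroutine allowed to mutate `state`; updates are applied
    # strictly one at a time, in queue order.
    while True:
        msg = await queue.get()
        if msg is None:           # sentinel: no more work
            break
        state["count"] += msg["delta"]


async def main(num_agents: int = 100) -> int:
    state = {"count": 1000}
    queue: asyncio.Queue = asyncio.Queue()
    writer = asyncio.create_task(state_writer(queue, state))
    await asyncio.gather(
        *(agent(f"agent-{i}", queue, 0.001 * (i % 5)) for i in range(num_agents))
    )
    await queue.put(None)         # tell the writer to stop
    await writer
    return state["count"]


if __name__ == "__main__":
    print(asyncio.run(main()))  # 900
```

The agents still finish in an unpredictable order, but because one writer applies every update, no update can be based on a stale read.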

Event-Driven Architectures:

Modern MAS design is moving toward event-driven patterns. In this model, agents do not constantly check a shared state; instead, they react to specific events emitted by other agents. This creates a "looser coupling" between agents, naturally reducing the window of time where two agents might attempt to modify the same resource concurrently.
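As a rough illustration of the event-driven pattern, the sketch below wires three agents together through a tiny in-process publish/subscribe bus. `EventBus`, the topic names, and the agent roles are all hypothetical; a production system would more likely use a message broker for delivery.

```python
import asyncio
from collections import defaultdict
from typing import Awaitable, Callable, Dict, List

Handler = Callable[[dict], Awaitable[None]]


class EventBus:
    """Hypothetical in-process bus used only for illustration."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._handlers[topic].append(handler)

    async def publish(self, topic: str, payload: dict) -> None:
        # Deliver the event to each subscriber in registration order.
        for handler in self._handlers[topic]:
            await handler(payload)


async def main() -> List[str]:
    bus = EventBus()
    log: List[str] = []

    async def reviewer(event: dict) -> None:
        # Runs only once a draft exists; it never polls for one.
        log.append(f"reviewed {event['doc_id']}")
        await bus.publish("review_done", {"doc_id": event["doc_id"]})

    async def publisher(event: dict) -> None:
        log.append(f"published {event['doc_id']}")

    bus.subscribe("draft_ready", reviewer)
    bus.subscribe("review_done", publisher)

    # The drafting agent finishes its work, then announces it.
    log.append("drafted doc-1")
    await bus.publish("draft_ready", {"doc_id": "doc-1"})
    return log


if __name__ == "__main__":
    print(asyncio.run(main()))
    # ['drafted doc-1', 'reviewed doc-1', 'published doc-1']
```

Each agent touches the document only after the previous stage has announced completion, so the stages do not overlap on the same resource.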

The Role of Idempotency in System Resilience

Even with robust locking and queuing, distributed systems are prone to network hiccups and timeouts. When an agent fails to receive a confirmation of a successful write, its default behavior is often to retry. Without idempotency, these retries can lead to duplicate entries or compounding errors.

Idempotency is the property whereby an operation can be applied multiple times without changing the result beyond the initial application. For AI agents, this typically involves assigning a unique operation ID to every task. If an agent retries a task that actually succeeded the first time, the system recognizes the ID and ignores the duplicate request. Building idempotency in at the agent level from the start is far more effective than attempting to retrofit it into a failing system.
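A minimal sketch of operation-ID deduplication follows, assuming the receiving service keeps a record of the IDs it has already applied. `InventoryService` and `apply_sale` are hypothetical names, and a real service would persist the ID table rather than hold it in memory.

```python
import uuid


class InventoryService:
    """Hypothetical service that deduplicates writes by operation ID."""

    def __init__(self, count: int) -> None:
        self.count = count
        self._applied: dict = {}  # operation_id -> result of the first application

    def apply_sale(self, operation_id: str, quantity: int) -> int:
        # A retry with a known ID returns the stored result and changes nothing.
        if operation_id in self._applied:
            return self._applied[operation_id]
        self.count -= quantity
        self._applied[operation_id] = self.count
        return self.count


service = InventoryService(10)
op_id = str(uuid.uuid4())              # generated once per task, reused on retry

first = service.apply_sale(op_id, 1)   # applied: count becomes 9
retry = service.apply_sale(op_id, 1)   # duplicate: ignored, still 9
```

The key design choice is that the agent generates the ID once, before its first attempt, and reuses it on every retry; an ID generated per attempt would defeat the check.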

Analysis of Implications: The Shift to "Agentic Reliability"

The challenge of race conditions represents a maturing of the AI field. We are moving away from the "magic" of LLMs and toward the rigorous engineering requirements of "Agentic Reliability." LLM response times are highly variable: one agent might take 200 milliseconds to respond while another takes 5 seconds. This makes timing-based fixes, such as inserting fixed delays between agent calls, unreliable.

The implications of these errors are particularly severe in "human-in-the-loop" systems. If an AI agent presents a user with data that is currently being modified by another agent, the user may make decisions based on a reality that no longer exists. This has led to a renewed interest in property-based testing. Unlike deterministic unit tests, property-based testing defines "invariants"—rules that must always remain true regardless of execution order. By running randomized simulations that attempt to violate these rules, developers can surface subtle consistency issues that would otherwise only appear under heavy production loads.
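The idea of checking an invariant under randomized execution orders can be sketched with the standard library alone. In the example below, agents are modeled as generators whose `yield` marks a point where the scheduler may switch to another agent; the invariant is that the final count equals the starting count minus the number of sales. All names are hypothetical, and a dedicated property-based testing library such as Hypothesis would add input generation and shrinking of failing cases on top of this.

```python
import random


def naive_agent(store):
    seen = store["count"]        # read
    yield                        # the scheduler may run another agent here
    store["count"] = seen - 1    # write from a possibly stale read


def versioned_agent(store):
    while True:
        seen, version = store["count"], store["version"]
        yield
        if store["version"] == version:   # compare-and-set
            store["count"], store["version"] = seen - 1, version + 1
            return
        # Otherwise another agent wrote first: loop and re-read.


def run_random_schedule(agent_fn, n_agents, rng):
    store = {"count": 100, "version": 0}
    running = [agent_fn(store) for _ in range(n_agents)]
    while running:
        agent = rng.choice(running)       # pick the next agent at random
        try:
            next(agent)
        except StopIteration:
            running.remove(agent)
    return store["count"]


def find_violation(agent_fn, trials=200, n_agents=4, seed=0):
    """Return (trial, final_count) for the first broken invariant, else None."""
    rng = random.Random(seed)
    for trial in range(trials):
        final = run_random_schedule(agent_fn, n_agents, rng)
        if final != 100 - n_agents:       # invariant: every sale is counted
            return trial, final
    return None


if __name__ == "__main__":
    print(find_violation(naive_agent))      # a (trial, final_count) tuple
    print(find_violation(versioned_agent))  # None
```

Because the schedule is seeded, a failing interleaving can be replayed exactly, which is difficult to achieve when the same bug only appears under production load.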

Broader Impact on Enterprise AI Adoption

As the industry moves toward autonomous agents capable of executing financial transactions, modifying legal documents, and managing industrial hardware, the margin for error disappears. A race condition in a chatbot is a minor annoyance; a race condition in an autonomous trading agent or a medical diagnostic coordinator is a catastrophe.

The move toward more resilient multi-agent orchestration will likely see a convergence of traditional distributed systems engineering and modern AI development. We can expect future orchestration frameworks to provide "out-of-the-box" support for atomic operations and distributed locking, reducing the burden on individual developers to implement these complex safety measures manually.

Ultimately, handling race conditions is not merely a technical hurdle but a foundational requirement for the next generation of automation. The systems that succeed in the marketplace will not necessarily be the ones with the most "intelligent" agents, but the ones that provide the most predictable and reliable outcomes in a chaotic, parallel-processing world. By assuming that concurrency will cause problems and planning for that chaos by default, developers can build multi-agent systems that are truly production-ready.