NVIDIA, Adobe, and WPP Expand Strategic Collaboration to Revolutionize Enterprise Marketing with Agentic AI and Secure Governance

The landscape of global enterprise marketing is undergoing a fundamental shift as artificial intelligence transitions from a tool for content generation to an autonomous force capable of orchestrating complex workflows. NVIDIA has announced an expanded strategic collaboration with Adobe and WPP, aimed at embedding "agentic AI" at the core of enterprise marketing operations. This partnership integrates NVIDIA’s accelerated computing and software stack with Adobe’s creative and customer experience platforms and WPP’s global marketing expertise to automate the creation, production, and activation of content at an unprecedented scale. Unlike traditional generative AI, which requires constant human prompting, agentic AI systems are designed to plan, execute, and refine multi-step tasks independently, providing a solution to the surging demand for hyper-personalized customer experiences across millions of unique audience segments.

The Shift Toward Agentic AI in Enterprise Environments

The evolution of artificial intelligence in the corporate sector has moved through several distinct phases. Initially, AI was utilized for predictive analytics and data sorting. The rise of Large Language Models (LLMs) introduced generative AI, allowing users to create text and images through natural language prompts. However, the next frontier—agentic AI—represents a shift toward autonomy. These AI agents do not merely respond to queries; they act as "coworkers" that can navigate software interfaces, tap into sensitive data repositories, and trigger actions across a marketing technology stack.

For global brands, this capability addresses a critical bottleneck. As digital channels multiply, the requirement for personalized content has outpaced the capacity of human creative teams. A global retailer, for instance, may need to deliver a specific offer, image, copy, and price point across millions of product and audience combinations. Traditionally, updating such a massive matrix of content would take months of manual labor and cross-departmental coordination. With the integration of agentic AI, these updates can be executed in minutes, ensuring that campaigns remain "always on" and perpetually relevant to the consumer’s immediate context.

A Three-Pillar Synergy: NVIDIA, Adobe, and WPP

The collaboration leverages the specialized strengths of three industry leaders to create a comprehensive ecosystem for agentic marketing.

NVIDIA provides the underlying infrastructure and specialized AI software. This includes NVIDIA Nemotron open models, which are optimized for high-performance enterprise tasks, and the NVIDIA Agent Toolkit, a suite of tools designed to help developers build and deploy autonomous agents. Central to this stack is the NVIDIA OpenShell secure runtime, a specialized environment that ensures AI agents operate within strictly defined boundaries.

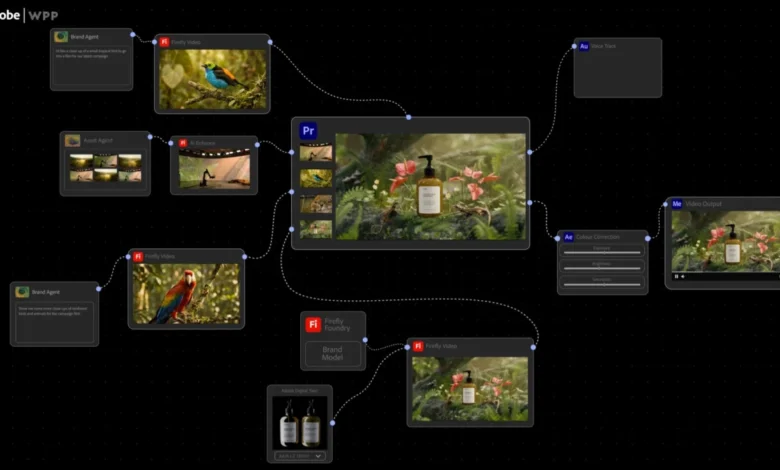

Adobe contributes its industry-standard Creative Cloud and Experience Cloud platforms. A centerpiece of this collaboration is the new Adobe CX Enterprise Coworker. This agentic tool is designed to assist marketing teams in orchestrating customer journeys, utilizing Adobe’s deep data insights to make real-time decisions about content delivery. By integrating NVIDIA’s technology, Adobe’s platforms gain the computational power and security frameworks necessary to handle complex, automated workflows that involve sensitive enterprise data.

WPP, one of the world’s largest marketing services firms, provides the bridge between technology and implementation. With its vast experience managing the brands of Fortune 500 companies, WPP ensures that the AI systems are tuned to the practical realities of global advertising. WPP’s role is to use these AI agents to drive performance intelligence, so that every piece of generated content is not only creative but also optimized for specific business outcomes, such as conversion rates or brand sentiment.

Technical Foundation: Secure Runtimes and Policy Management

One of the primary hurdles to the adoption of autonomous AI in the enterprise is the "black box" problem—the risk that an AI might act in ways that are unpredictable, non-compliant, or damaging to the brand. To mitigate this, the collaboration emphasizes "governed environments."

AI agents run on the NVIDIA OpenShell runtime, a secure, isolated environment that provides enterprise-grade control and auditability across the entire marketing lifecycle. Unlike traditional AI systems, where governance is often an afterthought, OpenShell allows for verifiable policy management. It moves beyond asking "What policy is in place?" to answering definitively "What can the agent do?"

This level of control allows enterprises to enforce "rules of engagement." For example, an agent can be programmed with strict boundaries regarding legal compliance in different geographic regions, pricing limits, and brand-specific aesthetic guidelines. If an agent attempts to generate a promotion that violates a regional regulation or uses an unapproved logo, the OpenShell environment prevents the action from being executed. This "guardrail" system ensures that speed does not come at the expense of safety or brand integrity.
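The idea of checking an agent's proposed action against explicit rules before it runs can be illustrated with a minimal Python sketch. This is not the OpenShell API, which the announcement does not describe; the `Policy` and `Promotion` classes, their fields, and the `execute_if_allowed` helper are all hypothetical names chosen for illustration, and the rule set is reduced to a regional discount limit and a logo allowlist.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """Hypothetical brand policy; field names are illustrative only."""
    max_discount_pct: dict[str, float]  # region -> highest allowed discount
    approved_logos: frozenset[str]      # logo identifiers cleared for use


@dataclass(frozen=True)
class Promotion:
    """A proposed agent action: publish one promotion in one region."""
    region: str
    discount_pct: float
    logo_id: str


def violations(policy: Policy, promo: Promotion) -> list[str]:
    """Return every rule the proposed promotion breaks (empty if none)."""
    problems = []
    limit = policy.max_discount_pct.get(promo.region)
    if limit is None:
        # Default deny: a region with no rule is treated as not permitted.
        problems.append(f"region {promo.region!r} is not covered by the policy")
    elif promo.discount_pct > limit:
        problems.append(
            f"discount {promo.discount_pct}% exceeds the {limit}% limit "
            f"for {promo.region}"
        )
    if promo.logo_id not in policy.approved_logos:
        problems.append(f"logo {promo.logo_id!r} is not approved")
    return problems


def execute_if_allowed(policy, promo, publish):
    """Call publish(promo) only when no rule is violated.

    Returns (executed, problems) so that every decision can be logged.
    """
    problems = violations(policy, promo)
    if problems:
        return False, problems
    publish(promo)
    return True, []
```

In this sketch the check sits between the agent and the side effect: `publish` is never called for a blocked promotion, and the returned list of problems gives an audit trail for why an action was refused.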

Chronology of a Growing Partnership

The current expansion is the latest milestone in a multi-year trajectory of collaboration between these entities. In May 2023, NVIDIA and WPP announced they were developing a content engine that utilized NVIDIA Omniverse and generative AI to enable creative teams to produce high-quality commercial content faster and more efficiently.

By early 2024, the focus shifted toward integrating Adobe’s Firefly (generative AI for images) into WPP’s workflows. The announcement at the current Adobe Summit marks the transition from "generative tools" to "agentic systems." The timeline reflects the rapid maturation of AI technology:

- Phase 1 (2022-2023): Infrastructure and foundational model development.

- Phase 2 (2023-2024): Integration of generative AI into creative tools (Firefly, Omniverse).

- Phase 3 (2024-Present): Deployment of agentic AI coworkers and secure runtimes for autonomous orchestration.

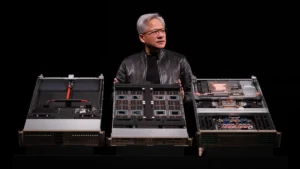

A live demonstration of the CX Enterprise Coworker, powered by the NVIDIA Agent Toolkit and OpenShell, is scheduled for the Adobe Summit day-two keynote. This presentation follows a high-profile fireside chat between NVIDIA founder and CEO Jensen Huang and Adobe CEO Shantanu Narayen, signaling the strategic importance of this partnership at the highest executive levels.

Supporting Data and Market Context

The move toward agentic AI is backed by significant shifts in the global economy and marketing spend. According to industry data, global advertising spend is expected to exceed $1 trillion by 2026. However, the cost of content production has risen sharply as brands strive to maintain a presence across social media, streaming, web, and physical retail.

Research from Gartner suggests that by 2025, 30% of outbound marketing messages from large organizations will be synthetically generated, up from less than 2% in 2022. Furthermore, a study by IDC indicates that enterprises investing in AI-driven automation see an average productivity increase of 20% to 30% in their marketing operations. By moving to an agentic model, NVIDIA, Adobe, and WPP are positioning themselves to capture the value of this increased efficiency.

The use of OpenShell and Nemotron also highlights a shift toward open-model strategies. By providing open-weight models that can be customized and run locally or in private clouds, NVIDIA allows enterprises to maintain "data sovereignty": keeping their most sensitive customer data within their own trust boundaries rather than sending it to third-party AI providers.

Analysis of Implications for the Creative Workforce

The introduction of "coworkers" in the form of AI agents naturally raises questions about the future of human creative and marketing roles. However, the narrative presented by NVIDIA, Adobe, and WPP is one of "augmentation" rather than "replacement."

In the traditional workflow, creative professionals often spend much of their time on repetitive, low-value tasks, such as resizing assets for different social media platforms or adjusting color palettes for different markets. Agentic AI is designed to absorb these mechanical tasks. This allows human marketers to focus on high-level strategy, brand storytelling, and emotional resonance, areas where AI still lacks human-level nuance.

The broader implication is a shift in the required skill set for marketing professionals. The "marketer of the future" will likely need to be a "conductor" of AI agents, defining the policies, goals, and creative direction that the agents then execute. The role of "governance" becomes a creative act in itself, as defining the boundaries for an AI system determines the brand’s digital personality and operational limits.

Conclusion: A New Foundation for Marketing

The collaboration between NVIDIA, Adobe, and WPP represents more than just a technical upgrade; it is the establishment of a new foundation for how brands interact with consumers. By combining creative intelligence (Adobe), performance intelligence (WPP), and secure infrastructure (NVIDIA), the partnership addresses the three most significant challenges of the AI era: scale, speed, and trust.

As these agentic systems become more integrated into the enterprise, the "always-on" marketing model will become the standard. Brands will no longer be limited by the speed of manual production but by the clarity of their strategy and the robustness of their governance. The result is an ecosystem where personalized, relevant, and brand-compliant content is delivered at a global scale, fundamentally altering the relationship between technology, creators, and the audience.