The Download: Cyberscammers' Banking Bypasses and Carbon Removal Troubles

Global technology is going through a series of volatile shifts, from the sophisticated exploitation of financial security protocols to a potential existential crisis in the carbon removal market. As digital infrastructure becomes central to both the global economy and international security, new vulnerabilities are emerging that challenge existing regulatory frameworks and corporate strategies. Investigations into illicit hacking services on Telegram have revealed that "Know Your Customer" (KYC) protocols, once considered the gold standard for banking security, are being systematically bypassed by scammers using low-cost digital tools. At the same time, the nascent carbon removal industry is reeling from reports that Microsoft, its dominant buyer, is pausing its purchases, raising questions about the viability of private-sector-led climate solutions. These developments, alongside reports of the first robotic captures in modern warfare and rapid advances in brain-computer interfaces, signal a period of profound transition in how humanity uses and secures its technological tools.

The Erosion of Digital Identity: Telegram-Driven Banking Frauds

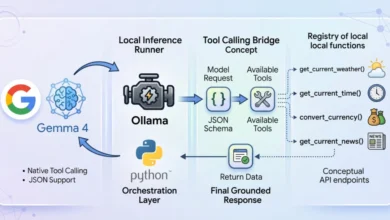

The security of the global banking system relies heavily on the ability to verify that a person is who they claim to be. This process, known as "Know Your Customer" (KYC), often involves facial recognition and "liveness" checks—tests designed to ensure that the person interacting with an app is a living human being and not a static photo or a deepfake. However, recent investigations by MIT Technology Review have uncovered a burgeoning black market on the messaging app Telegram that provides tools specifically designed to defeat these safeguards.

In one documented instance within a money-laundering facility in Cambodia, an operative successfully bypassed a banking app’s liveness check by using illicit hacking services. Despite the app requesting a real-time video verification, the scammer was able to gain access to a 30-something man’s account by holding up a static image of a woman who did not match the account holder’s profile. After a mere 90 seconds of manipulation using these Telegram-sourced tools, the security protocol was defeated.

The investigation identified at least 22 distinct channels and groups on Telegram dedicated to advertising these bypass services. The tools are often sold on a software-as-a-service (SaaS) model, allowing even low-skilled criminals to perform sophisticated identity theft. The implications for the financial sector are severe. As banks move toward fully digital onboarding and transaction verification, the ability of criminals to spoof biometric data undermines the foundational trust of digital finance. Security experts suggest that the industry must now pivot toward multi-factor authentication that does not rely solely on facial biometrics, as generative AI and specialized bypass scripts continue to evolve faster than the defensive algorithms.
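To make the experts' suggestion concrete, here is a minimal sketch of an access policy in which a face match alone is never sufficient. It is an illustration under stated assumptions, not a description of any bank's system: the factor categories, the `FactorResult` type, and the `allow_access` function are all hypothetical names introduced for this example.

```python
from dataclasses import dataclass

# Hypothetical factor categories; the names are illustrative only.
KNOWLEDGE = "knowledge"    # e.g. a PIN or password
POSSESSION = "possession"  # e.g. a hardware key or registered device
INHERENCE = "inherence"    # e.g. a face or fingerprint match


@dataclass(frozen=True)
class FactorResult:
    """Outcome of one authentication check (hypothetical type)."""
    category: str
    passed: bool


def allow_access(results, min_categories=2):
    """Allow access only if checks from enough distinct categories passed.

    Two biometric checks count as one category, so a spoofed face
    cannot satisfy the policy on its own.
    """
    passed_categories = {r.category for r in results if r.passed}
    return len(passed_categories) >= min_categories


face_only = [FactorResult(INHERENCE, True)]
face_and_device = [FactorResult(INHERENCE, True), FactorResult(POSSESSION, True)]

print(allow_access(face_only))        # False
print(allow_access(face_and_device))  # True
```

Counting distinct categories rather than individual checks is the design choice that matters here: a defeated liveness check compromises only one category, so the attacker still needs something the account holder knows or holds.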

The Carbon Removal Market: A Fragile Ecosystem Under Stress

For years, the carbon removal industry has operated under an unusual market dynamic: a single entity, Microsoft, has acted as the primary driver of demand. Reports that Microsoft is pausing its carbon removal purchases have sent shockwaves through the sector. Microsoft currently accounts for approximately 80% of all contracted carbon removal worldwide. Its involvement has been the primary signal to investors that there is a viable long-term market for technologies that can pull carbon dioxide directly from the atmosphere or sequester it through biological means.

The pause in purchasing is viewed by many as a "bombshell" that exposes the fragility of the voluntary carbon market. If the primary buyer retreats, the capital required to scale expensive technologies such as Direct Air Capture (DAC) may dry up. This comes at a critical time: the Intergovernmental Panel on Climate Change (IPCC) has stated that carbon removal will be necessary to meet the 1.5-degree Celsius warming target set by the Paris Agreement.

Industry analysts suggest that Microsoft’s pause may be a strategic realignment rather than a total withdrawal. The company may be seeking to verify the efficacy of existing contracts or waiting for more robust verification standards to emerge. However, the immediate reaction has been one of fear. Without a diversified pool of corporate buyers, the carbon removal industry remains at the mercy of a few Big Tech firms, highlighting the need for government subsidies and more stringent regulatory requirements for carbon offsets to stabilize the market.
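One way to put a number on that lack of diversification is the Herfindahl-Hirschman index (HHI), a standard concentration measure equal to the sum of squared market shares. The sketch below applies it to the buyer side of the market. Only the roughly 80% share comes from the reporting above; the even split of the remaining 20% among four other buyers is a hypothetical assumption made purely for illustration.

```python
def herfindahl_index(shares):
    """Return the Herfindahl-Hirschman index for shares given as fractions.

    The index ranges from 1/n (n equal players) up to 1.0 (one player).
    """
    total = sum(shares)
    if abs(total - 1.0) > 1e-9:
        raise ValueError("shares must sum to 1")
    return sum(s * s for s in shares)


# 0.80 is the approximate share cited in the article.
# The four 0.05 entries are a hypothetical split of the remainder.
buyer_shares = [0.80, 0.05, 0.05, 0.05, 0.05]
concentrated = herfindahl_index(buyer_shares)

# For comparison: five buyers of equal size (also hypothetical).
diversified = herfindahl_index([0.20] * 5)

print(f"dominant-buyer market: {concentrated:.2f}")
print(f"evenly split market:   {diversified:.2f}")
```

However the remaining 20% is divided, the dominant buyer alone contributes 0.8 × 0.8 = 0.64 to the index, so the result cannot fall below that figure while one buyer holds an 80% share.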

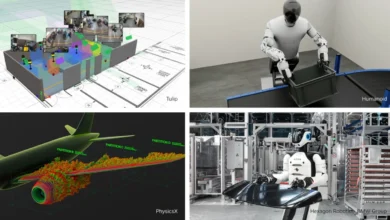

The Dawn of Autonomous Capture: Robotics on the Battlefield

The conflict in Ukraine has long been a testing ground for new military technologies, but recent reports suggest a historic milestone has been reached: the first instance of Russian troops surrendering to fully automated robotic units. Ukrainian officials claim that a coordinated attack, managed by autonomous systems rather than direct human piloting, successfully captured Russian army positions.

While drones have been used for surveillance and kinetic strikes for years, the transition to autonomous systems that can manage the complexities of ground capture represents a paradigm shift in warfare. It suggests that the "kill chain"—the process of identifying, tracking, and engaging a target—is becoming increasingly compressed and automated.

This development aligns with a broader trend across Europe and the West. Defense ministries are increasingly investing in autonomous "swarm" technologies and automated defense systems. However, the rise of robots capable of taking prisoners raises significant ethical and legal questions. The Geneva Conventions, which govern the treatment of prisoners of war, were written with human-to-human interaction in mind. The prospect of "automated kill chains" and robotic captors necessitates a re-evaluation of international humanitarian law to ensure that machines are programmed to respect the rights of surrendering combatants.

Cognitive Frontiers: BCIs and Virtual Navigation

Parallel to the advances in robotics is rapid progress in brain-computer interfaces (BCIs). Recent research has demonstrated that monkeys equipped with BCI implants can navigate complex virtual worlds using only their thoughts. The research, reported by New Scientist, involves electrodes implanted in the motor cortex that translate neural signals into digital commands.

The success of these trials is a precursor to human applications, particularly for individuals with severe paralysis or neurodegenerative diseases. By bypassing damaged spinal cords or nerves, BCIs could allow patients to control prosthetic limbs or digital interfaces with the same fluidity as a natural limb. However, the technology still faces a critical test in human trials: the longevity of the implants. The human body’s immune system often treats these electrodes as foreign objects, leading to scarring that can degrade the signal over time. Companies like Neuralink and Blackrock Neurotech are currently racing to develop "biocompatible" materials that can remain functional within the human brain for decades.

The Infrastructure Crisis: Data Centers and Public Backlash

As the demand for AI and cloud computing explodes, the physical infrastructure required to support these technologies is facing unprecedented public opposition. In the United States, particularly in "Data Center Alley" in Northern Virginia, protests and litigation are increasingly blocking new projects. Residents are raising concerns over the immense energy consumption of these facilities, their impact on local water supplies for cooling, and the noise pollution generated by massive cooling fans.

The backlash is encapsulated by the "internet diet" movement, where citizens argue that the environmental and social costs of maintaining massive data centers outweigh the benefits of corporate digital expansion. Sylvia Whitt, a 78-year-old retiree involved in the protests, noted that the public should not have to bear the environmental burden of corporate "internet stuff."

This friction has forced tech giants to look for radical solutions. Some companies are exploring the possibility of placing data centers in space, where solar energy is abundant and cooling would not strain local resources on Earth. Others are looking at underwater data centers or facilities powered by small modular nuclear reactors (SMRs).

Global Tech Pulse: Nuclear Moons and Rapid Charging

The push for new energy sources is not limited to Earth. NASA has recently announced plans to deploy nuclear reactors on the Moon. These fission systems are intended to provide a reliable power source for future lunar bases and long-duration spaceflight missions. Unlike solar power, which is interrupted by the 14-day lunar night, nuclear power offers a continuous energy supply, which is essential for life support and scientific operations.

Back on Earth, the electric vehicle (EV) market is seeing a breakthrough in charging technology. The Chinese automaker BYD has begun rolling out five-minute EV charging capabilities. This development addresses a primary hurdle to EV adoption: the long wait times at traditional charging stations, which compound "range anxiety." If five-minute charging becomes the industry standard, it could significantly accelerate the transition away from internal combustion engines.
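A rough calculation shows why a five-minute charge is demanding. The sketch below computes the average power needed to add a given amount of energy in a given time. The 60 kWh pack size and the 10% to 80% charging window are hypothetical values chosen for illustration, not BYD specifications, and the calculation ignores charging losses and the tapering of power as a battery fills.

```python
def average_charging_power_kw(capacity_kwh, start_soc, end_soc, minutes):
    """Average power (kW) needed to charge between two states of charge.

    start_soc and end_soc are fractions between 0 and 1.
    Losses and charge-curve tapering are deliberately ignored.
    """
    if not 0.0 <= start_soc < end_soc <= 1.0:
        raise ValueError("need 0 <= start_soc < end_soc <= 1")
    if minutes <= 0:
        raise ValueError("minutes must be positive")
    energy_kwh = capacity_kwh * (end_soc - start_soc)
    return energy_kwh / (minutes / 60.0)


# Hypothetical example: 60 kWh pack, charged from 10% to 80%.
five_minute = average_charging_power_kw(60.0, 0.10, 0.80, 5)
thirty_minute = average_charging_power_kw(60.0, 0.10, 0.80, 30)

print(f"5-minute charge:  about {five_minute:.0f} kW")
print(f"30-minute charge: about {thirty_minute:.0f} kW")
```

Under these assumptions the five-minute session needs roughly 500 kW on average, six times the power of the same charge spread over half an hour, which is why charging speed depends on the station and the grid connection as much as on the car.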

Furthermore, the economic impact of the AI boom is reshaping global markets. Taiwan’s stock market recently surpassed the United Kingdom’s in total value, reaching over $4 trillion. This surge is driven almost entirely by Taiwan’s dominance in the AI chip manufacturing sector, led by TSMC. As AI chips become the "new oil" of the 21st century, the geopolitical and economic importance of the Taiwan Strait continues to grow.

Conclusion: The Ethics of a Synthetic Future

As technology advances into the realms of AI-generated content and synthetic sexuality, the questions facing society are moving beyond mere technical feasibility. The rise of AI-generated pornography and the potential for "synthetic relationships" challenge traditional definitions of human interaction. Some lawmakers are attempting to use existing obscenity laws to curb this trend, but the decentralized nature of AI makes enforcement difficult.

The overarching theme of these diverse developments is the erosion of the boundary between the "real" and the "synthetic." Whether it is a scammer bypassing a bank with a digital mask, a robot capturing a soldier, or a monkey navigating a virtual world, reliance on digital proxies is deepening. The challenge for the coming decade will be to build systems of verification, ethics, and infrastructure that can withstand the pressures of this new, highly automated reality. The goal is no longer just to innovate, but to ensure that these innovations do not outpace our ability to govern them.