Harvard Researchers Identify Optimal Randomness Levels to Enhance Robotic Swarm Efficiency in Crowded Environments

In the rapidly evolving field of autonomous systems, a persistent challenge has hindered the deployment of large-scale robotic fleets: the paradox of density. Adding more units to a task, such as cleaning up an environmental disaster site or managing a high-speed logistics warehouse, theoretically increases productivity, but physical reality often dictates otherwise. Beyond a certain density threshold, the limited space itself becomes a bottleneck. Robots begin to obstruct one another, creating localized traffic jams that cascade into system-wide stagnation. However, a study from Harvard University’s John A. Paulson School of Engineering and Applied Sciences (SEAS) suggests that the solution to this mechanical gridlock is not more complex coordination, but rather a calculated dose of disorder.

The research, recently published in the Proceedings of the National Academy of Sciences (PNAS), reveals that introducing a "Goldilocks zone" of randomness into robot movement patterns significantly improves the efficiency of swarms operating in tight quarters. By allowing robots to move with a controlled level of unpredictability, or "noise," researchers found that agents can navigate around one another more effectively than if they followed perfectly straight, programmed paths. This counterintuitive finding provides a new framework for designing decentralized robotic systems that mimic the self-organizing capabilities of biological entities.

The Mechanical Bottleneck: Why More is Sometimes Less

The study was born out of a fundamental question in applied mathematics and robotics: how many autonomous agents can occupy a finite space before their collective utility begins to decline? In traditional robotics, the focus is often on precision and path optimization. Engineers typically program robots to take the most direct route from point A to point B. While this works seamlessly for a single robot, it creates catastrophic failure points in high-density swarms. When multiple robots attempt to occupy the same optimal path, they collide or enter "deadlock" states in which no robot can move until another moves first.

To address this, the Harvard team, led by L. Mahadevan, the Lola England de Valpine Professor of Applied Mathematics, Organismic and Evolutionary Biology, and Physics, turned to the principles of statistical mechanics. The team sought to understand whether the erratic, seemingly disorganized movement seen in natural swarms, such as schools of fish or colonies of ants, offered a functional advantage that could be replicated in silicon and steel.

Methodology: From Computer Simulations to Physical Reality

The research was spearheaded by Lucy Liu, an applied mathematics Ph.D. student at SEAS, under the guidance of Senior Research Fellow Justin Werfel. The team adopted a multi-tiered approach that began with high-fidelity computer simulations before moving to physical laboratory experiments.

In the simulation phase, the researchers created environments populated by "agents"—digital representations of robots. Each agent was programmed with a simple set of instructions: move toward a designated target, and upon arrival, immediately head toward a new, randomly assigned destination. This setup mimicked the continuous, high-intensity task cycles found in automated sorting centers or search-and-rescue operations.

The critical variable in these simulations was the level of "noise" or randomness applied to the agents’ trajectories. The researchers tested three primary scenarios:

- Zero Noise (Perfect Precision): Agents moved in perfectly straight lines toward their goals. While initially fast, these agents quickly formed rigid clusters. These clusters acted as barriers, eventually leading to massive traffic jams that brought the goal attainment rate to a near-halt.

- High Noise (Maximum Randomness): Agents moved in erratic, "drunkard’s walk" patterns. While these agents rarely formed permanent traffic jams because they were constantly shifting, their lack of direction meant they took an excessively long time to reach their targets, resulting in low overall efficiency.

- The "Goldilocks Zone" (Optimal Noise): Agents moved generally toward their goals but were allowed a specific degree of lateral deviation. This slight "wiggle" allowed them to bump off one another and navigate around obstacles without losing their primary heading.
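The three scenarios above differ only in how much an agent's heading is allowed to deviate from the straight line to its goal. The following is a minimal sketch of one such movement step, not the researchers' actual model: it assumes the noise is Gaussian and applied to the heading angle, and the function name `noisy_step` and all parameter values are hypothetical.

```python
import math
import random

def noisy_step(pos, target, speed, noise_std, rng):
    """Advance one time step toward `target` with a randomly perturbed heading.

    noise_std is the standard deviation (in radians) of the Gaussian
    perturbation; this noise model is an assumption made for illustration.
    """
    dx, dy = target[0] - pos[0], target[1] - pos[1]
    heading = math.atan2(dy, dx)           # direct bearing to the goal
    heading += rng.gauss(0.0, noise_std)   # the lateral "wiggle"
    return (pos[0] + speed * math.cos(heading),
            pos[1] + speed * math.sin(heading))

rng = random.Random(0)
start, goal = (0.0, 0.0), (10.0, 0.0)
# Illustrative noise levels only; they are not values from the study.
for label, noise_std in [("zero noise", 0.0),
                         ("moderate noise", 0.3),
                         ("high noise", 3.0)]:
    pos = start
    for _ in range(5):
        pos = noisy_step(pos, goal, speed=1.0, noise_std=noise_std, rng=rng)
    print(f"{label}: distance to goal after 5 steps = {math.dist(pos, goal):.2f}")
```

With `noise_std=0.0` the agent travels in a perfectly straight line. Larger values trade directness for the ability to slip sideways, which is the trade-off the three scenarios describe.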

Identifying the Goldilocks Zone of Efficiency

The simulations revealed a distinct phase transition in the swarm’s behavior. The researchers found that at a specific level of movement variability, the swarm reached a peak "goal attainment rate." In this state, short-lived clusters of robots would form and then quickly dissolve, maintaining a steady, fluid-like flow across the environment.
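To make the "goal attainment rate" concrete, here is a toy simulation sketch. It is not the study's simulation and is not expected to reproduce its numbers. It assumes disc-shaped agents in a square arena, Gaussian heading noise, and a simple rule that cancels any move that would overlap another agent. The function name `simulate` and every parameter value are hypothetical.

```python
import math
import random

def simulate(n_agents, noise_std, steps=300, size=10.0, radius=0.3,
             speed=0.1, seed=0):
    """Return goals reached per agent per time step in a toy crowded arena."""
    rng = random.Random(seed)

    def rand_point():
        return (rng.uniform(radius, size - radius),
                rng.uniform(radius, size - radius))

    # Place agents without overlap by rejection sampling.
    # (Assumes the requested density is low enough for this to terminate.)
    pos = []
    while len(pos) < n_agents:
        p = rand_point()
        if all(math.dist(p, q) >= 2 * radius for q in pos):
            pos.append(p)
    goals = [rand_point() for _ in range(n_agents)]

    reached = 0
    for _ in range(steps):
        for i in range(n_agents):
            x, y = pos[i]
            gx, gy = goals[i]
            heading = math.atan2(gy - y, gx - x) + rng.gauss(0.0, noise_std)
            # Clamp the proposed position so agents stay inside the arena.
            nx = min(max(x + speed * math.cos(heading), radius), size - radius)
            ny = min(max(y + speed * math.sin(heading), radius), size - radius)
            # Cancel the move if it would overlap any other agent.
            blocked = any(j != i and math.dist((nx, ny), pos[j]) < 2 * radius
                          for j in range(n_agents))
            if not blocked:
                pos[i] = (nx, ny)
            # On arrival, count the goal and assign a new random destination.
            if math.dist(pos[i], goals[i]) < radius:
                reached += 1
                goals[i] = rand_point()
    return reached / (n_agents * steps)

# Sweep a few illustrative noise levels (not values from the study).
for noise_std in (0.0, 0.3, 1.0, 3.0):
    rate = simulate(n_agents=40, noise_std=noise_std)
    print(f"noise_std={noise_std:.1f}  goal attainment rate={rate:.4f}")
```

Sweeping `noise_std` at a fixed density is the kind of experiment that would expose a peak if one exists. Whether this toy model shows one depends on the assumed collision rule and parameters.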

Using the data gathered from these simulations, the Harvard team developed a series of mathematical formulas. These equations allow engineers to calculate the "sweet spot" for any given swarm based on two primary factors: the density of the robots in the space and the intended speed of the operation. This predictive model transforms what was once a trial-and-error process into a precise engineering calculation.

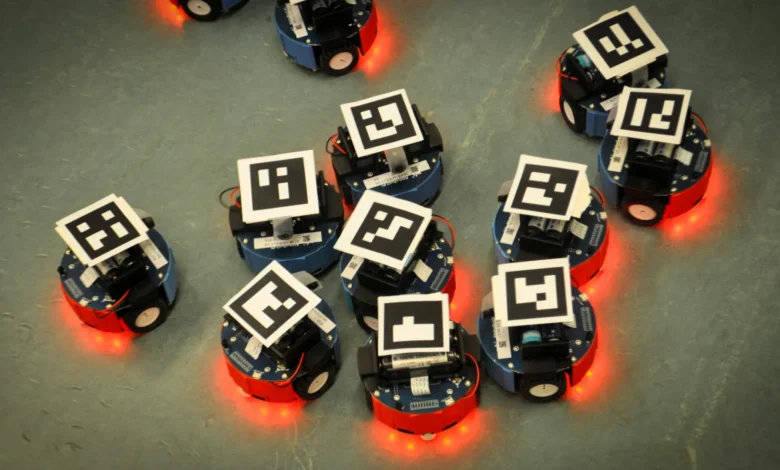

To validate the theoretical findings, the team collaborated with Federico Toschi, a physicist at Eindhoven University of Technology. Together, they conducted real-world experiments using small, wheeled robots. Each robot was equipped with a QR code, allowing an overhead camera system to track its position in real time and provide updated coordinates. Despite the inherent friction, battery fluctuations, and mechanical lag of physical robots, the results mirrored the simulations: the robots with a programmed "wiggle" outperformed those attempting to move in rigid, straight lines.

Chronology of the Research and Development

The journey from a mathematical hypothesis to a peer-reviewed publication followed a rigorous timeline:

- Phase 1: Conceptualization (2021-2022): The team began exploring the intersection of active matter physics and swarm robotics, identifying congestion as the primary barrier to scalability.

- Phase 2: Simulation and Modeling (Late 2022): Lucy Liu developed the initial algorithms to test varying degrees of stochastic (random) movement. This phase identified the non-linear relationship between noise and efficiency.

- Phase 3: Physical Implementation (2023): Collaboration with Eindhoven University of Technology began. The team moved from the digital "perfect" environment to the "noisy" physical world, testing the theory on hardware.

- Phase 4: Data Analysis and Synthesis (Early 2024): The mathematical formulas were refined to ensure they could be applied to different robot types and environmental sizes.

- Phase 5: Publication (Late 2024): The findings were finalized and published in PNAS, providing the broader scientific community with a new toolset for swarm management.

Supporting Data and Technical Implications

The study’s data suggests that in high-density environments—where robots occupy more than 30% of the available floor space—the introduction of roughly 10% to 15% movement noise can increase the goal attainment rate by up to 40% compared to zero-noise systems.
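For a sense of scale, the occupancy figure can be turned into a robot count with simple arithmetic. The sketch below assumes robots with circular footprints; the helper names and the example dimensions (5 cm radius, 4 square meters of floor) are hypothetical and are not taken from the study.

```python
import math

def area_fraction(n_robots, robot_radius, floor_area):
    """Fraction of the floor covered by n circular robot footprints."""
    return n_robots * math.pi * robot_radius ** 2 / floor_area

def robots_for_fraction(fraction, robot_radius, floor_area):
    """Smallest robot count whose footprints cover at least `fraction` of the floor."""
    return math.ceil(fraction * floor_area / (math.pi * robot_radius ** 2))

# Hypothetical example: 5 cm radius robots on a 4 m^2 floor.
n = robots_for_fraction(0.30, robot_radius=0.05, floor_area=4.0)
print(f"{n} robots cover {area_fraction(n, 0.05, 4.0):.1%} of the floor")
```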

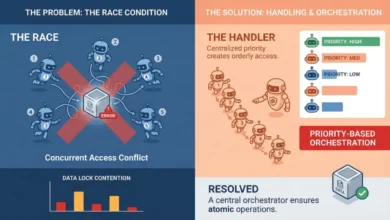

Furthermore, the research highlights a shift in how we perceive "intelligence" in robotics. Traditionally, solving congestion required "centralized intelligence," where a master computer tracks every robot and calculates non-conflicting paths for all of them. This requires immense computing power and high-speed communication. The Harvard study demonstrates that "decentralized intelligence"—relying on simple local rules and inherent variability—can achieve similar or better results with a fraction of the technological overhead.

Statements from the Research Team

Professor L. Mahadevan emphasized the biological inspiration behind the work, noting that the study bridges the gap between physics and ethology (the study of animal behavior). "Understanding how active matter, whether it is a swarm of ants, a herd of animals, or a group of robots, becomes functional and [executes] tasks in crowded environments using the principles of self-organization, is relevant to many questions in behavioral ecology," Mahadevan said. "Our study suggests strategies that might well be much broader than the instantiation we have focused on."

Lucy Liu, the study’s lead author, highlighted the practical safety implications. "I have long been interested in designing safer and more efficient crowded spaces," she noted. Her analysis suggests that these mathematical tools could eventually be applied to human-centric environments, such as optimizing the flow of pedestrians in subway stations or managing autonomous vehicle traffic at complex intersections.

Broader Impact and Future Applications

The implications of this research extend far beyond the laboratory. As industries increasingly rely on automation, the ability to pack more robots into a space without losing efficiency could carry substantial commercial value.

1. Logistics and Warehousing:

Companies like Amazon and Ocado operate massive fulfillment centers where hundreds of robots zip across a grid. By applying the "Goldilocks" noise theory, these companies could potentially increase the density of their robotic fleets, allowing for smaller warehouse footprints or higher throughput during peak seasons.

2. Environmental Remediation:

In the event of an oil spill, a swarm of small, inexpensive autonomous skimmers could be deployed. Because these environments are often turbulent and unpredictable, a decentralized system that thrives on randomness would be far more resilient than a rigid, centrally controlled fleet.

3. Urban Planning and Traffic Flow:

The mathematical models developed by the Harvard team could assist urban planners in designing "smart" walkways or roads. By understanding how individual variability prevents "clumping," planners can design spaces that naturally discourage bottlenecks.

4. Nanomedicine:

On a microscopic scale, researchers are developing nanobots designed to deliver drugs directly to tumors. These bots must navigate the incredibly crowded and "noisy" environment of the human bloodstream. Applying the principles of optimal randomness could be key to ensuring these bots reach their targets without causing biological "traffic jams" in the capillaries.

Conclusion

The Harvard study challenges the long-held engineering dogma that precision is always the path to efficiency. By demonstrating that a little bit of "messiness" is actually the lubricant that keeps a crowded system moving, Liu, Mahadevan, and their colleagues have provided a blueprint for the next generation of autonomous swarms. As we move toward a future where robotic fleets become a common sight in our cities and factories, the ability to embrace and calculate randomness may be the very thing that keeps our high-tech world moving forward.

Funding for this research was provided by the National Science Foundation Graduate Research Fellowship Program, the Simons Foundation, and the Henri Seydoux Fund, marking a significant investment in the future of autonomous systems and applied mathematics.